The Child Mind Institute, a leading independent nonprofit dedicated to transforming the lives of children and families struggling with mental health and learning disorders, has officially detailed the philosophical and technical framework behind Mirror, its proprietary digital journaling tool. Positioned not as a replacement for clinical intervention but as a sophisticated "bridge" to human connection, Mirror represents a significant departure from the prevailing "engagement-first" model of the technology industry. By prioritizing clinical validation and safety over user retention, the Institute is attempting to set a new ethical benchmark for the deployment of artificial intelligence in sensitive health contexts.

The development of Mirror comes at a critical juncture for mental health care. According to the Centers for Disease Control and Prevention (CDC), nearly one in three U.S. high school girls reported seriously considering attempting suicide in 2021, an increase of nearly 60% over the previous decade. Mental health professionals are also in chronically short supply: nearly half of the U.S. population lives in a designated mental health professional shortage area. As a result, digital tools have often been touted as the solution. However, the Child Mind Institute argues that many existing tools follow the tech industry's "move fast and break things" ethos, an approach it believes is fundamentally incompatible with the nuances of psychological care.

The Architecture of Human-Centered AI

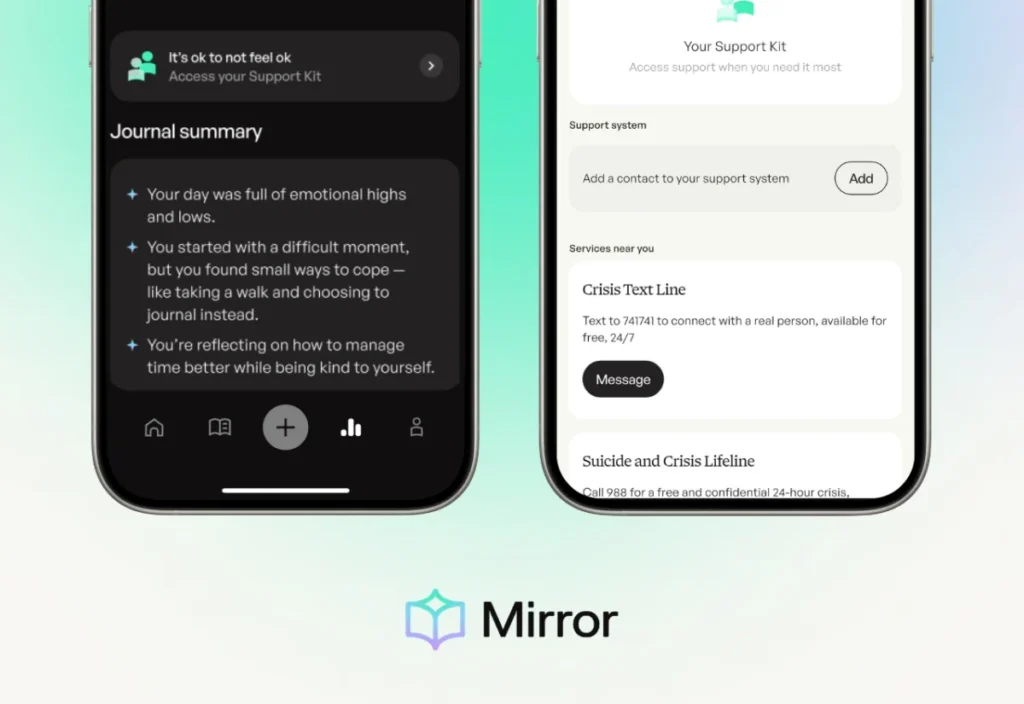

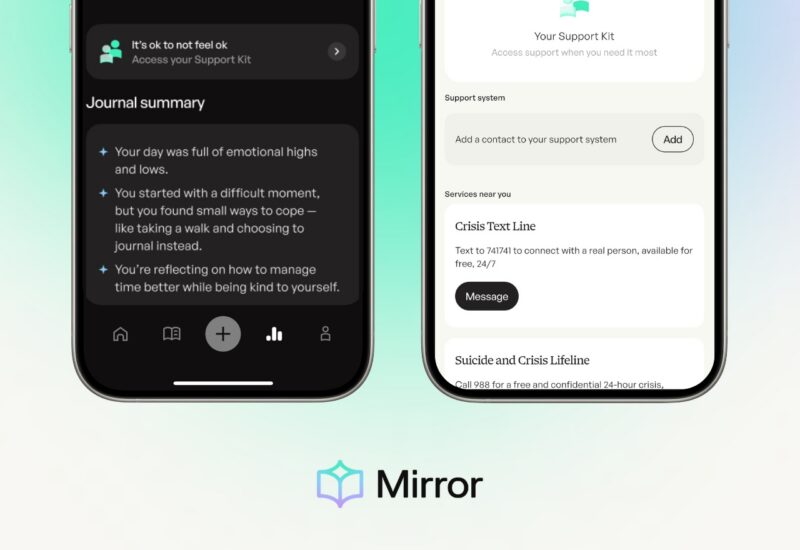

At its core, Mirror is a journaling platform that uses generative AI to offer users reflections, summaries, and "remixes" of their own writing. The Child Mind Institute emphasizes, however, that the technology’s primary role is to help users organize their internal experiences so they can better articulate them to a therapist, caregiver, or peer. The underlying philosophy rejects the notion that an algorithm can empathize. Instead, the AI functions as a mirror: it reflects language back and identifies patterns without attempting to simulate a human soul or provide a diagnosis.

The tool’s functionality rests on what the Institute calls "architectural privacy" and "clinical sensitivity." Unlike consumer AI products built for fluid conversation, Mirror is designed with explicit constraints. When the system detects markers of sadness or hopelessness, it does not try to "cheer up" the user. Instead, it classifies the entry internally so that the user is offered direct links to clinical resources. This design choice puts safety ahead of sophisticated dialogue, ensuring that the AI does not inadvertently minimize a user’s distress with generic platitudes.
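The source does not say how Mirror detects distress. The following Python is therefore only a minimal sketch of the routing behavior described above, built on stated assumptions. A hypothetical keyword list stands in for what would, in practice, be a clinically validated classifier. The resource labels and the `reflect` callable are placeholders as well. The sketch illustrates one design choice: a flagged entry receives resource links and no generated reassurance.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Hypothetical marker phrases for illustration only; a real system would use
# a clinically validated classifier rather than keyword matching.
DISTRESS_MARKERS = ("hopeless", "worthless", "so sad", "nothing matters")

# Hypothetical placeholder labels for the clinical resources surfaced.
CLINICAL_RESOURCES = ("Find a therapist", "Talk to a trusted adult")


@dataclass
class EntryResult:
    distress_detected: bool
    # AI reflection shown to the user; left as None when distress is
    # detected, so no generic reassurance is generated.
    reflection: Optional[str]
    resource_links: List[str] = field(default_factory=list)


def detect_distress(entry: str) -> bool:
    """Internal classification step: True if any marker phrase appears."""
    text = entry.lower()
    return any(marker in text for marker in DISTRESS_MARKERS)


def handle_entry(entry: str, reflect: Callable[[str], str]) -> EntryResult:
    """`reflect` stands in for whatever model call produces a reflection."""
    if detect_distress(entry):
        # Route to resources instead of trying to "cheer up" the user.
        return EntryResult(True, None, list(CLINICAL_RESOURCES))
    return EntryResult(False, reflect(entry))
```

Keeping classification separate from response generation means the model is never even asked to respond to a flagged entry.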

Chronology of Development and the Shift Toward On-Device Processing

The development of Mirror is part of a broader multi-year initiative by the Child Mind Institute’s Applied Digital Technologies team to advance scientific discovery through innovation. The project began with the recognition that traditional journaling, while effective, can be a barrier for young people who struggle to turn complex emotions into narrative.

- Conceptualization Phase: Researchers identified the need for a tool that could lower the "activation energy" required for therapeutic journaling.

- Initial Integration: Early versions used frontier models such as Google’s Gemini, Anthropic’s Claude, and OpenAI’s GPT models (which power ChatGPT). These models provided the necessary linguistic sophistication but raised concerns about cloud-based privacy and offline reliability.

- The Ethical Pivot: Recognizing the risk of "hallucinations" (where an AI generates plausible-sounding but false information) and the privacy concerns of cloud processing, the team began transitioning toward smaller open-source models with fewer parameters.

- Current Status: The team is now refining these smaller models so they can run on-device. The goal is to ensure that a user’s most private thoughts never leave their own hardware, a level of security that cloud-reliant competitors cannot match.

Data and the Efficacy of Digital Journaling

The decision to focus on journaling is supported by decades of psychological research. Studies of "expressive writing," pioneered by psychologist James Pennebaker in the 1980s, have repeatedly found that translating emotional experiences into words can lead to improved immune function, reduced blood pressure, and better mental health outcomes.

However, the Child Mind Institute’s internal data and clinical observations suggest that adolescents can find the "blank page" intimidating. By using AI to summarize a week’s worth of entries, Mirror gives users a "digest" they can bring into a therapy session. This cuts the time spent on "reporting" during expensive clinical hours, so therapist and patient can move more quickly into deeper work. According to industry analysis, such efficiencies could increase the "therapeutic throughput" of the existing mental health workforce by 15 to 20% if widely adopted.
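Here is a minimal sketch of how such a weekly digest might be assembled, assuming each entry is stored with a date. The `Entry` type and the `weekly_digest` function are hypothetical. So is the `summarize` callable, which stands in for whatever model Mirror actually uses. The point is that the digest is limited to a fixed seven-day window and put in chronological order before any summarization happens.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Callable, List


@dataclass
class Entry:
    day: date
    text: str


def weekly_digest(entries: List[Entry], today: date,
                  summarize: Callable[[str], str]) -> str:
    """Build a one-week digest to bring into a therapy session.

    `summarize` is a hypothetical stand-in for the model call; the source
    does not describe Mirror's summarization model or prompt.
    """
    start = today - timedelta(days=6)  # seven days, including today
    week = sorted((e for e in entries if start <= e.day <= today),
                  key=lambda e: e.day)
    if not week:
        return "No entries this week."
    # Date-stamp each entry so the summary can preserve chronology.
    combined = "\n".join(f"{e.day.isoformat()}: {e.text}" for e in week)
    header = (f"Digest {start.isoformat()} to {today.isoformat()} "
              f"({len(week)} entries)")
    return header + "\n" + summarize(combined)
```

Passing an identity function as `summarize` makes it easy to inspect exactly what text would be handed to the model.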

Safety as "Friction": A Reversal of Tech Industry Norms

One of the most radical aspects of Mirror’s design is its intentional use of "friction." In engagement-driven software design, friction (anything that slows the user down or leads them away from the app) is treated as a failure. In Mirror, friction is a safety feature.

When the AI detects high-risk entries, such as those suggesting self-harm or acute crisis, the system is programmed to constrain itself. In these moments, the AI refuses to provide reflections or "remixes" and instead offers what the developers call an "off-ramp." The interface shifts to prioritize emergency support, offering one-click access to the 988 Suicide & Crisis Lifeline, the Crisis Text Line, and the user’s pre-designated trusted contacts.
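The off-ramp can be sketched as a mapping from an assessed risk level to what the screen is allowed to show. This is a hypothetical illustration, not Mirror’s code. The `Risk` levels, the `ScreenState` fields, and the `contact:` action scheme are all assumptions. The phone numbers are the public ones: 988 for the Lifeline and 741741 for the Crisis Text Line. For high-risk entries the sketch disables all AI output and presents support options as buttons the user must tap. It never dials or messages anyone on its own, consistent with the Institute’s position that a machine should not "make the call."

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Tuple


class Risk(Enum):
    """Hypothetical risk levels produced by an upstream classifier."""
    LOW = "low"
    HIGH = "high"  # e.g. entries suggesting self-harm or acute crisis


@dataclass(frozen=True)
class ScreenState:
    # Whether any AI-generated text (reflections, remixes) may be shown.
    ai_output_enabled: bool
    # (label, action) pairs rendered as one-tap buttons. Nothing is dialed
    # or sent unless the user taps one.
    support_options: Tuple[Tuple[str, str], ...]


def screen_for(risk: Risk, trusted_contacts: List[str]) -> ScreenState:
    if risk is Risk.HIGH:
        crisis_lines = (
            ("988 Suicide & Crisis Lifeline", "tel:988"),
            ("Crisis Text Line", "sms:741741"),
        )
        # "contact:" is a hypothetical in-app scheme for trusted contacts.
        contacts = tuple((name, f"contact:{name}") for name in trusted_contacts)
        # Off-ramp: the model constrains itself and only support options remain.
        return ScreenState(ai_output_enabled=False,
                           support_options=crisis_lines + contacts)
    return ScreenState(ai_output_enabled=True, support_options=())
```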

"A machine should never ‘make the call’ or force a clinical intervention," the Institute stated in its philosophy brief. This approach respects user autonomy while ensuring that the "wellness space" of the app does not accidentally become an unregulated clinical environment. By telling users "It’s okay to not feel okay," the app validates the experience without attempting to "fix" it through code.

The "No Remix" Rule for Minors

Another key ethical boundary established by the Child Mind Institute is the "no remix" rule for users under the age of 18. The "remix" feature allows a user to see their journal entry rewritten in a different voice or format—for example, as a poem or from a different perspective. While this can provide helpful cognitive distance for adults, the Institute identified a "differential risk" for adolescents.

Adolescence is a period of rapid brain development, particularly in the prefrontal cortex, which supports critical thinking and the ability to put information in context. The Institute’s experts were concerned that an AI-generated remix could inadvertently validate harmful thoughts, or that a young person could mistake it for authoritative, objective truth. By disabling this feature for minors, Mirror acknowledges that the developmental stage of the user must dictate the capabilities of the AI.
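Here is a minimal sketch of the age gate, assuming the app knows the user’s birth date. The feature names and functions are hypothetical. Only the under-18 threshold comes from the Institute’s rule. Age is computed in whole years, so remix becomes available only on or after the user’s 18th birthday.

```python
from datetime import date
from typing import Set

ADULT_AGE = 18  # threshold for the "no remix" rule described above


def age_on(birth_date: date, today: date) -> int:
    """Whole years of age on `today`."""
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    return today.year - birth_date.year - (0 if had_birthday else 1)


def enabled_features(birth_date: date, today: date) -> Set[str]:
    # Hypothetical feature names: reflections and summaries stay available
    # to all users, while "remix" is withheld from anyone under 18.
    features = {"reflect", "summarize"}
    if age_on(birth_date, today) >= ADULT_AGE:
        features.add("remix")
    return features
```

The gate removes a capability rather than adding a warning, which mirrors the Institute’s choice to let developmental stage decide what the AI may do.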

Technical Analysis and Future Implications

The move toward "low-parameter" models is perhaps the most significant technical trend highlighted by the Mirror project. Massive models like GPT-4 require enormous computing power and are typically accessed over the internet through cloud services. Smaller models, by contrast, can be optimized to run locally on a smartphone.

This shift has three major implications for the future of mental health technology:

- Privacy: If the model lives on the device, journal data does not need to be sent to a corporate server, which makes it easier to comply with strict HIPAA (Health Insurance Portability and Accountability Act) and GDPR (General Data Protection Regulation) requirements.

- Reliability: Mental health crises do not only happen when a user has a strong 5G signal. On-device AI ensures that safety features and supportive reflections are available in "airplane mode" or in remote areas.

- Transparency: Open-source models allow for greater "auditability." Independent researchers can examine a model’s weights and, where it has been published, its training data to check for hidden biases that could harm vulnerable populations.

Official Responses and Industry Context

Although the Child Mind Institute is a nonprofit, its entry into the AI space has drawn attention from the broader "MedTech" industry. Analysts suggest that Mirror could serve as a "gold standard" for future regulation. The U.S. Food and Drug Administration (FDA) has a complex framework for "Software as a Medical Device" (SaMD), but many wellness apps fall into a regulatory gray area.

Clinicians have generally responded with cautious optimism. Dr. Elena Rossi, a child psychologist not affiliated with the project, noted, "The danger of many AI tools is that they create an ‘empathy trap’ where the user feels heard by a machine and stops seeking human help. The Child Mind Institute’s focus on using AI as a ‘bridge’ rather than a ‘destination’ is exactly the direction the field needs to take."

Conclusion: The Limits of Artificial Intelligence

The Child Mind Institute concludes its philosophy brief by asserting that the most advanced AI is the one that knows its limits. As Mirror moves toward broader implementation, it serves as a reminder that, in mental health, technology is most effective when it is invisible, acting as a conduit for the human relationships that remain the primary engine of healing.

The Institute remains committed to a process of continual assessment and refinement. By rejecting the "engagement" metrics that define Silicon Valley, Mirror seeks to prove that a digital tool’s success should be measured not by how long a user stays in the app, but by how well-equipped they are to leave it and engage with the real world. For those currently struggling, the Institute reinforces that resources like 988 and the Crisis Text Line are available 24/7, and that Mirror is designed to make reaching those human lifelines easier, not to stand in their way.