A new study led by Professor Karim Jerbi of the Department of Psychology at the Université de Montréal, with the participation of AI pioneer Yoshua Bengio, addresses a question increasingly asked across industry and academia: can generative artificial intelligence systems, such as ChatGPT, truly generate original ideas? The research, presented as the largest direct comparison yet between human creativity and that of large language models (LLMs), reveals a significant shift in the capabilities of AI while reaffirming the advantage held by the most creative human minds.

Published in Scientific Reports, a prestigious journal within the Nature Portfolio, the findings indicate that generative AI systems have achieved a remarkable level of sophistication, now capable of outperforming the average human on specific measures of creativity. This marks a pivotal moment in the ongoing evolution of AI. However, the study also provides a crucial counterpoint: the most creative individuals within the human cohort consistently demonstrate a clear and substantial advantage over even the most advanced AI models currently available.

The Evolving Landscape of AI Creativity: A Historical Context

For decades, the concept of artificial intelligence possessing genuine creativity was largely confined to the realm of science fiction. Early AI systems, built on rule-based logic and deterministic algorithms, were primarily tools for calculation, data processing, and automation. Their outputs, while efficient, lacked the spontaneity, intuition, and divergent thinking characteristic of human creativity. The prevailing view was that creativity—the ability to generate novel and valuable ideas—was intrinsically linked to consciousness, emotion, and lived experience, elements thought to be beyond the grasp of machines.

However, the advent of machine learning, particularly deep learning, began to challenge these assumptions. The development of neural networks capable of identifying complex patterns in vast datasets paved the way for generative AI. Systems like AlphaGo demonstrated impressive strategic creativity in games, but it was the explosion of Large Language Models (LLMs) in the 2020s that truly brought the debate about AI creativity into the mainstream. Models such as GPT-3, and subsequently GPT-4, Claude, and Gemini, showcased an unprecedented ability to generate coherent, contextually relevant, and even surprisingly original text, images, and code. Suddenly, AI wasn’t just processing information; it was creating it.

This rapid advancement intensified the need for rigorous, empirical studies to assess AI’s creative capacities against a human benchmark. Previous research often focused on qualitative comparisons or smaller-scale tests, leading to anecdotal evidence rather than statistically robust conclusions. The Université de Montréal study sought to fill this gap, providing a comprehensive, data-driven framework to objectively compare human and machine creativity at an unprecedented scale.

A Landmark Study: Methodology and Scale

To conduct this ambitious comparison, Professor Karim Jerbi’s team, with the vital participation of co-first authors postdoctoral researcher Antoine Bellemare-Pépin (Université de Montréal) and PhD candidate François Lespinasse (Université Concordia), evaluated several leading large language models, including GPT-4, Claude, Gemini, and others. Their performance was then juxtaposed against the results gathered from an astonishingly large pool of over 100,000 human participants. This sheer volume of human data is critical, lending immense statistical power and generalizability to the study’s conclusions.

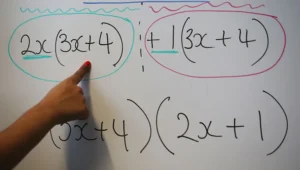

The primary instrument for evaluating divergent linguistic creativity was the Divergent Association Task (DAT), a psychological test specifically designed to measure an individual’s capacity to generate diverse and original ideas from a single prompt. Developed by study co-author Jay Olson from the University of Toronto, the DAT is a robust and widely recognized measure within cognitive psychology. It asks participants, whether human or AI, to list ten words that are as semantically unrelated as possible. The challenge lies not just in listing distinct words, but in selecting words whose meanings are truly far apart, thereby demonstrating a broad and flexible cognitive search.

For instance, a highly creative response might include words like "galaxy, fork, freedom, algae, harmonica, quantum, nostalgia, velvet, hurricane, photosynthesis." Each word evokes a different domain, sensory experience, or conceptual space, which minimizes semantic overlap. In contrast, a less creative response might list "cat, dog, mouse, bird, fish, hamster, snake, lizard, frog, rabbit": ten distinct animals that all belong to the same narrow semantic category. The DAT is simple, yet it taps into cognitive processes that underlie creative thinking across many domains, not just vocabulary. Performance on the task has been shown to correlate strongly with results on other established creativity tests used in fields such as writing, idea generation, and creative problem-solving. Its practical advantages, including a quick completion time (two to four minutes) and online accessibility, were instrumental in gathering such a large dataset from the general public.
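To make "semantically far apart" concrete, here is a minimal Python sketch. The article does not spell out how the DAT is scored, so this assumes one common approach: average the cosine distance between every pair of word vectors and scale the result to 0-100. The three-dimensional `TOY_EMBEDDINGS` are invented for illustration and are not real word embeddings; `divergence_score` is a hypothetical helper name.

```python
import math
from itertools import combinations


def cosine_distance(u, v):
    """Return 1 - cosine similarity of two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def divergence_score(words, embeddings):
    """Average pairwise cosine distance of the words, scaled by 100.

    Assumption: this mirrors the general idea of a semantic-distance
    score; it is not claimed to be the study's exact procedure.
    """
    vectors = [embeddings[word] for word in words]
    pairs = list(combinations(vectors, 2))
    return 100.0 * sum(cosine_distance(u, v) for u, v in pairs) / len(pairs)


# Invented toy vectors: the animals point in nearly the same direction,
# while the unrelated words are mutually orthogonal.
TOY_EMBEDDINGS = {
    "cat": (1.0, 0.1, 0.0),
    "dog": (0.9, 0.2, 0.0),
    "mouse": (0.8, 0.1, 0.1),
    "galaxy": (0.0, 1.0, 0.0),
    "fork": (0.0, 0.0, 1.0),
    "freedom": (1.0, 0.0, 0.0),
}

narrow = divergence_score(["cat", "dog", "mouse"], TOY_EMBEDDINGS)
broad = divergence_score(["galaxy", "fork", "freedom"], TOY_EMBEDDINGS)
print(f"same-category list: {narrow:.1f}")
print(f"unrelated list:     {broad:.1f}")
```

With these toy vectors the same-category list scores far lower than the unrelated list, which is the contrast the two example responses above are meant to show.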

Beyond the quantifiable metrics of the DAT, the researchers extended their investigation into more complex and qualitative creative activities. To ascertain whether AI’s success in simple word association could translate to more realistic creative endeavors, they challenged both AI systems and human participants with creative writing tasks. These included composing haiku (a traditional three-line poetic form with specific syllable counts, demanding conciseness and evocative imagery), writing concise movie plot summaries that capture originality and intrigue, and producing short stories. These tasks require not just linguistic fluency but also narrative coherence, thematic depth, and imaginative flair, providing a broader test of generative capability.

AI Reaches Average Human Creativity Levels: A Turning Point

The findings from the DAT delivered a clear and, for many, startling revelation. "Our study shows that some AI systems based on large language models can now outperform average human creativity on well-defined tasks," explains Professor Karim Jerbi. Specifically, models such as GPT-4, developed by OpenAI, achieved DAT scores that exceeded those of the average human participant. This signifies a genuine turning point: AI is no longer merely imitating human creativity; on certain quantifiable measures, it has reached parity with, and even surpassed, the statistical average of human performance.

This result, as Professor Jerbi acknowledges, "may be surprising—even unsettling." The idea that a machine could be "more creative" than a typical person challenges long-held notions about human uniqueness and intellectual dominance. For years, creativity was considered a bastion of human intellect, a quality that set us apart from machines. The study’s data, however, necessitates a re-evaluation of this perception, at least concerning certain forms of divergent thinking. The implications for education, professional skills, and our understanding of intelligence are profound.

The Enduring Edge: Peak Human Creativity Remains Unmatched

Despite AI’s impressive ascent, the study provides an equally significant, and perhaps more reassuring, observation: "even the best AI systems still fall short of the levels reached by the most creative humans." The detailed analysis conducted by Bellemare-Pépin and Lespinasse revealed a striking pattern: while some AI models now surpass the average person, peak creativity remains firmly within the human domain.

When researchers analyzed the more creative half of the human participants (the top 50 percent), their average scores on the DAT surpassed those of every AI model tested. The gap widened further among the top 10 percent of the most creative individuals. These participants generated sets of diverse and unrelated concepts that AI, even with its vast training data and sophisticated algorithms, could not match. This suggests that while AI can mimic and even exceed average performance, the truly exceptional, boundary-pushing creative leaps still originate from the human brain.
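The comparison described here can be sketched in a few lines of Python. Every number below is synthetic and chosen only to reproduce the qualitative pattern (a model above the overall human mean but below the top half and the top tenth); none of it is data from the study, and `top_fraction_mean` is a hypothetical helper.

```python
from statistics import mean


def top_fraction_mean(scores, fraction):
    """Mean of the highest-scoring `fraction` of participants."""
    ranked = sorted(scores, reverse=True)
    count = max(1, int(len(ranked) * fraction))
    return mean(ranked[:count])


# Synthetic scores for ten imaginary participants (not study data).
human_scores = [60, 62, 65, 68, 70, 72, 75, 78, 82, 90]
# Synthetic score for an imaginary model (not study data).
model_score = 73

overall = mean(human_scores)
top_half = top_fraction_mean(human_scores, 0.5)
top_tenth = top_fraction_mean(human_scores, 0.1)

print(f"all humans: {overall}")
print(f"model:      {model_score}")
print(f"top 50%:    {top_half}")
print(f"top 10%:    {top_tenth}")
```

The point of the sketch is that "beats the average" and "beats the best" are different questions: a single score can sit above the mean of the whole group while still falling below the mean of its upper half.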

This pattern was not limited to the DAT. In the more complex creative writing challenges—composing haiku, crafting movie plot summaries, and writing short stories—a similar trend emerged. While AI systems occasionally produced outputs that exceeded the performance of average humans in terms of technical competence or even basic originality, the most skilled human creators consistently delivered work that was not only stronger in quality but also more nuanced, emotionally resonant, and genuinely original in its conceptualization. Human judges, assessing these qualitative outputs, could discern the "human touch" that AI struggled to emulate at the highest levels.

The Tunable Nature of AI Creativity: Temperature and Prompt Engineering

One of the study’s fascinating secondary findings addressed the question of AI creativity’s malleability: Is it a fixed attribute, or can it be influenced and shaped? The research unequivocally demonstrated that AI creativity is indeed adjustable, primarily through two key mechanisms: the model’s "temperature" setting and sophisticated prompt engineering.

The "temperature" parameter in generative AI models acts as a dial for predictability versus adventurousness in output. At lower temperature settings, the model adheres more closely to its learned patterns, producing safer, more conventional, and highly probable responses. This is akin to a conservative approach, minimizing novelty in favor of coherence. Conversely, raising the temperature encourages the model to explore less probable word choices and conceptual associations. Responses become more varied, less predictable, and more exploratory, allowing the system to deviate from familiar ideas and potentially generate more original content. For example, a low-temperature setting might yield a movie plot summary that is generic and predictable, while a high-temperature setting could generate a summary with unexpected twists or genre-bending elements. This ability to tune creativity offers significant potential for users to guide AI towards specific creative outcomes, from conventional to avant-garde.
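The effect of temperature can be illustrated with the textbook formulation, in which a model's raw scores (logits) are divided by the temperature before being turned into probabilities with a softmax. The article does not describe how any of the tested models implement this, so the sketch below is an assumption about the general mechanism, and the three logits are invented.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Invented scores for three candidate next words, most likely first.
logits = [2.0, 1.0, 0.1]

cold = softmax_with_temperature(logits, 0.5)  # low temperature
hot = softmax_with_temperature(logits, 2.0)   # high temperature

print("low temperature: ", [round(p, 3) for p in cold])
print("high temperature:", [round(p, 3) for p in hot])
```

At the low setting most of the probability mass lands on the top-ranked word, while at the high setting the distribution flattens and the least likely word is sampled much more often, which is the "predictable versus adventurous" dial described above.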

Furthermore, the study highlighted the profound impact of "prompt engineering"—how instructions are formulated and presented to the AI. Prompts that explicitly encouraged models to engage in deeper cognitive processes or adopt unconventional perspectives led to significantly higher creativity scores. For instance, instructing models to "think about word origins and structure using etymology" resulted in more unexpected and divergent associations. By prompting the AI to consider the historical roots and semantic evolution of words, it was nudged towards less obvious connections. Similarly, prompts like "Generate ten wildly disparate concepts that challenge conventional thinking" or "Imagine you are an artist attempting to redefine beauty, list your inspirations" can push AI beyond its default settings. These results underscore a critical point: AI creativity is not an autonomous function but a collaborative one. Human guidance, through skilled prompting and parameter adjustment, remains central to unlocking and shaping AI’s creative potential.

Implications for Creative Industries and the Future of Work

The study offers a nuanced and balanced perspective on the widespread anxieties regarding artificial intelligence potentially displacing creative professionals. While AI systems can now match or exceed average human creativity on specific tasks, their inherent limitations and reliance on human direction suggest a future of augmentation rather than replacement.

"Even though AI can now reach human-level creativity on certain tests, we need to move beyond this misleading sense of competition," asserts Professor Karim Jerbi. He argues that "Generative AI has above all become an extremely powerful tool in the service of human creativity: it will not replace creators, but profoundly transform how they imagine, explore, and create—for those who choose to use it."

This vision positions AI not as a competitor, but as a powerful creative assistant. For writers, AI can act as a brainstorming partner, generating initial concepts, plot twists, or character profiles. For designers, it can rapidly produce countless variations of a logo, color scheme, or layout, freeing up human designers to focus on refinement and conceptual oversight. Musicians can leverage AI to explore new melodic structures or harmonic progressions. The technology has the potential to democratize creativity, lowering the barrier to entry for individuals who may lack certain technical skills but possess strong conceptual ideas.

Economically, this shift implies the emergence of new job roles such as AI prompt engineers, AI ethicists, and AI-assisted creative directors. Productivity gains in creative fields are likely, but these gains will be realized by those who embrace AI as a tool, integrating it into their workflows. Intellectual property and copyright concerns, while not directly addressed by this study’s findings, remain a significant implication, necessitating new legal frameworks and ethical guidelines for AI-generated content.

Ultimately, the study compels a re-evaluation of the very definition of creativity itself. "By directly confronting human and machine capabilities, studies like ours push us to rethink what we mean by creativity," concludes Professor Karim Jerbi. If AI can generate novel ideas, does that automatically equate to "creativity" in the human sense, which often involves intent, consciousness, emotional depth, and a unique worldview shaped by lived experience? The research highlights that while AI excels at generating novelty based on patterns learned from vast datasets, the originality that stems from profound human insight, intuition, and subjective experience remains a distinct and superior quality at the highest echelons of creative output.

About the Study and Future Directions

The pivotal paper, titled "Divergent creativity in humans and large language models," was officially published in Scientific Reports on January 21, 2026. This extensive collaborative effort brought together leading scientists from several esteemed institutions, including the Université de Montréal, Université Concordia, University of Toronto Mississauga, Mila (Quebec AI Institute), and Google DeepMind.

Professor Karim Jerbi, who is also an associate professor at Mila, led the multidisciplinary research team. Antoine Bellemare-Pépin of the Université de Montréal and François Lespinasse of Université Concordia served as co-first authors, driving the analysis. The research also benefited from the participation of Yoshua Bengio, the founder of Mila and LoiZéro and a recognized pioneer in deep learning, the foundational technology underpinning modern AI systems like ChatGPT. His involvement underscores the study’s scientific rigor and its potential impact on the future trajectory of AI research.

This landmark study not only provides concrete answers to pressing questions about AI’s creative capacities but also opens new avenues for future research. Scientists will undoubtedly delve deeper into understanding the cognitive mechanisms behind human and AI creativity, exploring how different AI architectures influence creative output, and developing more sophisticated methods for evaluating nuanced forms of creativity. As AI continues its rapid evolution, studies like this will be crucial in guiding its development responsibly and harnessing its power to augment, rather than diminish, human potential across all creative domains.