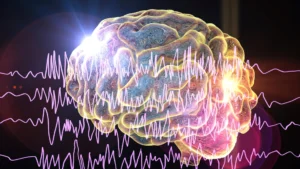

Researchers at the Skolkovo Institute of Science and Technology (Skoltech) have presented a mathematical model of memory whose results could inform the development of artificial intelligence and robotics, as well as our understanding of human cognition. In a study published in the journal Scientific Reports, they propose a striking hypothesis: an optimal number of sensory inputs, namely seven, maximizes the number of distinct concepts that can be stored in memory. By that measure, humanity’s traditional five senses may fall short of peak cognitive efficiency. The finding challenges long-held assumptions about perception and memory and invites new interdisciplinary research at the intersection of neuroscience, computer science, and theoretical physics.

The Genesis of the Discovery: Modeling Memory Engrams

Skoltech, a young but increasingly influential science and technology university located in Skolkovo, Russia, has established itself as a hub for interdisciplinary research on pressing global challenges. Its AI researchers, in particular, have been developing theoretical frameworks that bridge biological intelligence and artificial systems. This latest study applies that approach to one of the most enigmatic phenomena in biology: memory.

The team built on a century-old scientific tradition centered on the fundamental units of memory known as "engrams." The German zoologist Richard Semon introduced the concept in the early 20th century to describe a hypothetical persistent change in the brain, produced by a stimulus, that forms the physical substrate of a memory. Semon’s idea was initially theoretical, but modern neuroscience, aided by advances in neuroimaging and optogenetics, has begun to uncover the neural correlates of engrams, identifying them as sparse populations of neurons distributed across brain regions that fire together when a particular memory is recalled or reinforced.

In the Skoltech model, an engram represents a specific concept characterized by a unique set of features. For humans, these features are closely tied to sensory experience. Consider a banana: it is defined by its yellow color, curved shape, sweet smell, soft texture, and distinctive taste. Each of these attributes contributes one dimension to the "conceptual space" in which the banana, like every other memory, resides. In this framework, a banana is effectively a five-dimensional object, one of countless multidimensional memories in the brain’s mental landscape. Note that the dimensions count features rather than senses: color and shape, for example, are both visual. This abstraction makes it possible to analyze the dynamics and capacity of memory with rigorous mathematical tools.
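
The banana example can be sketched as a small data structure. This is a minimal illustration, assuming a concept is stored as named feature values plus a radius describing how sharp or diffuse its engram is; the class name `Engram`, the feature names, and the numeric values are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Engram:
    """Hypothetical toy engram: a concept as a point in a conceptual space.

    `features` maps a feature name to a normalized value in [0, 1];
    `radius` stands in for how diffuse (large) or sharp (small) the engram is.
    """
    name: str
    features: dict[str, float]
    radius: float = 0.1

    @property
    def dimension(self) -> int:
        # One dimension of the conceptual space per feature.
        return len(self.features)

    def center(self, axes: list[str]) -> tuple[float, ...]:
        # Coordinates of the engram's center along a chosen ordering of axes.
        return tuple(self.features[a] for a in axes)


# Illustrative values only: five features make the banana a 5-D object.
banana = Engram(
    name="banana",
    features={"color": 0.9, "shape": 0.7, "smell": 0.6, "texture": 0.3, "taste": 0.8},
)
print(banana.dimension)  # 5
```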

The model also captures the dynamic nature of memory. Engrams are not static; they evolve over time. An engram becomes sharper and more distinct with repeated exposure and reinforcement, and grows more diffuse and harder to access when sensory input from the outside world rarely triggers it. This mirrors everyday learning and forgetting, reflecting the continuous interaction between our internal cognitive structures and the external environment.
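
A toy version of this sharpening and blurring can be written as a one-step update rule. The rule below, including the names `update_radius`, `sharpen`, and `blur` and their default values, is a hypothetical illustration of the qualitative behavior described above, not the equations of the Skoltech model.

```python
def update_radius(radius: float, stimulated: bool,
                  sharpen: float = 0.8, blur: float = 0.05,
                  r_min: float = 0.01, r_max: float = 1.0) -> float:
    """One time step of a hypothetical engram update rule.

    A matching stimulus shrinks the radius (the concept becomes sharper);
    without a stimulus the radius grows (the memory becomes more diffuse).
    The result is clamped to [r_min, r_max].
    """
    if stimulated:
        radius *= sharpen
    else:
        radius += blur
    return min(max(radius, r_min), r_max)


# Two reinforcing exposures followed by three steps without stimulation.
r = 0.5
for stimulated in [True, True, False, False, False]:
    r = update_radius(r, stimulated)
print(round(r, 2))  # 0.47
```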

The Seven-Dimensional Revelation: Optimal Memory Capacity

The crux of the Skoltech team’s discovery is their mathematical demonstration that engrams evolve toward a stable "steady state." Professor Nikolay Brilliantov of Skoltech AI, a co-author of the study, explained: "We have mathematically demonstrated that the engrams in the conceptual space tend to evolve toward a steady state, which means that after some transient period, a ‘mature’ distribution of engrams emerges, which then persists in time." In other words, after an initial period of formation and refinement, our memories settle into a relatively stable configuration within the conceptual space.
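
The idea of a transient period followed by a persistent "mature" state can be illustrated with the simplest possible population model, in which new engrams form at a constant rate and a fixed fraction fades at each step. This is a hypothetical mean-field toy, not the paper’s dynamics; the function name and parameters are assumptions, and the sketch only shows what convergence to a steady state looks like.

```python
def simulate_engram_count(n0: float, birth: float, death: float, steps: int) -> list[float]:
    """Iterate N[t+1] = N[t] + birth - death * N[t] (hypothetical toy dynamics).

    `birth` is the number of new engrams formed per step and `death` the
    fraction lost per step. For 0 < death < 1 the count rises monotonically
    from below toward the fixed point birth / death, where gains equal losses.
    """
    history = [n0]
    n = n0
    for _ in range(steps):
        n = n + birth - death * n
        history.append(n)
    return history


traj = simulate_engram_count(n0=0.0, birth=5.0, death=0.1, steps=200)
print(round(traj[-1], 3))  # 50.0, i.e. birth / death
```

After the transient, the count stays at the fixed point, which is the sense in which a steady state "persists in time" in this toy.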

It was during the analysis of the ultimate capacity of this conceptual space that the researchers stumbled upon their most surprising result. "As we consider the ultimate capacity of a conceptual space of a given number of dimensions, we somewhat surprisingly find that the number of distinct engrams stored in memory in the steady state is the greatest for a concept space of seven dimensions. Hence the seven senses claim," Brilliantov explained.

Put simply: if objects in the world are described by a finite number of features, corresponding to the dimensions of a conceptual space, and the goal is to maximize the number of distinct concepts that space can retain, the optimal number of dimensions is seven. A larger capacity allows a wider range of unique experiences and information to be distinguished and stored, which in turn supports a richer, more nuanced understanding of the world. The model places this maximum at exactly seven dimensions, which leads to the researchers’ conclusion that seven is the optimal number of senses for maximizing memory capacity.
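
It may seem odd that a quantity could peak at one specific dimension rather than growing without bound. A textbook geometric fact offers an analogy: the surface area of the unit sphere in n dimensions, 2π^(n/2)/Γ(n/2), is largest at n = 7, and the volume of the unit n-ball, π^(n/2)/Γ(n/2 + 1), is largest at n = 5. This is an analogy only; it is not the derivation used in the Skoltech paper, and the sketch below simply checks those two maxima numerically.

```python
import math


def unit_sphere_area(n: int) -> float:
    """Surface area of the unit sphere bounding the n-dimensional ball: 2*pi^(n/2) / Gamma(n/2)."""
    return 2 * math.pi ** (n / 2) / math.gamma(n / 2)


def unit_ball_volume(n: int) -> float:
    """Volume of the unit n-dimensional ball: pi^(n/2) / Gamma(n/2 + 1)."""
    return math.pi ** (n / 2) / math.gamma(n / 2 + 1)


dims = range(1, 16)
best_area = max(dims, key=unit_sphere_area)
best_volume = max(dims, key=unit_ball_volume)
print(best_area, best_volume)  # 7 5
```

Both quantities first grow with dimension and then shrink toward zero, because the Gamma function in the denominator eventually outpaces the power of π in the numerator.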

The researchers stress that this numerical result is robust. According to their analysis, the optimum of seven dimensions does not depend on the details of the model, such as the precise properties of the conceptual space or the nature of the stimuli that provide sensory impressions. This suggests that the number seven reflects the intrinsic structure and dynamics of memory engrams themselves. One important caveat concerns how capacity is counted: engrams of different sizes that share a common conceptual center are treated as representing similar concepts and are counted as a single distinct memory. This way, the count reflects unique concepts rather than mere variations of one concept.
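
The counting rule in the caveat above can be sketched as follows. It assumes each engram is stored as a center (a tuple of coordinates in conceptual space) and a radius, and that "common center" means centers within a small tolerance `tol`; the function name and the tolerance are hypothetical choices for illustration.

```python
import math


def count_distinct_engrams(engrams, tol=1e-6):
    """Count distinct concepts, merging engrams that share a common center.

    `engrams` is a list of (center, radius) pairs, where `center` is a tuple of
    coordinates. An engram whose center lies within `tol` of an already-counted
    center is treated as the same concept, whatever its radius.
    """
    representatives = []
    for center, _radius in engrams:
        if not any(math.dist(center, rep) <= tol for rep in representatives):
            representatives.append(center)
    return len(representatives)


engrams = [
    ((0.2, 0.4, 0.6), 0.05),
    ((0.2, 0.4, 0.6), 0.20),  # same center, larger radius: same concept
    ((0.8, 0.1, 0.3), 0.10),
]
print(count_distinct_engrams(engrams))  # 2
```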

Historical Context and Related Research

The notion of "senses" extends far beyond the traditional five (sight, hearing, touch, taste, and smell) that we are commonly taught. Neuroscientists and cognitive psychologists have long recognized that humans possess a much richer sensory palette. Proprioception, for instance, is the sense of the relative position of one’s own body parts and of the effort used in movement. Nociception is the perception of pain, thermoception the sense of temperature, and chronoception the perception of the passage of time. Balance relies on the vestibular system, and internal states such as hunger and thirst are likewise mediated by distinct sensory pathways. The Skoltech model’s "seven senses" therefore need not mean adding two new senses to the traditional five; it concerns the optimal number of features used to characterize concepts, which could be drawn from this broader pool of existing or even novel sensory modalities.

Historically, the study of memory has been a cornerstone of psychology and neuroscience. From Hermann Ebbinghaus’s pioneering work on the forgetting curve in the late 19th century to Karl Lashley’s search for the "engram" in the mid-20th century, researchers have continually sought to unravel how information is encoded, stored, and retrieved. Wilder Penfield’s surgical explorations of the brain in the 1940s and 1950s, during which he reportedly evoked vivid memories by stimulating specific brain regions, further fueled the quest to localize and understand memory traces. The Skoltech model offers a new mathematical lens on these long-standing questions, providing a quantitative framework for understanding memory capacity.

In artificial intelligence, the limitations of current memory systems are a significant hurdle. Large language models can process vast amounts of data, but their "memory" is often ephemeral and lacks the associative, context-dependent, long-term retention of biological brains. Many AI systems also struggle with catastrophic forgetting, in which learning new information causes them to lose previously acquired knowledge, and they lack the deep, integrated "understanding" of concepts that comes from multisensory experience. The Skoltech research speaks to these challenges by proposing an optimal structural framework for organizing memory.

Implications for Artificial Intelligence and Robotics

The implications of the Skoltech model for artificial intelligence and robotics could be substantial. Current AI systems, particularly neural networks, learn from input data of a fixed dimensionality. For a robot, that input might combine data from cameras (visual), microphones (auditory), lidar (spatial), and tactile sensors (haptic). The Skoltech findings suggest that optimizing the number of distinct feature types that define a concept within an AI’s cognitive architecture could markedly improve its ability to learn, retain, and process information efficiently.

For AI, this could mean designing neural networks that organize information into a seven-dimensional conceptual space. Rather than simply feeding in raw data from multiple sensors, AI architects might focus on extracting and weighting seven key feature types for each "concept" the system needs to learn. This could yield more robust learning algorithms that are less prone to catastrophic forgetting and that form more stable, integrated representations of the world. Consider an AI that diagnoses medical conditions: if it could optimally integrate seven distinct types of patient data (visual symptoms, auditory cues such as a cough, tactile findings such as skin texture, chemical markers such as blood test results, temporal progression, spatial localization of pain, and genetic predispositions), its diagnostic accuracy and "understanding" of a patient’s condition might improve substantially.
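
Extracting and weighting seven feature types, as in the diagnostic example, could look like the sketch below. The feature-type names follow the list in the text, but the patient values, the per-type weights, and the use of a weighted Euclidean distance to compare concepts are assumptions made for illustration; the Skoltech paper does not prescribe any of them.

```python
import math

# Hypothetical feature types, one per axis of a seven-dimensional concept space,
# following the diagnostic example in the text.
FEATURE_TYPES = (
    "visual", "auditory", "tactile", "chemical",
    "temporal", "spatial", "genetic",
)


def concept_vector(features: dict[str, float]) -> list[float]:
    """Order extracted feature values along the seven axes (missing types -> 0.0)."""
    return [features.get(name, 0.0) for name in FEATURE_TYPES]


def weighted_distance(a: list[float], b: list[float], weights: list[float]) -> float:
    """Weighted Euclidean distance between two concept vectors."""
    return math.sqrt(sum(w * (x - y) ** 2 for x, y, w in zip(a, b, weights)))


weights = [1.0, 0.5, 0.5, 2.0, 1.0, 1.0, 1.5]  # hypothetical per-type weights
patient = concept_vector({"visual": 0.7, "auditory": 0.9, "chemical": 0.4})
reference = concept_vector({"visual": 0.6, "auditory": 0.8, "chemical": 0.5})
print(round(weighted_distance(patient, reference, weights), 3))  # 0.187
```

Keeping the number of axes fixed at seven, rather than at the raw sensor dimensionality, is the design choice the paragraph above describes; the weights are where an engineer would encode which feature types matter most.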

In robotics, the potential applications are even more tangible. Robots are increasingly equipped with sophisticated sensor arrays, but the challenge lies in integrating and interpreting this disparate input to build a coherent picture of the environment. A robot designed with a seven-dimensional conceptual processing unit might form richer, more stable "memories" of its surroundings. An autonomous vehicle, for example, might combine visual data, lidar scans, radar signals, acoustic input, haptic feedback from the road, GPS coordinates, and environmental chemical sensors (such as pollutant detectors) to navigate and interact with its environment with greater precision and adaptability. Professor Brilliantov has even speculated that future humans might evolve senses for "radiation or magnetic fields," an idea equally applicable to robotic systems that could use such perceptions for environmental monitoring, space exploration, or complex industrial operations. The model offers a theoretical basis for designing robotic "brains" optimized for memory capacity and conceptual understanding.
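
One hypothetical way a robot could turn repeated seven-channel observations into stable memories is to reinforce the nearest stored memory when a new observation is close enough and to create a new memory otherwise. This is a generic online-clustering sketch swapped in for illustration, not a method from the Skoltech study; the names `PlaceMemory` and `observe`, the running-mean update, and the `threshold` value are all assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class PlaceMemory:
    center: list[float]  # seven fused sensor features (hypothetical encoding)
    count: int = 1       # number of observations that reinforced this memory


def observe(memories: list[PlaceMemory], obs: list[float], threshold: float = 0.2) -> int:
    """Reinforce the nearest stored memory if it is within `threshold`, else store a new one.

    Returns the index of the memory that was reinforced or created.
    """
    if memories:
        i, best = min(enumerate(memories), key=lambda im: math.dist(im[1].center, obs))
        if math.dist(best.center, obs) <= threshold:
            # Move the center toward the new observation (running mean of observations).
            best.count += 1
            best.center = [c + (o - c) / best.count for c, o in zip(best.center, obs)]
            return i
    memories.append(PlaceMemory(center=list(obs)))
    return len(memories) - 1


# Channels might be: visual, lidar, radar, acoustic, haptic, GPS, chemical.
memories: list[PlaceMemory] = []
first = observe(memories, [0.10] * 7)   # new memory at index 0
second = observe(memories, [0.12] * 7)  # close to memory 0: reinforced
third = observe(memories, [0.90] * 7)   # far from memory 0: new memory at index 1
print(len(memories), memories[0].count)  # 2 2
```

Repeated similar observations sharpen a single memory instead of cluttering storage with near-duplicates, which loosely parallels the article’s idea of stable, distinct engrams.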

Understanding the Human Mind: Evolutionary Perspectives and Future Cognition

While the Skoltech model is mathematical, its speculative application to human cognition opens fascinating avenues for discussion. The human brain, a product of millions of years of evolution, has developed its sensory systems and memory structures in response to specific environmental pressures. If seven dimensions are indeed optimal for memory capacity, does this imply an evolutionary constraint or a potential for further human cognitive development?

As Professor Brilliantov mused, "It could be that humans of the future would evolve a sense of radiation or magnetic field." While highly speculative, the idea challenges us to consider the flexibility of biological evolution. If environmental pressures favored the development of new sensory modalities that could enhance survival and cognitive processing—such as detecting subtle changes in electromagnetic fields for navigation or predicting natural disasters—our brains might indeed adapt to integrate these new "features" into our conceptual space, potentially leading to a richer, seven-dimensional (or more) perception of reality. This perspective enriches the ongoing debate about the nature of human consciousness and the potential for cognitive augmentation.

Beyond speculative evolution, a deeper understanding of optimal memory structures could have important implications for research into human memory disorders. Conditions such as Alzheimer’s disease, other forms of dementia, and amnesia are characterized by impaired memory formation, retention, and retrieval. If these conditions involve a degradation of the conceptual space or an inefficient encoding of sensory features, a mathematical model that identifies an optimal structure could provide useful insights. It might help researchers develop new diagnostic tools or therapies aimed at restoring or optimizing the dimensionality of memory encoding, improving the quality of life for the millions affected by these debilitating diseases. Such theoretical advances also bear on the enigmatic link between memory and consciousness, the essence of our subjective experience, since understanding how memory is structured and maintained is central to unraveling the fundamental properties of the human mind.

Expert Reactions and Future Outlook

Independent expert reactions to this Skoltech publication are not reported here, but a finding of this kind would likely spark considerable discussion across several scientific disciplines. The perspectives below are hypothetical illustrations of such responses.

A neuroscientist might commend the theoretical elegance of the model, noting that it provides a quantitative framework for long-standing qualitative observations about memory. "This model offers a fresh perspective on how our brains might organize information," an unnamed theoretical neuroscientist might comment. "It provides a testable hypothesis for how sensory integration impacts memory encoding, potentially inspiring new experimental paradigms to explore neural circuitry beyond the traditional five senses."

An AI ethics expert could raise questions about the implications of creating AI with optimized, human-like memory structures. "As AI systems become more capable of forming and retaining complex ‘memories,’ the ethical considerations become paramount," a hypothetical AI ethicist might state. "Understanding the optimal architecture for such memory is crucial, not only for performance but also for ensuring these systems align with human values and do not develop unforeseen cognitive biases."

A roboticist, on the other hand, would likely focus on the immediate practical applications. "The idea of optimizing sensory input dimensionality for memory capacity is a game-changer for autonomous systems," a leading robotics engineer might suggest. "It provides a clear theoretical target for designing more efficient data fusion algorithms and cognitive architectures for robots, potentially leading to significant breakthroughs in fields like autonomous navigation, advanced manufacturing, and even human-robot interaction."

The Skoltech research is a notable theoretical advance. Future work will likely involve testing these mathematical predictions empirically, both in biological systems and through novel AI and robotic prototypes. The team might explore how different types of learning (e.g., rote learning versus experiential learning) influence the evolution of engrams within the seven-dimensional space. Applying the model to existing neural network architectures could also shed light on their current limitations and guide the design of next-generation AI.

In conclusion, the Skoltech mathematical model presents memory as an optimized system whose capacity peaks when its conceptual space is defined by seven features. This provocative finding deepens our understanding of the fundamental mechanisms of biological memory and offers a theoretical blueprint for engineering more sophisticated, intelligent, and human-like artificial agents. As scientists continue to unravel the mysteries of the mind, this research from Skoltech shows the value of interdisciplinary inquiry, and it may reshape both our technology and how we think about what it means to sense and remember.