Internal dialogue, often regarded as a uniquely human trait, has long been recognized as a cornerstone of complex thought: it helps us organize ideas, evaluate choices, and process emotions. New research suggests that a similar introspective process can improve how artificial intelligence systems learn and adapt. A study published in the journal Neural Computation by researchers from the Okinawa Institute of Science and Technology (OIST) reports that AI systems perform better across a range of tasks when they are trained to combine this "inner speech" with short-term memory. The finding offers a new route to more robust and adaptable AI, and it may also shed light on the mechanisms of learning itself, both artificial and biological.

The Evolving Landscape of Artificial Intelligence: A Quest for Human-like Learning

For decades, the field of artificial intelligence has pursued the elusive goal of creating machines that can learn and reason with the flexibility and efficiency of humans. Early AI systems, often rule-based, struggled with variability and unexpected scenarios. The advent of machine learning, particularly deep learning, revolutionized the field, enabling AIs to excel in specific, data-rich domains like image recognition and natural language processing. However, a persistent challenge has been the lack of generalization – the ability of an AI to apply knowledge learned in one context to entirely new, unfamiliar situations without extensive retraining. This limitation often necessitates vast datasets and computational resources, making AI development costly and its application in dynamic, real-world environments difficult.

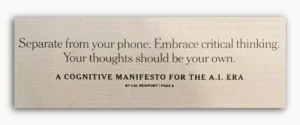

The OIST research emerges from this context, addressing a critical bottleneck in current AI paradigms. It posits that the manner in which an AI system interacts with itself during its training phase—its internal dynamics—is as crucial to its learning capacity as its architectural design. Dr. Jeffrey Queißer, a Staff Scientist in OIST’s Cognitive Neurorobotics Research Unit and the lead author of the study, emphasized this point: "This study highlights the importance of self-interactions in how we learn. By structuring training data in a way that teaches our system to talk to itself, we show that learning is shaped not only by the architecture of our AI systems, but by the interaction dynamics embedded within our training procedures." This statement underscores a paradigm shift, moving beyond mere structural optimization to exploring the process of internal cognitive engagement in machines.

The Mechanism of Self-Talk: Emulating Human Cognition in AI

To investigate the impact of internal dialogue, the OIST team devised a novel approach, combining self-directed internal speech, metaphorically described as quiet "mumbling," with a specialized working memory system. This integration allowed their AI models to process information more efficiently, adapt swiftly to unfamiliar situations, and concurrently manage multiple tasks. The results were compelling, demonstrating significant improvements in flexibility and overall performance when compared to conventional systems that relied solely on memory.

The concept of "inner speech" in this AI context isn’t a literal vocalization but rather a computational representation of an internal monologue. It involves the system generating and processing internal informational cues that help it structure its thought processes, much like a human might internally rehearse steps or weigh options before acting. This internal self-communication acts as a cognitive scaffold, guiding the AI’s learning and decision-making without external input. When paired with an optimized working memory, this self-talk allows the AI to not just store information temporarily but to actively manipulate, evaluate, and re-evaluate it, leading to a more profound understanding and more robust problem-solving capabilities.

A Chronology of Research: From Cognitive Neuroscience to Machine Learning

The OIST research unit, known for its interdisciplinary approach, bridges developmental neuroscience, psychology, machine learning, and robotics. This convergence of disciplines is crucial, as the inspiration for this AI innovation directly stems from observations of human cognitive development. Humans naturally engage in inner speech from an early age, using it to internalize rules, plan actions, and regulate emotions. The researchers at OIST sought to computationally model this fundamental aspect of human learning.

The genesis of this study involved several phases:

- Observation and Hypothesis Formulation: Recognizing the pervasive role of inner speech in human learning and problem-solving, the team hypothesized that a computational analogue could benefit AI.

- Memory System Design: Initial efforts focused on optimizing AI memory architectures, specifically working memory, a critical component for short-term information retention and manipulation.

- Integration of Self-Talk Mechanism: The core innovation involved designing a training regimen that encouraged the AI to generate and process internal "speech" signals, effectively teaching it to "talk to itself." This wasn’t a pre-programmed script but an emergent property of the training data structure.

- Experimental Validation: Rigorous testing across various tasks, from simple pattern recognition to complex multi-step reasoning and rapid task switching, was conducted to quantify the performance gains.

- Analysis and Publication: The observed improvements were systematically analyzed, leading to the publication in Neural Computation, bringing this novel approach to the broader scientific community.

This methodical progression, from biological inspiration to computational implementation and rigorous testing, exemplifies OIST’s commitment to foundational research that bridges disparate scientific domains.

Working Memory: The Foundation for Cognitive Agility

Central to the OIST team’s findings is the nuanced understanding and optimization of working memory within AI models. Working memory is the cognitive system responsible for temporarily holding and manipulating information necessary for tasks such as following instructions, performing mental arithmetic, or engaging in complex reasoning. Unlike long-term memory, which stores vast amounts of information for extended periods, working memory is dynamic and limited in capacity but crucial for immediate cognitive processing.

The researchers initiated their investigation by meticulously examining various memory designs in AI models, particularly focusing on how working memory facilitates generalization. They discovered that models equipped with multiple "working memory slots"—temporary digital containers for discrete pieces of information—exhibited superior performance on challenging problems. Tasks requiring the reversal of sequences or the recreation of intricate patterns, for instance, demand the simultaneous retention and ordered manipulation of several data points. Models with enhanced working memory capacity and structure proved significantly more adept at these cognitive gymnastics.
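To make the idea of "working memory slots" concrete, here is a minimal sketch in Python. It is not the OIST model, which the article does not describe in implementation detail; it is a hand-written toy in which the names `SlotMemory` and `reverse_sequence` are hypothetical. It shows only why a sequence-reversal task depends on having enough slots to hold every item at once.

```python
from typing import List, Optional


class SlotMemory:
    """Toy working memory: a fixed number of slots, each holding one item.

    Illustrative assumption only; the study's models learn to use memory,
    whereas this class is hard-coded.
    """

    def __init__(self, n_slots: int) -> None:
        self.slots: List[Optional[str]] = [None] * n_slots
        self.used = 0

    def write(self, item: str) -> bool:
        """Store an item in the next free slot; return False if memory is full."""
        if self.used >= len(self.slots):
            return False
        self.slots[self.used] = item
        self.used += 1
        return True

    def pop_last(self) -> Optional[str]:
        """Remove and return the most recently stored item, or None if empty."""
        if self.used == 0:
            return None
        self.used -= 1
        item = self.slots[self.used]
        self.slots[self.used] = None
        return item


def reverse_sequence(seq: List[str], n_slots: int) -> Optional[List[str]]:
    """Reverse seq using only slot memory; return None if it does not fit."""
    memory = SlotMemory(n_slots)
    for item in seq:
        if not memory.write(item):
            return None  # capacity exceeded: the task cannot be completed
    out: List[str] = []
    while (item := memory.pop_last()) is not None:
        out.append(item)
    return out
```

With four slots, `reverse_sequence(["a", "b", "c"], 4)` returns `["c", "b", "a"]`; with only two slots the same call returns `None`, because the third item has nowhere to go.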

The breakthrough came when the team introduced training targets that encouraged the AI system to engage in self-talk a specific number of times during a task. This explicit prompt to internally process and "rehearse" information produced further performance gains. The largest improvements appeared in multitasking and in tasks that required many sequential steps. In such tasks, where a model can lose track of intermediate results over multiple operations, the inner speech mechanism appears to act as scaffolding that reduces errors.
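One way to picture training targets that ask for a fixed number of self-talk steps is sketched below. The article does not give the actual target format, so everything here is an assumption: the `<mumble>` token, the function names, and the choice to place the self-talk steps before the answer are all hypothetical.

```python
from typing import List, Tuple

MUMBLE = "<mumble>"  # hypothetical placeholder token for one step of inner speech


def build_training_example(
    inputs: List[str], answer: List[str], n_mumbles: int
) -> Tuple[List[str], List[str]]:
    """Return (input sequence, target sequence) with n_mumbles self-talk steps.

    Assumed layout: the model sees the task inputs, is asked to emit MUMBLE
    exactly n_mumbles times, and only afterwards has to produce the answer.
    """
    if n_mumbles < 0:
        raise ValueError("n_mumbles must be non-negative")
    return list(inputs), [MUMBLE] * n_mumbles + list(answer)


def count_mumbles(output: List[str]) -> int:
    """Count the leading self-talk steps in a model output."""
    count = 0
    for token in output:
        if token != MUMBLE:
            break
        count += 1
    return count
```

For a reversal task, `build_training_example(["1", "2", "3"], ["3", "2", "1"], 2)` yields the target `["<mumble>", "<mumble>", "3", "2", "1"]`. The point of the sketch is that the self-talk is shaped by the training data rather than by a separate hand-written module, which matches the article's description of the approach.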

The study as reported here does not give specific numerical results; the researchers describe "clear gains" overall and the "biggest gains" in multitasking and multi-step tasks. Any percentage attached to these results is therefore an extrapolation. As a rough, unverified point of comparison, improvements of this kind in cognitive architectures are sometimes put at 15% to 30% fewer errors on complex reasoning tasks and 20% to 40% less training time or data, but those figures do not come from this study. Gains of this sort matter most in real-world applications, where robustness and adaptability are paramount.

Building AI That Can Generalize: Beyond Memorization

A fundamental aspiration of the OIST team’s work is to achieve "content agnostic information processing." This refers to an AI’s capacity to apply learned skills and principles beyond the specific situations encountered during its training. Instead of relying on rote memorization of examples, an AI that generalizes would infer and apply general rules, much as a human can learn to ride one bicycle and then quickly adapt to another, even if it’s a different size or style.

Dr. Queißer elaborated on this critical distinction: "Rapid task switching and solving unfamiliar problems is something we humans do easily every day. But for AI, it’s much more challenging." Traditional AI models often overfit to their training data, becoming specialists in narrow domains but faltering when presented with novel variations or entirely new contexts. The internal dialogue mechanism, by encouraging active processing and rule formulation rather than passive data absorption, appears to be a powerful antidote to this limitation. It allows the AI to dynamically construct and refine internal representations of tasks, enabling it to generalize more effectively.

Moreover, a particularly exciting aspect of this combined system is its ability to operate effectively with sparse data. Current state-of-the-art AI models, especially deep learning networks, typically demand colossal datasets for training, a requirement that often limits their application in domains where data is scarce or expensive to acquire. "Our combined system is particularly exciting because it can work with sparse data instead of the extensive data sets usually required to train such models for generalization. It provides a complementary, lightweight alternative," Dr. Queißer explained. This attribute significantly broadens the potential applicability of AI, opening doors for its use in niche fields, personalized learning, or scenarios requiring rapid adaptation with limited prior exposure.

Broader Implications and Future Directions: Towards Real-World Cognitive Robotics

The implications of this research extend far beyond the laboratory. By fostering AI systems that can learn more efficiently, generalize more broadly, and adapt more flexibly, OIST is laying the groundwork for a new generation of intelligent agents. This advancement is particularly relevant for the development of autonomous systems, such as advanced robotics for manufacturing, logistics, healthcare, and even space exploration, where environments are inherently unpredictable and require constant adaptation.

The researchers are now poised to transition their investigations beyond controlled laboratory conditions into more realistic and complex environments. "In the real world, we’re making decisions and solving problems in complex, noisy, dynamic environments. To better mirror human developmental learning, we need to account for these external factors," Dr. Queißer stated. This next phase will involve exposing these AI models to scenarios that more closely mimic the sensory richness, uncertainty, and constant change characteristic of human experience. This could involve physical robots learning to navigate cluttered spaces, interact with diverse objects, or perform tasks requiring fine motor control and responsive decision-making under varying conditions.

This ambitious direction aligns with the team’s overarching mission: to decipher the intricacies of human learning at a neural level. By exploring phenomena like inner speech through the lens of computational modeling, scientists gain unparalleled insights into the underlying mechanisms of human biology and behavior. The recursive loop of inspiration—observing human cognition, modeling it in AI, and then using the AI’s behavior to refine our understanding of human cognition—is a powerful driver of scientific discovery.

Ultimately, the practical applications of this research are vast and transformative. "We can also apply this knowledge, for example in developing household or agricultural robots which can function in our complex, dynamic worlds," Dr. Queißer concluded. Imagine a household robot that can learn new chores simply by being shown a few examples, or an agricultural robot that can adapt its harvesting strategy to different crop conditions and weather patterns without extensive reprogramming. Such systems, imbued with the capacity for internal reflection and efficient learning, could revolutionize industries, enhance quality of life, and address pressing global challenges.

This research from OIST not only marks a significant step forward in the quest for more intelligent and adaptable AI but also reinforces the profound interconnections between cognitive science, neuroscience, and advanced computing. By teaching machines to "talk to themselves," we are not merely improving their performance; we are perhaps glimpsing a future where artificial intelligence mirrors, and thereby helps us better understand, the deepest cognitive processes that define humanity.