A critical shift is underway in academic research. The advent of generative artificial intelligence tools like ChatGPT has precipitated a surge in manuscript submissions that, paradoxically, coincides with a decline in readability and overall quality, placing unprecedented strain on the peer review system. This trend has been starkly illuminated by an AI Task Force convened by the journal Organization Science, whose recent findings serve as a cautionary tale about the uncritical adoption of AI in scholarly work. The Task Force’s analysis indicates that while AI promises enhanced efficiency, its current application in academic writing is producing a deluge of "weightless" prose that is harder to parse, less impactful, and overwhelmingly rejected by editorial boards.

The Alarming Surge in Submissions and AI Integration

The members of the Organization Science AI Task Force articulate a sentiment widely felt across editorial desks globally: "Something is up in academic research." Editors and reviewers are encountering a discernible change in the nature of incoming manuscripts. While these manuscripts superficially maintain the structure and appearance of traditional academic papers, the writing shows a subtle but pervasive lack of depth and clarity. As the Task Force elaborates, "the writing feels weightless in a way that rarely describes academic writing…you find yourself scratching your head at the meaning the words are trying to convey."

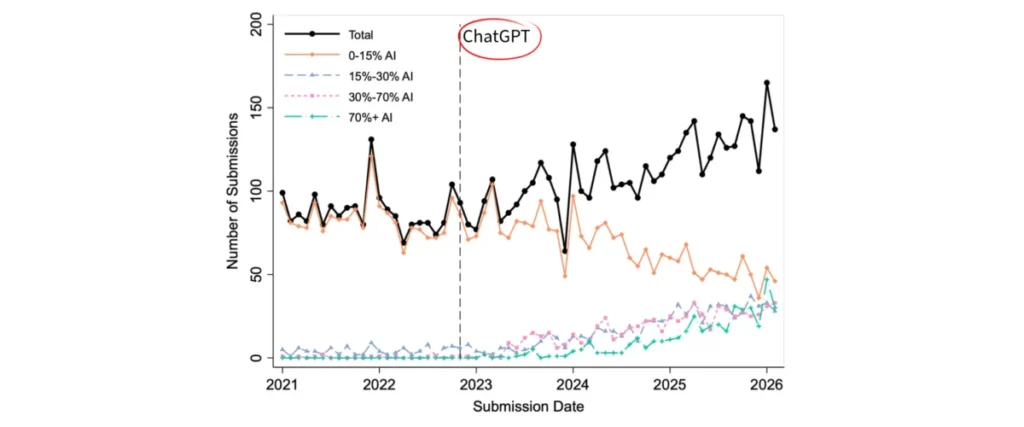

This qualitative observation is backed by the journal’s submission data. The Task Force analyzed submission numbers and identified a clear inflection point: from 2023 onward, after ChatGPT became widely available, Organization Science experienced a rapid and significant increase in manuscript volume. The surge coincided with a dramatic shift in authors’ reliance on AI. The percentage of submissions classified as using "minimal AI" fell from nearly 100% before 2023 to approximately 30% after the tool’s public release. This suggests a rapid and widespread integration of generative AI into the academic writing process, often without explicit disclosure or, perhaps, full comprehension of its implications.

Declining Readability and Linguistic Characteristics of AI-Generated Text

The impact of this shift on the fundamental act of reading and comprehension has been profound. Utilizing a standard "reading ease" metric, the Task Force observed a striking decline of 1.28 standard deviations between January 2021 and January 2026. This quantitative measure translates directly into a tangible increase in the cognitive effort required to process these texts. "Submissions have become far harder to read," the Task Force reports, a conclusion that defies common assumptions about AI’s capabilities. Many might anticipate that AI, with its capacity for grammatical precision and linguistic coherence, would produce cleaner, more polished text. And, in certain narrow dimensions—such as basic grammar and syntax—it can. However, on measures that truly capture whether a reader can effectively parse, absorb, and critically engage with the prose, AI-generated writing appears to be significantly worse.
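To make the "reading ease" figure more concrete, here is a minimal sketch of how such a score and a shift measured in standard deviations might be computed. The article does not name the metric the Task Force used, so the widely used Flesch Reading Ease formula is an assumption here, as are the rough syllable heuristic, the helper names, and the choice to scale the shift by the baseline period's standard deviation.

```python
import re
from statistics import mean, stdev


def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups, discounting a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: higher scores mean easier text.

    Assumption: the Task Force's metric is not named in the article;
    this formula is used only as a familiar stand-in.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


def shift_in_sd(baseline_scores, later_scores) -> float:
    """Change in mean score, expressed in baseline standard deviations.

    Assumption: how the Task Force standardized its figure is not stated.
    """
    return (mean(later_scores) - mean(baseline_scores)) / stdev(baseline_scores)


# Hypothetical sample sentences, not drawn from any submission.
plain = "We ran the test. The results were clear. Teams that met often did well."
dense = (
    "The operationalization of organizational communication frequency "
    "necessitates consideration of multidimensional performance implications."
)

print(flesch_reading_ease(plain) > flesch_reading_ease(dense))
```

A negative value from `shift_in_sd` indicates that the later texts score lower on reading ease, that is, they are harder to read than the baseline.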

Linguistic analysis conducted by the Task Force pinpointed several characteristics contributing to this diminished readability. AI-assisted manuscripts tend to feature "longer words, more complex sentence structures, more jargon, and more nominalizations." While individually these elements are not inherently problematic in academic writing, their pervasive and often indiscriminate use by AI tools creates a dense, convoluted style that hinders rather than facilitates understanding. The AI, optimized for generating grammatically correct and formally structured text, often overlooks the human element of effective communication: clarity, conciseness, and the nuanced conveyance of complex ideas. It prioritizes lexical complexity over semantic accessibility, resulting in text that sounds academic but lacks genuine intellectual weight.
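The surface features named above can be approximated with a few simple counts. The sketch below is illustrative only: the suffix list used to flag nominalizations, the function name, and the sample sentences are assumptions rather than the Task Force's method, and jargon is not measured because it would require a field-specific word list.

```python
import re

# Assumption: a crude suffix heuristic for spotting nominalizations.
NOMINAL_SUFFIXES = ("tion", "sion", "ment", "ness", "ity", "ance", "ence")


def style_profile(text: str) -> dict:
    """Return average word length, average sentence length, and nominalization rate."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = [w.lower() for w in re.findall(r"[A-Za-z]+", text)]
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    nominalizations = [
        w for w in words if len(w) > 5 and w.endswith(NOMINAL_SUFFIXES)
    ]
    return {
        "avg_word_length": sum(len(w) for w in words) / len(words),
        "avg_sentence_length": len(words) / len(sentences),
        "nominalization_rate": len(nominalizations) / len(words),
    }


# Hypothetical sample sentences, not drawn from any submission.
plain_text = "We tested how teams share ideas. Teams that met often did well."
dense_text = (
    "The implementation of communication facilitates "
    "the realization of performance."
)

plain_profile = style_profile(plain_text)
dense_profile = style_profile(dense_text)
print(plain_profile)
print(dense_profile)
```

On these two samples the dense sentence has longer words and a higher share of nominalizations, which is the pattern the Task Force describes in AI-assisted manuscripts.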

The Burden on Editorial Boards and Peer Review System

The most immediate and concerning implication of this trend is the unprecedented burden placed upon journal editorial boards and the volunteer peer review community. The increase in submission volume, coupled with the decline in quality and readability, is taxing an already overstretched system. The Organization Science data vividly illustrates this: manuscripts making heavy use of AI are desk-rejected at an alarming rate of nearly 70%. This contrasts sharply with the 44% desk-rejection rate for papers written with minimal or no AI. Desk rejection, the process by which a paper is rejected before even being sent out for peer review, is a crucial first line of defense against unsuitable submissions. The fact that a significantly higher proportion of AI-heavy papers fail this initial screening underscores their fundamental deficiencies.

The disparity continues through the full review process. Ultimately, only 3.2% of high-AI papers are accepted for publication, a stark contrast to the 12% acceptance rate for low-AI papers. It is crucial to note that editors making these decisions are not told what role AI played in a paper’s construction at the time of their assessment. The figures come from retrospective analyses conducted by the Task Force, so the editorial decisions reflected the perceived merit and quality of the manuscript itself rather than any reaction to disclosed AI usage. This blind assessment strengthens the conclusion that AI-heavy papers, on average, fall short of the rigorous standards of academic scholarship. The cumulative effect is a system clogged with a higher volume of lower-quality submissions, forcing editors and reviewers to spend valuable time sifting through material that is unlikely to contribute meaningfully to the field. This diverts resources and attention away from genuinely innovative and well-crafted research, slowing the pace of scientific discourse.
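The size of these gaps is easier to see as ratios. The short calculation below uses only the rates reported in this article, treating "nearly 70%" as 0.70, which is an approximation; the variable and function names are illustrative.

```python
import math

# Rates as reported in the article ("nearly 70%" is approximated as 0.70).
desk_rejection = {"high_ai": 0.70, "low_ai": 0.44}
acceptance = {"high_ai": 0.032, "low_ai": 0.12}


def rate_ratio(rate_a: float, rate_b: float) -> float:
    """How many times larger rate_a is than rate_b."""
    if rate_b == 0:
        raise ValueError("rate_b must be non-zero")
    return rate_a / rate_b


desk_ratio = rate_ratio(desk_rejection["high_ai"], desk_rejection["low_ai"])
accept_ratio = rate_ratio(acceptance["low_ai"], acceptance["high_ai"])

print(f"Desk rejection, high-AI vs low-AI: {desk_ratio:.2f}x")
print(f"Acceptance, low-AI vs high-AI: {accept_ratio:.2f}x")
```

On these figures, AI-heavy papers are desk-rejected roughly 1.6 times as often as low-AI papers, while low-AI papers are accepted 3.75 times as often as AI-heavy ones.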

Chronology of AI Integration and Academic Response

The trajectory of generative AI in academia can be traced through distinct phases. Prior to late 2022, AI tools in academic writing primarily focused on grammar checking (e.g., Grammarly), citation management, and basic language translation. While useful, these tools were largely supplementary, enhancing existing human-authored content rather than generating it from scratch. The public release of OpenAI’s ChatGPT in November 2022 marked a watershed moment. Its unprecedented ability to generate coherent, contextually relevant, and stylistically varied text unleashed a wave of excitement and, subsequently, experimentation across virtually all domains, including academic research.

Initially, many researchers viewed ChatGPT and similar tools as powerful aids to overcome writer’s block, refine language for non-native English speakers, or even expedite the drafting of literature reviews and methodology sections. The rapid adoption curve was fueled by the "publish or perish" culture pervasive in academia, where pressures to increase publication output are intense. Researchers, often juggling heavy teaching loads, administrative duties, and grant writing, saw AI as a potential shortcut to lighten the demanding cognitive load of academic writing. However, the Organization Science findings, emerging in late 2023 and early 2024, represent some of the earliest empirical evidence to quantify the negative consequences of this rapid and largely unregulated integration. This period marks a shift from initial enthusiasm to a more critical assessment, prompting journals and academic institutions to develop policies and guidelines for responsible AI use.

Background Context: Pressures in Academic Publishing

To fully appreciate the allure and subsequent challenges posed by generative AI, it is essential to understand the broader context of academic publishing. The modern academic landscape is characterized by intense competition for funding, prestige, and career advancement, all heavily tied to publication records. The "publish or perish" mantra is a powerful motivator, driving researchers to maximize their output. This environment, coupled with the increasing complexity of research itself, often leaves academics with limited time for the meticulous craft of writing.

Against this backdrop, AI tools initially presented themselves as a panacea. For non-native English speakers, AI offered the promise of overcoming language barriers. For all researchers, it offered a way to accelerate the often laborious drafting process, potentially freeing up time for deeper analytical work or data collection. The appeal of generating a draft in minutes, rather than days or weeks, was undeniable. However, as the Organization Science data demonstrates, this perceived efficiency has come at a significant cost to quality and, ironically, to the overall efficiency of the scholarly communication ecosystem. The very tools meant to ease individual researchers’ lives are now collectively burdening the community tasked with upholding academic standards.

Statements and Reactions from the Academic Community

The findings from Organization Science have resonated deeply within the academic community, prompting a mixture of concern and a renewed call for thoughtful engagement with emerging technologies. Dr. Elena Petrova, co-chair of the Organization Science AI Task Force, stated in a recent symposium, "Our data clearly shows that making writing faster or easier is not synonymous with making it better. The integrity of scholarly communication relies on the nuanced, critical thought process inherent in human authorship, which current AI models struggle to replicate."

Similar observations are echoed across the editorial landscape. Professor Marcus Thorne, a senior editor at a leading journal in sociology, remarked, "While we haven’t yet conducted a formal quantitative analysis like Organization Science, anecdotally, my editorial team has noted a distinct increase in submissions that feel ‘generic’ or ‘boilerplate.’ The arguments often lack originality, and the prose, while grammatically correct, often lacks the unique voice and intellectual depth we associate with high-quality scholarship."

Even some researchers who initially embraced AI tools acknowledge the double-edged sword. Dr. Anya Sharma, a junior faculty member, commented, "AI was incredibly helpful for overcoming initial writer’s block and structuring my thoughts. However, I quickly learned that relying too heavily on it led to a sterile output. The real work (the critical analysis, the original insights, the crafting of a compelling narrative) still required my full intellectual engagement." From the reviewer’s side, Dr. David Chen, a seasoned peer reviewer, lamented, "The volume of submissions has increased dramatically, and the proportion of papers requiring substantial editing for clarity or even being outright incomprehensible has skyrocketed. It’s making the voluntary act of peer review increasingly unsustainable." These sentiments underscore the growing awareness that while AI offers powerful capabilities, its application in academic writing requires careful consideration and robust human oversight.

Broader Implications for Research Integrity and Scholarly Communication

The implications of this trend extend far beyond increased workload for editors. At stake is the very integrity of the research enterprise and the quality of scholarly communication. If the "signal-to-noise" ratio in academic publishing continues to degrade, identifying truly novel and impactful research will become increasingly difficult. This can slow scientific progress, as valuable discoveries might be obscured by a deluge of mediocre, AI-generated content.

Moreover, the ethical dimensions are significant. While some journals are updating their policies to require disclosure of AI use, the ease with which AI-generated text can be presented as human-authored raises questions of academic honesty and authorship. The subtle nature of AI’s influence, often indistinguishable from human writing without specific tools, creates a challenging environment for maintaining transparency. There’s also a concern for the professional development of emerging scholars. Over-reliance on AI for writing tasks may stunt the development of critical thinking, analytical reasoning, and sophisticated communication skills that are fundamental to a successful academic career. If researchers are not challenged to articulate complex ideas in their own words, their capacity for original thought and nuanced expression may diminish.

Potential Solutions and the Path Forward

Addressing this multifaceted challenge requires a concerted effort from all stakeholders in the academic ecosystem. Journals and publishers are exploring and implementing updated policies on AI usage, often requiring explicit disclosure from authors. However, the efficacy of such policies hinges on robust detection mechanisms, which themselves are evolving rapidly.

Beyond detection, there is a pressing need for education and responsible AI integration. Universities and research institutions must provide clear guidelines and training for researchers on how to use AI tools ethically and effectively as assistants rather than substitutes for human intellect. This involves emphasizing the importance of critical review, fact-checking, and ensuring that the final output truly reflects the author’s original thought and voice.

Ultimately, this situation prompts a deeper re-evaluation of the "publish or perish" culture that often drives the pursuit of quantity over quality. Encouraging a focus on fewer, more impactful publications, combined with a greater emphasis on rigorous qualitative assessment during the review process, could help mitigate the negative effects of AI-driven submission surges. The goal should be to leverage AI’s strengths—such as streamlining tedious tasks—while safeguarding the irreplaceable human elements of creativity, critical analysis, and profound communication that define meaningful scholarship.

The findings from Organization Science serve as a powerful reminder that technological advancement, while offering immense potential, is not without its pitfalls. The pursuit of "faster" or "easier" methods in academic writing, without a corresponding commitment to "better," risks undermining the very foundations of scholarly integrity. As the Task Force eloquently concludes, sometimes, there truly is no shortcut to taking your time, especially when the goal is to produce research that is not only published but also genuinely understood, absorbed, and impactful. The academic community must now collectively navigate this complex landscape, ensuring that AI serves as an augmentative force, enhancing human capabilities, rather than a corrosive one, diminishing the quality and integrity of knowledge creation.