As artificial intelligence spreads across sectors, it has shifted from a niche domain for specialists to a general-purpose tool. This democratization of AI, driven in large part by self-service analytics, presents both immense opportunities and significant challenges for institutions, especially in higher education. The imperative now is to move beyond experimentation with AI to strategic, real-world execution, a transition that depends on establishing centralized, rigorously governed data sources. Cody Irwin, Domo's AI adoption director, underscores this need and offers strategies enterprise teams can use to build a data foundation capable of supporting sustainable AI integration.

The journey towards effective AI adoption begins with confronting long-standing data management issues, which generative AI now amplifies. For years, organizations have grappled with the "perils of siloed data," a fragmentation that obstructs holistic visibility and informed decision-making. The rise of AI, however, turns this chronic challenge into an immediate crisis. Irwin articulates this shift, stating that "data access and governance hold some of the biggest hurdles to unlocking the promised efficiencies behind generative AI." Building data warehouses or lakes for analytics and decision-making has long been considered good practice; it is now an imperative. The barrier to interacting with complex data has fundamentally changed: users no longer need proficiency in SQL, data science, or visualization techniques. Instead, the entry point is simply "Do you know words?" This change requires data leaders within enterprises to prioritize the creation of "centralized sources of governed truth" to truly empower their organizations.

The Evolution of Data Management: From Silos to AI-Ready Architectures

The history of data management has been a continuous battle against fragmentation. In the early days, departmental databases operated in isolation, leading to inconsistent reporting and redundant effort. Enterprise resource planning (ERP) systems, which spread widely in the 1990s, sought to integrate operational data but often created new silos within these monolithic platforms. Through the 1990s and early 2000s, organizations pushed to build data warehouses that consolidated and structured data for business intelligence (BI) reporting. Data lakes followed in the 2010s, offering more flexibility for storing vast amounts of raw and unstructured data and accommodating the burgeoning field of big data analytics. Yet even with these advancements, many organizations struggled to fully break down data barriers, often because of technological complexity, organizational inertia, or a lack of clear governance frameworks.

Today, generative AI represents another paradigm shift, demanding an even higher level of data integration, quality, and contextual understanding. Unlike traditional analytics, which often relied on structured queries against predefined data models, generative AI thrives on understanding nuances, relationships, and context across diverse datasets. If the underlying data is fragmented, inconsistent, or poorly governed, the AI’s output will inevitably reflect these deficiencies, leading to inaccurate insights, biased decisions, and a significant erosion of trust. Industry reports consistently highlight data quality and governance as top concerns for AI implementers. A 2023 survey by McKinsey & Company, for instance, found that data quality was cited by over 60% of organizations as a significant barrier to scaling AI initiatives, reinforcing Irwin’s assertion about the immediate imperative.

Addressing the Trust Deficit in Higher Education

The challenge of data governance and trust is particularly pronounced within the higher education sector. Institutions like universities and colleges operate on a vast and intricate web of data, managing everything from student admissions, financial aid disbursements, and academic records to complex research data, publication metrics, accreditation compliance, fundraising campaigns, and daily operational logistics. The accuracy and integrity of this data are paramount, as any "misstatement of data is often public and can have serious ramifications on institutional credibility," as Irwin notes. A misreported graduation rate, an error in financial aid distribution, or an inaccuracy in research findings can not only lead to financial penalties but also severely damage an institution’s reputation, affecting student enrollment, donor confidence, and faculty recruitment.

This inherent sensitivity, coupled with the increasing move towards self-service analytics and AI tools, exacerbates what Irwin describes as a "trust deficit." While self-service empowers faculty, administrators, and even students to access and analyze data directly, it simultaneously places a greater burden of responsibility on data leaders. They must ensure that the data being accessed is not only accurate but also certified, properly contextualized, and governed according to institutional policies and ethical guidelines. The stakes are incredibly high; imagine an AI-powered chatbot providing incorrect information about degree requirements, or a generative AI tool producing biased insights about student performance due to flawed underlying data. The academic community, known for its rigorous pursuit of truth, demands the highest standards of data integrity. Overcoming this trust deficit requires transparent data lineage, robust audit trails, and clear certification processes for all data exposed for self-service and AI consumption.

Designing for AI: The Medallion Architecture and Semantic Layer

To meet the stringent demands of AI and ensure reliable data, institutions must adopt advanced data modeling strategies. Irwin highlights the value of data modeling that explicitly considers AI and flexibility. Specifically, he recommends adopting a "medallion architecture." This architectural pattern, common in modern data warehousing and lakehouse environments, categorizes data into distinct layers, typically "Bronze," "Silver," and "Gold."

- Bronze Layer: This is the raw, untransformed data ingested directly from source systems. It is kept as an immutable record, often chaotic and inconsistent, and serves as the foundation from which all subsequent transformations are derived.

- Silver Layer: Data in this layer undergoes initial cleansing, standardization, and enrichment. Basic transformations are applied, and data quality issues are addressed. It’s a structured and consistent view of the raw data.

- Gold Layer: This is the highly refined, aggregated, and modeled data specifically designed for consumption by business users, analytics applications, and, crucially, AI models. These "gold datasets" are optimized for performance, usability, and interpretability. They represent the "governed truth" that decision-makers and AI can confidently leverage.

The concept of "gold datasets" is vital because they offer a curated, trustworthy, and performant view of institutional data, shielded from the complexities and inconsistencies of raw source systems. This ensures that AI models are trained and operate on high-quality, relevant data, reducing the likelihood of errors or misleading outputs.
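To make the layering concrete, here is a minimal Python sketch of how records might move from Bronze to Silver to Gold. It is illustrative only. The field names (`student_id`, `course_code`, `dept`, `term`), the cleansing rules, and the functions `to_silver` and `to_gold` are hypothetical. In-memory Python structures stand in for the tables a real warehouse or lakehouse would use.

```python
from collections import Counter
from dataclasses import dataclass

# Bronze: raw rows exactly as ingested from a hypothetical student information system.
# Inconsistent casing and whitespace, one duplicate, and one row missing its student ID.
BRONZE = (
    {"student_id": " 1001", "course_code": "cs101", "dept": "Computer Science", "term": "2024FA"},
    {"student_id": "1001", "course_code": "CS101 ", "dept": "computer science", "term": "2024FA"},
    {"student_id": "1002", "course_code": "MATH201", "dept": "Mathematics", "term": "2024FA"},
    {"student_id": "", "course_code": "CS101", "dept": "Computer Science", "term": "2024FA"},
    {"student_id": "1003", "course_code": "cs101", "dept": "COMPUTER SCIENCE", "term": "2024FA"},
)


@dataclass(frozen=True)
class Enrollment:
    """A cleansed, typed Silver-layer record."""
    student_id: int
    course_code: str
    dept: str
    term: str


def to_silver(bronze):
    """Standardize values, reject rows failing a basic quality rule, and drop duplicates."""
    seen = set()
    silver = []
    for row in bronze:
        sid = row["student_id"].strip()
        if not sid.isdigit():
            continue  # quality rule: a student ID must be present and numeric
        record = Enrollment(
            student_id=int(sid),
            course_code=row["course_code"].strip().upper(),
            dept=row["dept"].strip().title(),
            term=row["term"].strip().upper(),
        )
        if record not in seen:  # deduplicate after normalization
            seen.add(record)
            silver.append(record)
    return silver


def to_gold(silver):
    """Aggregate Silver records into a Gold dataset: distinct students per (dept, term)."""
    distinct = {(r.dept, r.term, r.student_id) for r in silver}
    return dict(Counter((dept, term) for dept, term, _ in distinct))
```

On this sample, `to_gold(to_silver(BRONZE))` reports two distinct Computer Science students and one Mathematics student for term 2024FA. The Bronze rows are never modified. That means the Silver and Gold layers can be rebuilt if a cleansing rule changes. The small, well-defined Gold output is the kind of dataset an AI assistant could query with confidence.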

Beyond structured data, AI thrives on context. As Irwin points out, merely making data available is insufficient; "the data models should surface semantics that provide organizational context that AI can leverage to provide more meaningful and accurate responses." A semantic layer enriches data with meaning, defining relationships, business rules, and hierarchies that are otherwise opaque to AI. For example, in a university setting, a semantic layer could define that "student ID" is a unique identifier, "course code" relates to a specific "department," and "GPA" is calculated based on "grade points" and "credits earned" for "enrolled courses." Without this semantic understanding, an AI might struggle to correctly interpret queries like "What is the average GPA for computer science majors enrolled in advanced calculus courses this semester?" With a robust semantic layer, the AI can accurately navigate the data, understand the relationships between students, courses, departments, and academic performance, and deliver precise answers. This rich contextualization is what transforms raw data into intelligent information, unlocking the full potential of AI.
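One way to picture a semantic layer is as a single shared definition of each business term and metric. Every consumer, including an AI assistant, reuses that definition instead of re-deriving it. The sketch below assumes GPA is the credit-weighted average of grade points over a term's graded courses. Institutions define GPA differently (for example, using attempted versus earned credits). Treat the formula, the sample data, and all names (`SEMANTIC_MODEL`, `Grade`, `gpa`, `avg_term_gpa`, `ADVANCED_CALCULUS`) as hypothetical.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical semantic definitions: plain-language meaning an AI assistant can be given as context.
SEMANTIC_MODEL = {
    "student_id": "Unique identifier for a student.",
    "course_code": "Identifies a course; each course belongs to one department.",
    "GPA": "Credit-weighted average of grade points over a term's graded courses.",
}

# Hypothetical business grouping and reference data.
ADVANCED_CALCULUS = frozenset({"MATH301", "MATH401"})
MAJORS = {1001: "Computer Science", 1002: "Computer Science", 1003: "Mathematics"}


@dataclass(frozen=True)
class Grade:
    student_id: int
    course_code: str
    term: str
    grade_points: float  # e.g., 4.0 for an A on a 4-point scale
    credits: int


GRADES = [
    Grade(1001, "CS101", "2024FA", 4.0, 3),
    Grade(1001, "MATH301", "2024FA", 3.0, 4),
    Grade(1002, "MATH401", "2024FA", 2.0, 4),
    Grade(1002, "CS101", "2024FA", 4.0, 3),
    Grade(1003, "MATH301", "2024FA", 4.0, 4),
    Grade(1001, "CS101", "2024SP", 2.0, 3),
]


def gpa(grades):
    """The one governed GPA formula: sum(grade_points * credits) / sum(credits)."""
    total_credits = sum(g.credits for g in grades)
    if total_credits == 0:
        return None
    return sum(g.grade_points * g.credits for g in grades) / total_credits


def avg_term_gpa(grades, majors, major, courses, term):
    """Average term GPA of students in `major` who took any course in `courses` during `term`."""
    term_grades = [g for g in grades if g.term == term]
    cohort = {g.student_id for g in term_grades
              if g.course_code in courses and majors.get(g.student_id) == major}
    gpas = [gpa([g for g in term_grades if g.student_id == sid]) for sid in cohort]
    gpas = [x for x in gpas if x is not None]
    return mean(gpas) if gpas else None
```

For this toy data, `avg_term_gpa(GRADES, MAJORS, "Computer Science", ADVANCED_CALCULUS, "2024FA")` answers the example question above. The two Computer Science majors in an advanced calculus course have term GPAs of 24/7 and 20/7, so the average is 22/7 (about 3.14). The cohort rule and the GPA formula each live in one place. An AI translating a natural-language question therefore only has to pick the right terms, not reinvent the arithmetic.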

Effective Collaboration with Data Productivity Platforms

Implementing these sophisticated data architectures and semantic layers requires robust tools and platforms. Data designers play a pivotal role, and their first step, according to Irwin, is to make data "centrally available through a governed interface." This centralization is not about restriction but empowerment. It involves creating a unified data fabric that can retrieve or integrate data from virtually any source environment, whether it’s a legacy student information system, a modern cloud-based CRM, research databases, or administrative applications.

This centralized data fabric then becomes the bedrock for implementing critical governance functions: policy enforcement, security protocols, comprehensive logging, and data certification. Policy enforcement ensures compliance with institutional regulations and external mandates (like FERPA in the US for student data). Security protocols protect sensitive information from unauthorized access. Logging provides an auditable trail of data access and usage, crucial for compliance and troubleshooting. Certification marks datasets as verified and trustworthy, guiding users towards reliable information.
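The four governance functions can be sketched as a small catalog that every read passes through. This is a toy model, not Domo's or any other product's API. Several choices are assumptions made for illustration:

- the `GovernedCatalog` and `Dataset` classes;
- the role names;
- the rule that only certified datasets are readable.

Role-based field masking is also a simplification, not a complete FERPA compliance mechanism.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Dataset:
    name: str
    rows: list
    certified: bool = False
    # Fields treated as sensitive (e.g., student-record data); masked for non-privileged roles.
    sensitive_fields: frozenset = frozenset()


@dataclass
class GovernedCatalog:
    datasets: dict = field(default_factory=dict)
    # Hypothetical policy: only these roles may see sensitive fields.
    privileged_roles: frozenset = frozenset({"registrar"})
    audit_log: list = field(default_factory=list)

    def register(self, dataset):
        self.datasets[dataset.name] = dataset

    def certify(self, name):
        """Certification: mark a dataset as verified and safe for self-service use."""
        self.datasets[name].certified = True

    def read(self, user, role, name):
        dataset = self.datasets.get(name)
        allowed = dataset is not None and dataset.certified
        # Logging: record every attempt, including denied ones, for auditing.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "dataset": name,
            "allowed": allowed,
        })
        # Policy enforcement: uncertified or unknown datasets are not exposed.
        if not allowed:
            raise PermissionError(f"{name!r} is not a certified dataset")
        # Security: privileged roles see full rows; others get sensitive fields removed.
        if role in self.privileged_roles:
            return [dict(row) for row in dataset.rows]
        return [{k: v for k, v in row.items() if k not in dataset.sensitive_fields}
                for row in dataset.rows]
```

In this design the governed path is a single `read` call. That reflects the point that controls only work when using them is easier than working around them.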

The critical balance, Irwin emphasizes, is that this centralization must be empowering, not restricting. "If it's not easy, people tend to find a way around it." This speaks to the human element of data adoption. If accessing governed data is cumbersome, users will turn to workarounds such as shadow IT tools, ad hoc spreadsheet downloads, or unofficial databases of their own, reintroducing the very silos the institution is trying to eliminate. Data productivity platforms must therefore offer intuitive, controlled interfaces that enable self-service analytics and easy AI interactions. Domo, Irwin's company, is one example of such a platform, providing these controlled interfaces atop a centralized data fabric. Such platforms streamline data integration, transformation, governance, and delivery, making it feasible for diverse users to interact with certified data securely and efficiently.

Leadership in a Shifting AI Culture: Prioritizing Progress Over Perfection

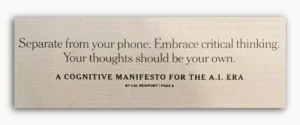

The rapid evolution of AI demands a new kind of leadership from data designers and IT professionals. In this environment of massive data needs and shifting cultural norms around technology, Irwin stresses that a strong "data foundation is critical." The faster designers establish this foundation, the sooner internal stakeholders (faculty, researchers, administrators, and students) will feel empowered to use data and AI effectively.

A key piece of advice from Irwin is to "not let perfection be the enemy of progress." This mantra is particularly relevant in the fast-paced AI landscape. While meticulous planning and robust architecture are essential, getting bogged down in achieving an immaculate, all-encompassing solution before any value is delivered can lead to paralysis. Instead, design leaders should prioritize what they believe will be "most impactful" and move quickly to release those foundational elements. This agile approach allows institutions to demonstrate early wins, build momentum, gather feedback, and iterate on their data foundation.

For instance, a university might start by centralizing and certifying student enrollment data for an AI-powered enrollment prediction model, even while other datasets (like research grants or alumni donations) are still in various stages of integration. The immediate impact of improved enrollment forecasting can validate the effort and secure further investment and buy-in for subsequent phases. This iterative strategy is crucial for adapting to the dynamic nature of AI, where new models, tools, and best practices emerge constantly. Waiting for a perfect, static solution in such a fluid environment is a recipe for being left behind.

Broader Implications and the Future Landscape

The insights provided by Cody Irwin highlight a critical juncture for higher education institutions. The shift to AI is not merely a technological upgrade but a fundamental transformation of how institutions operate, make decisions, and interact with their stakeholders. The emphasis on centralized, governed data is not just about efficiency; it is about maintaining credibility, fostering innovation, and ensuring ethical AI deployment.

The implications extend across the entire institutional ecosystem:

- For Students: Access to AI-powered personalized learning tools, improved administrative services, and more accurate career guidance, all predicated on trustworthy data.

- For Faculty and Researchers: Enhanced capabilities for data analysis, automated literature reviews, and accelerated research processes, requiring access to reliable and context-rich datasets.

- For Administrators: Better-informed strategic planning, optimized resource allocation, and more efficient operational management, supported by real-time, governed insights.

- For Data Professionals: A shift from reactive data management to proactive data stewardship, focusing on architectural design, semantic enrichment, and continuous governance.

The challenge is significant, but the opportunity to redefine the role of data in higher education is even greater. As AI continues its inexorable march into every facet of society, institutions that successfully build these centralized sources of governed truth will not only unlock unprecedented efficiencies but also solidify their position as leaders in an increasingly data-driven world. This requires not just technological investment, but a cultural shift towards data literacy, collaboration, and a collective commitment to data integrity and ethical AI practices. The time for action is now; the imperative is clear.