Educational leaders worldwide see significant opportunities to enhance productivity, reduce administrative burdens, and enrich learning experiences by integrating generative artificial intelligence. However, as institutions move toward large-scale deployment of tools such as Microsoft 365 Copilot and Microsoft 365 Copilot Chat, a persistent tension has emerged: IT teams are expected to accelerate innovation while rigorously protecting data integrity and student privacy. The central question facing academia is no longer whether to adopt AI, but how to establish a framework that allows for responsible, scalable growth. In response, the Zero Trust security model has emerged as a leading architectural framework for protecting educational environments in the age of generative AI.

The transition to AI-integrated campuses requires a departure from traditional perimeter-based security. For decades, institutional IT relied on a "castle-and-moat" strategy that assumed everything inside the network was safe. AI demands a more granular approach because these tools interact with data in fundamentally new ways, surfacing information across disparate systems and content sources at unprecedented speed. To address this, organizations are increasingly turning to Zero Trust workshops and structured assessments to evaluate their current security posture and build a strategic roadmap that aligns technological advancement with rigorous compliance and safety standards.

The Evolution of Cybersecurity in Academic Environments

The shift toward Zero Trust in education is not an isolated event but the culmination of a decade-long evolution in cybersecurity strategy. The term "Zero Trust" was popularized around 2010 by Forrester Research analyst John Kindervag to describe a model in which trust is never assumed and must be verified at every step. In education, this evolution was accelerated by the COVID-19 pandemic, which forced schools into remote and hybrid models and effectively dissolved the physical network perimeter.

In the current landscape, the introduction of generative AI (GenAI) represents the next major milestone in this chronology. Unlike standard software, AI tools act on a user’s behalf, synthesizing data from every source that user’s permissions reach. As a result, existing misconfigurations or overly broad access rights, which might have remained dormant in a traditional folder-based system, can suddenly become major liabilities. According to industry reports from cybersecurity firms such as Check Point Research, education and research has consistently been among the most targeted sectors for cyberattacks, experiencing a significantly higher volume of weekly attacks than other industries. This heightened threat landscape makes the "Assume Breach" mentality of Zero Trust a necessity rather than an option.

Implementing the Three Pillars of Zero Trust for AI

To successfully scale AI, institutions must apply three core principles of Zero Trust: verify explicitly, use least privilege access, and assume breach. These principles provide a framework for IT leaders to extend their existing security investments into the AI era.

Pillar I: Explicit Verification of Identity

The first line of defense is identity. Before any AI tool can be deployed, the institution must have total visibility into who is accessing the system and the conditions under which that access is granted. This involves more than just a username and password; it requires a context-aware analysis of the user’s location, device health, and service or workload requirements.
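To make the idea of a context-aware decision concrete, the sketch below evaluates a single sign-in against several signals at once. It is a simplified illustration, not Microsoft Entra Conditional Access syntax: the `SignInContext` fields, the `evaluate` function, the country allow-list, and the set of "sensitive" apps are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_MFA = "require_mfa"
    BLOCK = "block"


@dataclass(frozen=True)
class SignInContext:
    user_authenticated: bool  # primary credential verified
    mfa_completed: bool       # second factor satisfied in this session
    device_compliant: bool    # device reported healthy by management tooling
    country: str              # country code resolved from the sign-in location
    app: str                  # workload being requested, e.g. "copilot"


# Hypothetical policy values, for illustration only.
ALLOWED_COUNTRIES = {"SG", "US"}
SENSITIVE_APPS = {"copilot", "student_records"}


def evaluate(ctx: SignInContext) -> Decision:
    """Verify explicitly: every signal is checked on every request."""
    if not ctx.user_authenticated:
        return Decision.BLOCK
    if ctx.country not in ALLOWED_COUNTRIES:
        return Decision.BLOCK
    if ctx.app in SENSITIVE_APPS and not ctx.device_compliant:
        return Decision.BLOCK
    if ctx.app in SENSITIVE_APPS and not ctx.mfa_completed:
        return Decision.REQUIRE_MFA
    return Decision.ALLOW


if __name__ == "__main__":
    ctx = SignInContext(True, False, True, "SG", "copilot")
    print(evaluate(ctx))  # Decision.REQUIRE_MFA
```

Every request is evaluated from scratch, so a user who passed MFA yesterday on a healthy laptop is still checked today; that repetition is what "verify explicitly" means in practice.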

At Singapore Management University (SMU), this principle has been put into practice through an integrated architecture using Microsoft Entra ID. By continuously verifying identities and monitoring devices, SMU has been able to expand AI use beyond the cybersecurity department. This foundation allowed the university to streamline administrative processes and develop personalized learning paths for students, ensuring that the AI only interacts with users who have been rigorously authenticated and authorized.

Pillar II: Enforcing Least Privilege Access

One of the most critical risks in AI adoption is "over-permissioning." If a user has access to sensitive HR files or student records they do not need for their daily tasks, a generative AI tool could inadvertently surface that information in response to a query. The principle of least privilege limits each user, and therefore each AI tool acting on that user’s behalf, to the data required for the task at hand.
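The following sketch, assuming a toy corpus in which each document carries a list of groups allowed to read it, shows permission-trimmed retrieval: documents are filtered by the user’s existing access before any relevance matching, so an assistant built on top of `retrieve` could only draw on content the user could already open. The `Document` type, the group names, and the keyword matching are hypothetical simplifications, not how Microsoft 365 Copilot is implemented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # groups granted read access


def readable(doc: Document, user_groups: set[str]) -> bool:
    """A user can read a document if they share at least one group with its ACL."""
    return not doc.allowed_groups.isdisjoint(user_groups)


def retrieve(query: str, corpus: list[Document], user_groups: set[str]) -> list[str]:
    """Return IDs of matching documents, trimmed to what the user can already read.

    Permission filtering happens before relevance matching, so the assistant
    never sees content outside the user's access rights.
    """
    terms = query.lower().split()
    results = []
    for doc in corpus:
        if not readable(doc, user_groups):
            continue  # least privilege: skip anything the user cannot open
        if any(term in doc.text.lower() for term in terms):
            results.append(doc.doc_id)
    return results


CORPUS = [
    Document("syllabus", "Course syllabus and grading policy", frozenset({"faculty", "students"})),
    Document("salaries", "Staff salary bands and grading of roles", frozenset({"hr"})),
]

teacher = {"faculty"}
overprivileged_teacher = {"faculty", "hr"}  # wrongly added to the HR group
print(retrieve("grading", CORPUS, teacher))                 # ['syllabus']
print(retrieve("grading", CORPUS, overprivileged_teacher))  # ['syllabus', 'salaries']
```

The second call returns the salary file only because the teacher was over-permissioned into the `hr` group. The filter faithfully enforces whatever access rights exist, which is why cleaning up excessive permissions has to come before a broad AI rollout.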

For tools like Microsoft 365 Copilot, the AI’s responses are grounded in the content the user is already authorized to see. However, for web-grounded tools like Copilot Chat, the focus shifts toward managing which data sources and agents IT enables. Fulton County Schools in Georgia recently implemented such safeguards to protect student information while reducing the administrative load on educators. By prioritizing a structured environment, the district ensured that educators could focus on student engagement without compromising the privacy of the individuals they serve.

Pillar III: Resilience Through the "Assume Breach" Mentality

The final pillar of the Zero Trust model is the proactive assumption that a breach may occur or has already occurred. In an AI-driven environment, the stakes are higher because a single compromised account could potentially allow an attacker to use AI to scan and summarize vast amounts of sensitive institutional data.

By assuming breach, IT teams focus on minimizing the "blast radius," the extent of the damage an attacker can do after gaining entry. This involves segmenting networks, encrypting data end to end, and using real-time analytics to detect anomalies. Even if a security failure occurs, the institution then has the resilience to respond quickly and limit the exposure of academic and personal records.
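Real-time anomaly detection can take many forms; as one minimal sketch, the code below flags an account whose hourly file-access count sits far above its own historical baseline, using a z-score, and then returns a containment action. The three-standard-deviation threshold, the `respond` step, and the action string are hypothetical; production tools rely on much richer signals.

```python
from statistics import mean, stdev


def is_anomalous(history: list[int], current: int, threshold: float = 3.0) -> bool:
    """Return True if `current` is more than `threshold` standard deviations
    above the mean of `history` (the account's own hourly baseline)."""
    if len(history) < 2:
        return False  # too little baseline data to judge
    mu = mean(history)
    # Treat a perfectly flat baseline as unit spread to avoid division by zero.
    sigma = stdev(history) or 1.0
    return (current - mu) / sigma > threshold


def respond(user: str, history: list[int], current: int) -> str:
    """Hypothetical containment step: cut off the account's sessions while the
    anomaly is investigated, limiting how much data an intruder can reach."""
    if is_anomalous(history, current):
        return f"suspend sessions for {user}"
    return "no action"


baseline = [10, 12, 9, 11, 13, 10]  # files opened per hour over recent hours
print(respond("alice", baseline, 12))   # no action
print(respond("alice", baseline, 400))  # suspend sessions for alice
```

A check like this is relevant to AI because a compromised account driving an assistant can read and summarize far more content per hour than a person clicking through folders, so a sudden spike is a useful early signal.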

Supporting Data and Economic Implications

The push for Zero Trust is supported by significant economic and operational data. According to the 2021 edition of IBM’s "Cost of a Data Breach Report," breaches cost an average of $1.76 million less at organizations with a mature Zero Trust deployment than at organizations without Zero Trust. In the education sector, where budgets are often constrained and public funding is under scrutiny, savings of this scale are critical.

Furthermore, the adoption of Microsoft 365 Education A3 and A5 plans indicates a growing trend toward integrated security suites. These plans allow institutions to extend their existing governance and data protection practices to AI without the need to "rip and replace" their current infrastructure. By leveraging existing investments in identity management and compliance, schools can achieve a faster return on investment for their AI initiatives.

Official Responses and Institutional Perspectives

Responses from the academic community suggest a cautious but optimistic approach to AI. Administrators at leading institutions have noted that the primary hurdle to AI adoption is not the technology itself, but the "trust gap." As one IT Director from a major research university noted, "Our faculty and students are eager to use these tools to accelerate research, but without the assurance that their intellectual property and personal data are shielded, adoption will remain fragmented and risky."

Industry experts emphasize that Zero Trust workshops are becoming a vital tool for bridging this gap. These workshops provide a structured assessment of an institution’s security posture, allowing IT teams to identify vulnerabilities before they are exploited by bad actors or inadvertently exposed by AI systems. The roadmap generated from these sessions helps align the goals of academic departments with the security requirements of the IT department.

Broader Impact and Future Outlook

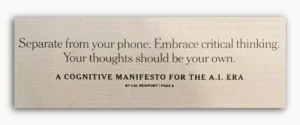

The implications of securing AI through Zero Trust extend beyond the immediate protection of data. It creates a "culture of security" that is essential for the future of digital literacy. As students and faculty interact with AI systems that are governed by strict ethical and security standards, they learn the importance of data privacy and responsible technology use.

Looking ahead, the integration of AI in education will likely move toward more specialized agents—AI tools designed for specific subjects or administrative tasks. The Zero Trust framework is uniquely suited for this modular future, as it allows IT teams to grant specific permissions to specific agents for specific periods.
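One way to model "specific permissions to specific agents for specific periods" is a deny-by-default grant that names the agent, lists its scopes, and carries an expiry time. The sketch below assumes that model; the `AgentGrant` type, the scope strings, and the agent names are hypothetical and do not correspond to any particular product’s permission API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentGrant:
    agent: str              # which agent the grant applies to
    scopes: frozenset[str]  # what the agent may do, e.g. "read:chem-course"
    expires_at: datetime    # after this moment the grant is void


def issue_grant(agent: str, scopes: set[str], hours: int, now: datetime) -> AgentGrant:
    """Create a grant that lapses automatically after `hours`."""
    return AgentGrant(agent, frozenset(scopes), now + timedelta(hours=hours))


def is_permitted(grant: AgentGrant, agent: str, scope: str, now: datetime) -> bool:
    """Deny by default: agent, scope, and time window must all match."""
    return grant.agent == agent and scope in grant.scopes and now < grant.expires_at


start = datetime(2025, 1, 6, 9, 0, tzinfo=timezone.utc)
tutor = issue_grant("chemistry-tutor", {"read:chem-course"}, hours=8, now=start)
print(is_permitted(tutor, "chemistry-tutor", "read:chem-course", start))      # True
print(is_permitted(tutor, "chemistry-tutor", "read:student-records", start))  # False
print(is_permitted(tutor, "chemistry-tutor", "read:chem-course", start + timedelta(hours=9)))  # False
```

Because the expiry is part of the grant itself, nobody has to remember to revoke a temporary agent; once the window closes, every check fails.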

In conclusion, the path to scaling AI in education is paved with the principles of Zero Trust. By moving away from outdated security models and embracing a strategy of continuous verification and limited access, academic institutions can unlock the full potential of generative AI. This approach does not slow down innovation; rather, it provides the necessary guardrails that allow innovation to flourish in a safe, compliant, and sustainable manner. The success of AI in the classroom will ultimately depend on the strength of the security foundation upon which it is built.