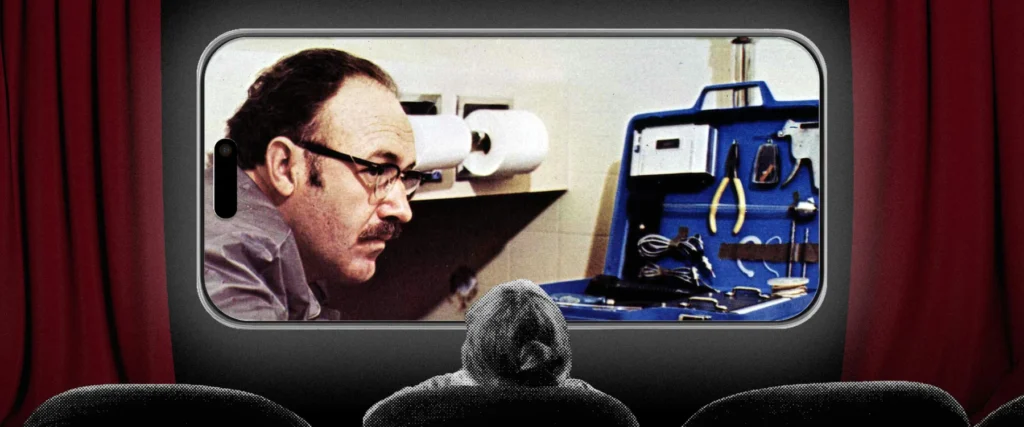

Recently, The Atlantic ignited significant discussion with an article titled "The Film Students Who Can No Longer Sit Through Films," which brought to light a growing concern among educators nationwide. The piece, authored by Rose Horowitch, meticulously documented a disquieting trend: an increasing number of university students, even those specializing in film studies, are struggling to maintain focus through feature-length movies. This phenomenon, once considered an anomaly, is now reportedly widespread, prompting a deeper examination into the underlying causes and broader implications for cognitive functions in the digital age.

The Unraveling of Focus: An Academic Challenge

The observations presented in The Atlantic article are far from isolated anecdotes. Film professors across the United States are voicing their frustration, noting a stark decline in students’ ability to engage with cinematic works for extended periods. Craig Erpelding, a film professor at the University of Wisconsin at Madison, expressed his bewilderment, stating, "I used to think, if homework is watching a movie, that is the best homework ever. But students will not do it." This sentiment was echoed by approximately 20 film-studies professors interviewed for the article, indicating a systemic challenge that has become particularly pronounced over the last decade and has intensified since the onset of the global pandemic. The irony is palpable: students aspiring to careers in film reportedly find it arduous to perform the fundamental act of watching a film, suggesting a profound shift in attentional capacities.

This educational hurdle extends beyond the specific discipline of film studies. Educators in various fields, from literature to history, have reported similar struggles with student engagement in sustained reading or lecture attendance, pointing to a more generalized erosion of sustained attention. While the visual nature of film makes the problem acutely visible in this context, the underlying cognitive shifts are likely impacting academic performance and learning across the curriculum.

The Smartphone’s Silent Assault on Cognitive Patience

The primary culprit identified by educators and researchers alike for this attention span crisis is the ubiquitous smartphone. These devices, integral to modern life, are implicated in diminishing what reading scholar Maryanne Wolf terms "cognitive patience." Defined as "the ability to maintain focused and sustained attention and delay gratification, while refraining from multitasking," cognitive patience is a cornerstone of deep learning and critical thinking.

The mechanism by which smartphones undermine this crucial ability is rooted in neurobiology. Constant interaction with digital devices activates specific neuronal bundles within the brain’s short-term reward system. These bundles, highly attuned to novelty and immediate gratification, anticipate a high expected value from checking the device, whether the payoff is a new notification, a social media update, or a quick search. This anticipation triggers a cascade of neurochemicals, primarily dopamine, which produces a strong urge to reach for the phone. Each successful interaction reinforces this neural pathway, effectively "voting" for the distracting behavior. Over time, the continuous activation of this reward system, combined with a lack of practice in sustained, uninterrupted attention, diminishes the capacity for cognitive patience. The brain becomes rewired to prefer short, frequent bursts of stimulation over prolonged, focused engagement, making activities like watching a two-hour film or reading a complex text increasingly challenging.

Attempts by institutions to mitigate this issue have often met with limited success. The founding director of Tufts University’s Film and Media Studies program, for instance, recounted the futility of banning electronics during screenings. Despite explicit rules, "About half the class ends up looking furtively at their phones," she observed, highlighting the compulsive nature of the behavior. Similarly, a Cinema and Media Studies professor at USC likened his students’ fidgeting and discomfort without their phones to "nicotine addicts going through withdrawal," underscoring the deep-seated physiological and psychological dependence that has developed. This dependency is not merely a matter of willpower but a deeply ingrained habit reinforced by the very architecture of modern digital platforms designed to maximize engagement through intermittent rewards.

Reclaiming Attention: A Cinematic Prescription

Recognizing the pervasive nature of this attention deficit, some experts are proposing innovative strategies to combat it. One such suggestion, articulated in a recent podcast discussing The Atlantic’s findings, advocates for transforming the act of watching a full-length film into a deliberate training goal for attention autonomy. This approach frames cinematic engagement not merely as entertainment or academic requirement, but as a therapeutic exercise: a "cinematic prescription" for a digitally fragmented mind.

The rationale behind this proposal is sound: like a new runner gradually building stamina to complete a 5k, the ability to sit through an entire film offers a challenging yet attainable milestone. It provides a concrete, measurable objective for individuals seeking to rebuild their capacity for sustained focus. This method encourages a conscious pushback against the default mode of digital distraction, offering a structured pathway to re-engage with long-form content.

To effectively pursue this goal of improving "cinematic cognitive patience," several strategies can be employed. Firstly, creating a dedicated, distraction-free environment is paramount. This means dimming lights, silencing all notifications, and ideally, placing the smartphone in another room or out of immediate reach. The physical removal of the tempting device reduces the neuronal "voting" for distraction. Secondly, active viewing techniques can enhance engagement. Instead of passively consuming, viewers can take notes, reflect on themes, or discuss the film with others immediately after viewing, transforming passive consumption into an active learning experience. Finally, starting with films known for their compelling narratives or visual artistry, and gradually increasing the duration or complexity, can help build endurance.

The irony of using one screen (a television or projector) to combat the distracting influence of another (a smartphone) is not lost on proponents of this method. However, the distinction lies in the intentionality and the nature of engagement: one is designed for sustained narrative immersion, the other for fragmented, intermittent interaction. This deliberate re-engagement with film can serve as a powerful metaphor and practical tool for individuals striving to regain control over their attention in an increasingly fragmented digital world.

Beyond the Buzz: Deconstructing AI’s Real Impact on the Workforce

In parallel with the discourse on digital attention, the media landscape is frequently dominated by discussions of artificial intelligence, often presented with a similar sense of urgency and, at times, alarm. Much of this coverage falls under what has been termed "AI vibe reporting": a style of journalism that prioritizes speculative, emotionally charged narratives over evidence-based analysis, frequently leading to unnecessary public anxiety. A recent example is another Atlantic article, titled "The Worst-Case Future for White-Collar Workers," which contains valuable insights in its later sections but opens with statements that illustrate this trend.

The article’s initial framing, focusing on dire predictions without immediate factual grounding, exemplifies the dangers of "vibe reporting." For instance, quotes suggesting an imminent and widespread collapse of white-collar employment due to AI often overlook the current limitations of the technology and the complex, iterative nature of economic shifts. While generative AI undeniably holds the potential for broad disruptions, its present-day impact on the job market is far from the catastrophic scenarios frequently depicted. This approach can create a distorted public perception, fueling fear rather than fostering informed understanding.

The Mechanics of "Vibe Reporting"

"Vibe reporting" often leverages dramatic headlines and anecdotal evidence, sometimes extrapolating from nascent technologies to widespread societal change prematurely. This journalistic style tends to focus on what might happen in a worst-case scenario, rather than what is demonstrably happening or is likely to happen in the near term, based on empirical data. This is particularly prevalent in the rapidly evolving field of AI, where groundbreaking research quickly translates into public discourse, often bypassing the rigorous scrutiny needed for nuanced understanding.

The background context for such reporting often lies in the historical pattern of public reaction to transformative technologies. From the Industrial Revolution to the advent of the internet, new innovations have consistently generated both immense excitement and profound anxieties about job displacement. The current AI wave, marked by the public release of highly capable generative models like ChatGPT in late 2022, has accelerated this cycle. This accessibility has brought AI from specialized research labs into everyday consciousness, making it a fertile ground for both genuine scientific exploration and speculative media narratives. The challenge for the public, therefore, is to discern between well-researched analysis and sensationalized predictions.

The Current State of AI Integration and Employment

What is actually occurring with AI and jobs presents a more intricate picture than "vibe reporting" suggests. While generative AI is indeed a powerful tool, its integration into the workforce is, at present, largely characterized by augmentation rather than outright replacement. The first significant shifts are anticipated in sectors heavily reliant on routine, predictable tasks, with software development frequently cited as an early adopter. However, even within this field, the magnitude and nature of the disruption remain unclear.

Initial reports from ongoing research, including a large-scale project surveying over 300 computer programmers on their current AI usage, suggest a complex reality. While AI tools are undoubtedly increasing productivity for some tasks, they are also introducing new challenges, requiring skill adaptations, and redefining workflows. This points towards a future where human expertise remains crucial, but is significantly enhanced by AI, rather than rendered obsolete. Economists and labor market analysts generally agree that job displacement will occur, but it will likely be a gradual process, accompanied by the creation of new roles and the transformation of existing ones. The timeline for these broad disruptions is still a subject of intense debate, with most experts indicating a multi-year, if not multi-decade, horizon for significant structural changes.

Official responses from governments and international organizations are increasingly focusing on education, reskilling initiatives, and policy frameworks to manage the transition, acknowledging the potential for change without endorsing the most alarmist predictions. This balanced approach emphasizes proactive preparation over reactive panic.

Navigating the Digital Information Landscape

The dual challenges of a diminishing attention span and the proliferation of "AI vibe reporting" underscore a critical need for enhanced digital literacy and media discernment in contemporary society. Just as individuals must consciously reclaim their cognitive patience from the allure of immediate digital gratification, they must also critically evaluate the information presented to them, particularly concerning complex technological advancements like AI.

The actual developments in AI and their implications for society are significant enough to warrant serious, fact-based reporting. There is no need for journalists to construct narratives that lean heavily on speculation or work backward from preconceived notions of future trends. While hypothetical explorations, such as those found in the latter parts of The Atlantic article discussing governmental responses to potential economic disruptions, are valuable and contribute to informed public discourse, they must be clearly distinguished from current realities or immediate forecasts.

The ability to sustain attention is fundamental to critical thinking, deep learning, and thoughtful engagement with complex issues—qualities essential for navigating both the educational landscape and the evolving technological frontier. Cultivating cognitive patience and developing a discerning eye for media narratives are not merely individual pursuits but collective imperatives for fostering a well-informed and resilient society in the digital age. By understanding the mechanisms of digital distraction and recognizing the pitfalls of sensationalized reporting, individuals can empower themselves to make more informed choices, both in how they engage with content and how they interpret the accelerating pace of technological change.