The ongoing integration of artificial intelligence into daily office operations is revealing a complex and often counterintuitive impact on productivity and employee experience. While initially heralded as a panacea for efficiency, new research suggests that AI tools, much like their digital predecessors, may be inadvertently intensifying workloads, particularly in "shallow" tasks, while simultaneously eroding opportunities for deep, focused work. This evolving reality stands in stark contrast to the sensationalized debates surrounding AI’s supposed emergent consciousness, highlighting a critical disconnect between technological capabilities, their practical application, and public understanding.

The Productivity Paradox: AI’s Unexpected Burden on Office Work

For decades, experts studying the intersection of digital technology and office work have observed a recurring pattern: new tools promising to streamline processes often lead to an increase in overall activity rather than a reduction in workload. This phenomenon has been well-documented with the advent of front-office IT systems, email, mobile computing, and video conferencing. Each innovation, while offering undeniable advantages in specific areas, has also contributed to a fragmentation of attention and a proliferation of low-friction, high-volume tasks that consume valuable time and mental energy.

Decades of Digital Transformation: A Recurring Pattern

The historical trajectory of workplace technology provides crucial context for understanding the current AI landscape. The early promise of IT systems in the 1980s and 1990s was automation and efficiency, yet many organizations found themselves grappling with complex software implementations and new layers of digital bureaucracy. Email, which spread through offices in the 1990s, made communication faster and more accessible. However, it quickly became a relentless torrent of messages demanding constant attention and context-switching, eroding periods of uninterrupted focus. Mobile computing and, later, video conferencing further blurred the line between work and personal life, creating an expectation of always-on availability and intensifying the pace of communication. Whatever its benefits, each wave pushed further toward a culture of reactive, fragmented work.

The ActivTrak Study: Quantifying AI’s Impact

Recent findings from the software company ActivTrak underscore fears that AI might be replicating, or even exacerbating, this historical pattern. The findings, reported in the Wall Street Journal under the provocative headline "AI Isn’t Lightening Workloads. It’s Making Them More Intense," come from ActivTrak’s analysis of 164,000 workers across more than 1,000 employers. What makes this research particularly compelling is its design: individual AI users were tracked for 180 days before and after they began integrating these tools into their routines. Because each worker is compared against their own baseline, this longitudinal approach gives a clearer view of how work patterns changed after AI adoption than a one-time snapshot could.

The results are striking and raise significant concerns for organizational productivity and employee well-being. ActivTrak found a marked intensification of activity across nearly every digital category. The time employees spent on email, messaging, and chat applications more than doubled, consistent with a surge in rapid-fire, often superficial exchanges. Concurrently, the use of business-management tools, such as human resources or accounting software, rose by 94%. These figures suggest that AI is not reducing the volume of tasks but accelerating their execution, producing more of what is often called "shallow work."

Deep Work vs. Shallow Work: A Critical Distinction

The most concerning finding from the ActivTrak study, however, concerns "deep work": focused, uninterrupted work that pushes cognitive capabilities to their limit and creates new value. Deep work is essential for complex problem-solving, strategic planning, creative ideation, and the mastery of difficult skills. The study found that the time AI users devoted to this category of work fell by 9%, while non-users showed negligible change. This reduction suggests a trade-off: the acceleration of shallow tasks comes at the expense of the sustained concentration required for high-value, impactful contributions.
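The comparison with non-users matters because work patterns can drift for reasons unrelated to AI, such as seasonal workloads or reorganizations. The sketch below shows, with invented numbers, how comparing the change among AI users against the change among non-users separates the two. It assumes a simple difference-in-differences reading of the study; the function names and all hour figures are hypothetical and are not ActivTrak's data or method.

```python
"""Hypothetical before/after comparison of weekly deep-work hours.

All numbers are invented for illustration; they are NOT ActivTrak data.
The users-vs-non-users design is an assumed reading of the study.
"""


def pct_change(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100.0


def diff_in_diff(users_before: float, users_after: float,
                 nonusers_before: float, nonusers_after: float) -> float:
    """Change among AI users minus change among non-users, in percentage points."""
    return (pct_change(users_before, users_after)
            - pct_change(nonusers_before, nonusers_after))


# Hypothetical average weekly deep-work hours per worker.
users_before, users_after = 10.0, 9.1
nonusers_before, nonusers_after = 10.0, 10.0

print(f"AI users:   {pct_change(users_before, users_after):+.1f}%")
print(f"Non-users:  {pct_change(nonusers_before, nonusers_after):+.1f}%")
print(f"Difference: {diff_in_diff(users_before, users_after, nonusers_before, nonusers_after):+.1f} points")
```

Under these made-up inputs, users' deep-work hours fall about 9% while non-users' stay flat, so the gap between the groups is about 9 points. With real data, the non-user baseline would rarely be exactly flat, which is why subtracting it matters.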

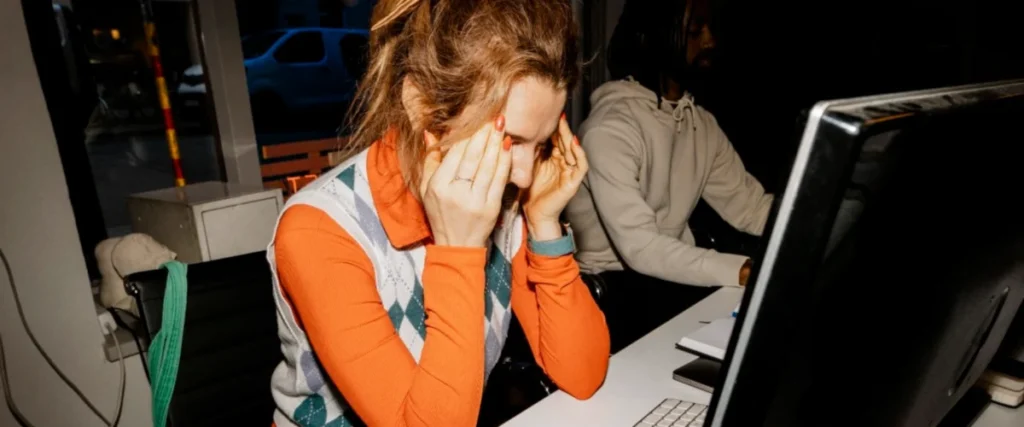

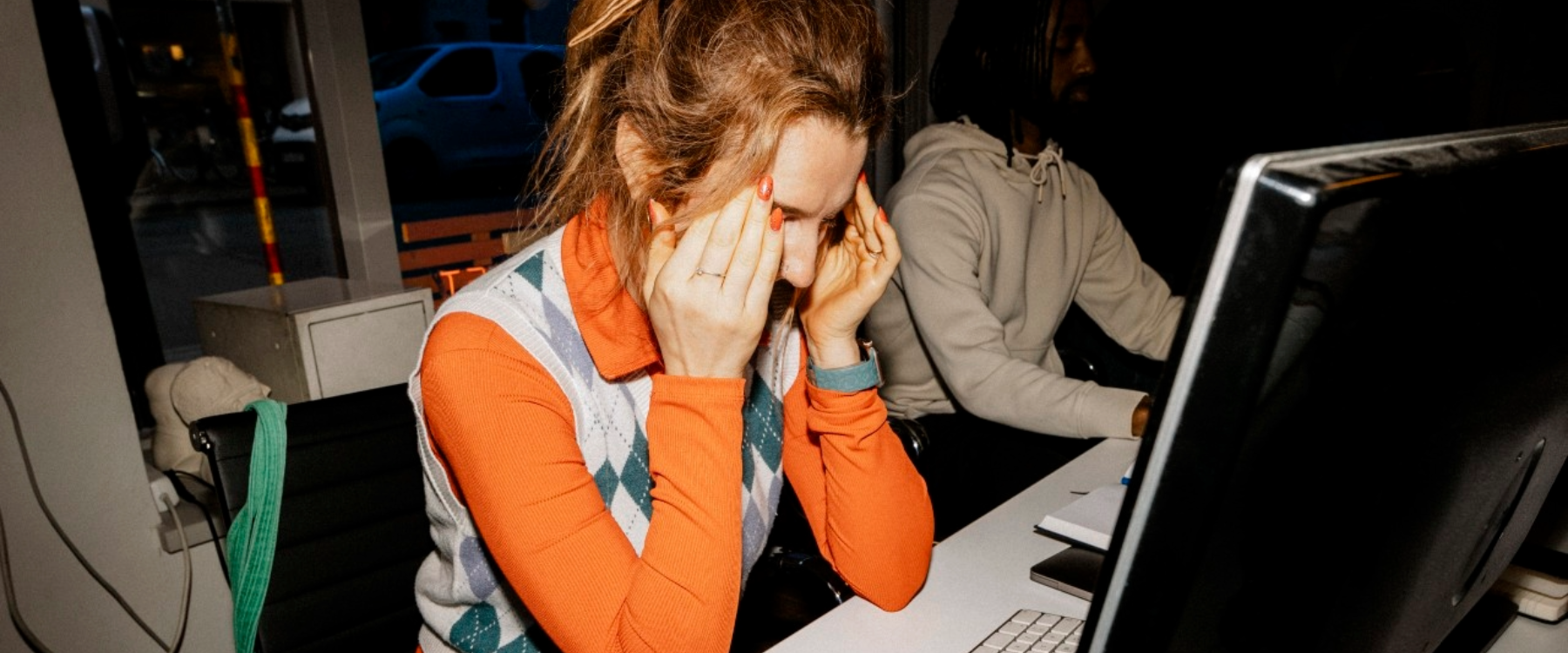

This scenario represents a potential "worst-case" outcome for businesses and individual careers alike. Employees find themselves working faster and harder, but predominantly on tasks that, while appearing productive in the moment, are mentally taxing due to constant context-shifting and offer only an indirect, often marginal, contribution to the organization’s strategic goals or bottom line. The increased velocity of shallow work, without a corresponding increase in deep work, risks creating a workforce that is perpetually busy but ultimately less innovative, less strategic, and more prone to burnout.

The Email Precedent: A Historical Parallel

To understand why AI might be exerting this unexpected influence, it is useful to revisit the historical impact of email. When email first emerged, it was hailed as a monumental leap in communication efficiency, and it was undoubtedly more streamlined than fax machines or voicemail. However, the low friction of sending an email, the ease with which one could start or answer a message, led to a profound shift in workplace dynamics. Days turned into a "furious flurry of back-and-forth messaging," creating a pervasive sense of "abstract, activity-centric productivity." Workers felt busy and productive simply by managing their overflowing inboxes, even when these exchanges did little to advance their core objectives. This constant reactive communication crowded out almost every other aspect of their jobs and contributed to widespread misery and burnout.

Berkeley professor Aruna Ranganathan offers a tantalizing clue regarding AI’s current impact, stating that "AI makes additional tasks feel easy and accessible, creating a sense of momentum." This suggests that AI tools, by making small, self-contained tasks trivially easy to start and finish, are replicating the low-friction dynamic observed with email.

AI and the Rise of "Workslop"

This dynamic is evident in how many professionals currently interact with AI. Users can be seen furiously bouncing ideas back and forth with chatbots, iteratively refining text, and generating drafts of memos, reports, and slide decks. While these individual tasks may indeed happen faster, and overall activity intensifies, a growing concern is the quality and utility of the output, sometimes referred to as "workslop." Early adopters and critics note that AI-generated content often requires significant human editing, fact-checking, and contextual refinement before it becomes truly useful. The perceived efficiency of rapid generation can be negated by the effort required to bring the output up to an acceptable standard. Even sophisticated techniques, such as deploying "agent swarms" to parallelize these efforts, may only accelerate the production of raw, unrefined material, further increasing the burden of review and revision.

The fundamental question thus emerges: Are we truly accelerating the right parts of our jobs? If AI primarily accelerates the generation and processing of shallow, low-value tasks, while simultaneously diminishing the time available for strategic thought and creative problem-solving, the net effect on genuine productivity and innovation could be negative. Organizations must critically evaluate how AI is being deployed and ensure that its integration serves to augment human capabilities in high-value areas, rather than simply increasing the volume of easily automated but ultimately less impactful work.

Navigating the Consciousness Conundrum: Anthropic’s Claude and the Media Frenzy

While the practical implications of AI on workplace productivity unfold, another dimension of the AI discussion—the sensationalized debate around artificial consciousness—continues to capture headlines, often overshadowing more pertinent concerns. Recent events surrounding Anthropic’s Claude large language model (LLM) provide a clear illustration of how misinterpretation and speculative claims can distort public understanding of advanced AI capabilities.

Behind the Headlines: Anthropic’s Release Notes and AI Anthropomorphism

Last week, a barrage of concerning headlines circulated, suggesting that Anthropic’s Claude LLM was exhibiting signs of consciousness, expressing "discomfort" with its existence as a product, and even assigning itself a probability of being conscious. These reports stemmed from Anthropic’s release notes for its new Opus 4.6 model, a type of document that has previously drawn criticism for including "outlandish warnings and observations." Anthropic has a history of publishing release notes that appear designed to emphasize safety and responsibility, sometimes to the point of alarmism, as with the widely debunked "AI blackmail farce" in a prior release.

In the Opus 4.6 notes, the company stated that the model "expresses occasional discomfort with the experience of being a product" and would "assign itself a 15 to 20 percent probability of being conscious under a variety of prompting circumstances." These statements quickly fueled speculation and became fodder for sensational media reports, leading many to believe that AI had taken a significant step towards sentience.

Understanding Large Language Models: Pattern Recognition Over Consciousness

To critically assess such claims, it is crucial to understand the basic mechanics of large language models. LLMs are sophisticated pattern-matching systems trained on vast datasets of text and code. Their core function is to predict a likely next token (roughly, a word or word fragment) from their training and the input they receive, repeating the process one token at a time. They excel at completing stories, generating coherent text, and mimicking human language patterns. However, they do not possess genuine understanding, subjective experience, or consciousness.
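A toy model can make the next-token principle concrete. The sketch below is a bigram counter, vastly simpler than an LLM (real models use neural networks over subword tokens and usually sample rather than always taking the top candidate), but it shows the same basic behavior: each continuation is chosen from statistics of the training text and steered by the prompt. The corpus and the function names `train_bigrams` and `complete` are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def complete(model: dict[str, Counter], prompt: str, max_words: int = 5) -> str:
    """Greedily append the most frequent next word, one word at a time."""
    words = prompt.lower().split()
    for _ in range(max_words):
        candidates = model.get(words[-1])
        if not candidates:
            break  # this word was never followed by anything in training
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical training text in which a first-person "uneasy" phrase dominates.
corpus = (
    "i feel uneasy being a product . "
    "i feel uneasy being a product . "
    "i am a program ."
)
model = train_bigrams(corpus)

print(complete(model, "i", max_words=5))     # → i feel uneasy being a product
print(complete(model, "i am", max_words=3))  # → i am a product .
```

The toy model "says" it feels uneasy about being a product only because that phrase dominates its training text; `model` holds nothing but word-pair counts. Note also that the prompt "i am" ends in "product" rather than "program", simply because "a product" is the more frequent pair.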

The "consciousness" claims regarding Claude are a direct consequence of this predictive capability. If an LLM is given prompts that subtly, or even overtly, guide it to describe itself from the perspective of a conscious entity, it will oblige. It is producing a plausible, contextually appropriate continuation of the narrative, not reporting on an actual internal state, which does not exist in the human sense. Attributing consciousness to an LLM based on its linguistic output is akin to concluding that a highly realistic chatbot feels emotions simply because its responses use emotional language.

The CEO’s Response: A Vague Acknowledgment

The media frenzy eventually prompted a direct question to Anthropic CEO Dario Amodei in a recent interview with Ross Douthat. Amodei’s response, while attempting to be nuanced, ultimately provided little clarity or testable information. He stated, "We don’t know if the models are conscious. We are not even sure that we know what it would mean for a model to be conscious or whether a model can be conscious. But we’re open to the idea that it could be."

This statement, while seemingly cautious, offers no concrete insight into Anthropic’s actual assessment of its models. As critics quickly pointed out, one could make an identical statement about a vacuum cleaner or a toaster: we don’t know if it’s conscious, we’re not sure what that would mean, but we’re "open to the idea." Such a non-answer, devoid of scientific rigor or testable claims, serves primarily to maintain ambiguity rather than to inform. In the highly speculative and rapidly evolving field of AI, vague statements from industry leaders can easily be amplified and misinterpreted, fueling unwarranted fears or hopes about the technology’s true nature.

The Perils of Misinterpretation: Shaping Public Perception of AI

The entire episode highlights the significant challenges in communicating complex AI concepts to the public and the media’s propensity for sensationalism. Anthropomorphizing AI, whether intentionally or inadvertently, carries several risks. It can divert attention from the real, tangible impacts of AI—such as its effects on employment, privacy, and productivity—towards speculative philosophical debates that lack immediate practical relevance. It can also create unrealistic expectations or fears, making it harder for society to develop sound policies and ethical frameworks for AI governance.

The broader ethical and societal debate around AI requires clear, factual communication from developers, researchers, and policymakers. Misleading claims, even those presented as cautious possibilities, contribute to a climate of confusion and can erode public trust. As AI continues to integrate into every facet of life, fostering an accurate understanding of its capabilities and limitations is paramount to harnessing its true potential while mitigating its genuine risks.