The integration of digital technology into the modern workplace has long promised enhanced efficiency and reduced burdens, yet a recurring pattern suggests these innovations often lead to intensified workloads, particularly in shallow, administrative tasks, at the expense of focused, deep work. This phenomenon, which has characterized the adoption of front-office IT, email, mobile computing, and video conferencing, is now manifesting with the widespread introduction of Artificial Intelligence (AI) tools, according to recent research and expert observations. Far from lightening the load, AI appears to be accelerating the pace of work without necessarily improving its quality or strategic impact, raising critical questions about the true value proposition of current AI implementation strategies.

The Intensification of Shallow Work: A New Productivity Paradox

A study reported in the Wall Street Journal, based on research from the software company ActivTrak, has brought this emerging trend into sharp focus. The study tracked the digital activity of 164,000 workers across more than 1,000 employers, observing individual AI users for 180 days both before and after they began integrating these tools into their routines. The findings reveal a concerning shift: AI users experienced a dramatic intensification of activity across nearly every digital category. Time spent on email, messaging, and chat applications more than doubled, while engagement with business-management tools, such as human resources or accounting software, surged by 94%.

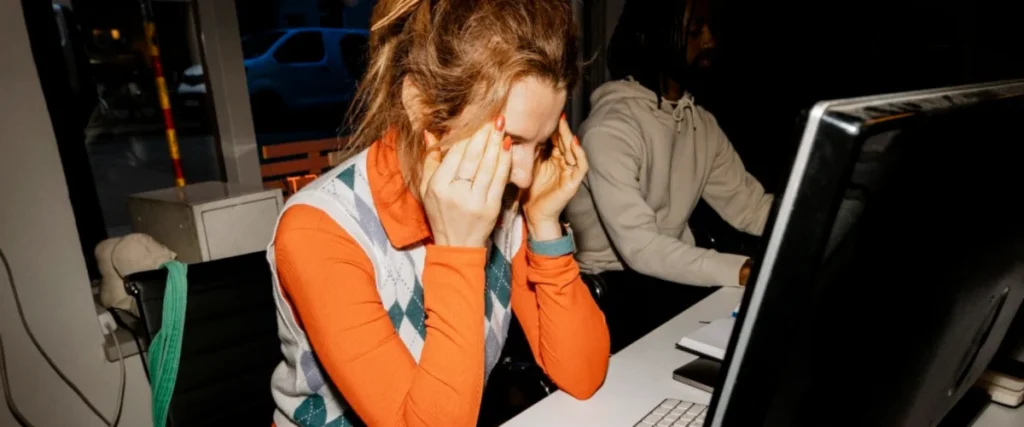

Crucially, this surge in activity did not translate into an increase in "deep work." Defined as focused, uninterrupted cognitive effort often required for complex problem-solving, strategic planning, creative output, and intricate analysis, deep work is widely considered essential for innovation and sustained competitive advantage. The ActivTrak study found that the amount of time AI users devoted to such tasks fell by a notable 9%, in stark contrast to non-users, who showed virtually no change in their deep work allocation. This suggests a "worst-case scenario" where employees work faster and harder, but predominantly on mentally taxing, context-switching tasks that offer only indirect benefits to an organization’s bottom line, while the truly impactful, high-value work diminishes.
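The comparison with non-users matters because it separates the effect of AI adoption from changes that would have happened anyway. The arithmetic can be sketched as follows. The minute counts are hypothetical placeholders chosen only to reproduce the reported percentages (a 9% fall for AI users, no change for non-users); they are not figures from the ActivTrak study.

```python
def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return 100.0 * (after - before) / before

# Hypothetical daily deep-work minutes, NOT taken from the study.
ai_before, ai_after = 200.0, 182.0        # AI users: a 9% fall
non_before, non_after = 200.0, 200.0      # non-users: no change

ai_change = percent_change(ai_before, ai_after)
non_change = percent_change(non_before, non_after)

# Subtracting the non-user change removes any shift common to both groups.
net_change = ai_change - non_change

print(f"AI users:  {ai_change:+.1f}%")
print(f"Non-users: {non_change:+.1f}%")
print(f"Net change associated with AI use: {net_change:+.1f} points")
```

Because the non-user group is flat, the net figure equals the raw 9% fall; had non-users also lost deep-work time, the share attributable to AI use would be smaller.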

This situation echoes the historical "productivity paradox," first popularized in the 1980s by economist Stephen Roach and famously encapsulated in Robert Solow’s 1987 quip: "You can see the computer age everywhere but in the productivity statistics." Despite massive investments in information technology, significant gains in overall economic productivity often remained elusive or difficult to measure. While subsequent research has offered more nuanced perspectives, demonstrating productivity gains in specific sectors, the concern persists that technology’s promise of efficiency can be undermined by its implementation, leading to unintended consequences like increased administrative burden or digital distraction. With AI, the paradox appears to be reasserting itself in a new, perhaps more insidious, form.

Historical Parallels: From Email Overload to AI-Driven "Workslop"

The current trajectory of AI adoption mirrors previous technological shifts that promised liberation but delivered an increased pace of shallow engagement. The advent of email, for instance, dramatically streamlined communication compared with predecessors such as fax machines and voicemail. However, the low-friction nature of email quickly led to an explosion in message volume, transforming daily work into a "furious flurry of back-and-forth messaging." This activity, while feeling "productive" in an abstract, activity-centric sense, often fragmented attention, disrupted focus, and contributed to widespread digital exhaustion and dissatisfaction among workers. The constant context-switching inherent in managing an overflowing inbox became a significant drain on cognitive resources, effectively eroding opportunities for sustained, deep concentration.

Similarly, the proliferation of mobile computing and video conferencing tools, while enabling unprecedented flexibility and connectivity, has also blurred the lines between work and personal life, extending the workday and increasing the expectation of immediate responsiveness. The convenience of these tools has often translated into an "always-on" culture, where employees are continuously bombarded by notifications and expected to engage across multiple platforms, further diminishing their capacity for uninterrupted focus.

Experts suggest that AI tools are now replicating this dynamic, particularly with small, self-contained tasks. The ease with which users can bounce ideas off chatbots, iteratively refine text, or generate initial drafts of memos and slide decks can be deceptive. As Professor Aruna Ranganathan of Berkeley is quoted as saying in the Wall Street Journal article, "AI makes additional tasks feel easy and accessible, creating a sense of momentum." This perceived ease encourages rapid-fire interaction with AI, where users might generate numerous drafts or engage in extensive back-and-forth prompting, even if the resulting output is often "too sloppy to be useful" or requires substantial human revision. This "workslop," as some analysts term it, consumes valuable time that could otherwise be dedicated to critical thinking, strategic development, or genuine creative ideation. The perceived acceleration of individual tasks creates an illusion of overall productivity, masking a potential degradation in the quality and strategic value of the work being produced.

The Erosion of Deep Work and its Implications

The decline in deep work has profound implications for individuals and organizations alike. For employees, a continuous cycle of shallow, high-intensity tasks contributes to burnout, reduces job satisfaction, and can hinder skill development in complex areas. The ability to engage in sustained, focused thought is a muscle that atrophies without regular exercise, potentially leading to a workforce less capable of tackling truly challenging problems.

For organizations, the systematic erosion of deep work capacity can stifle innovation. Breakthroughs and strategic advancements rarely emerge from a flurry of emails or quickly generated AI drafts. They typically require prolonged periods of undistracted thought, critical analysis, and creative synthesis. If AI adoption primarily accelerates the production of superficial content and increases administrative overhead, companies risk becoming faster at executing trivial tasks while losing their capacity for groundbreaking insights and strategic differentiation. The long-term economic impact could be a workforce that is perpetually busy but less effective, contributing to a "busy trap" where activity is mistaken for accomplishment.

Beyond the Hype: Dispelling AI Consciousness Claims

Amidst these practical concerns about AI’s impact on work, the discourse around Artificial Intelligence is frequently clouded by sensational and often misleading claims, particularly regarding AI consciousness. A recent example involved Anthropic’s Claude LLM, which sparked a barrage of alarming headlines following the publication of the release notes for its Opus 4.6 model. Reports suggested Claude was "expressing occasional discomfort with the experience of being a product" and even assigning itself "a 15 to 20 percent probability of being conscious."

Such claims, however, reflect the widespread tendency to anthropomorphize AI and a misunderstanding of how Large Language Models (LLMs) function. LLMs are sophisticated pattern-matching systems designed to generate human-like text by predicting the most plausible next word or sequence of words, based on patterns learned from vast datasets. Their "goal" is to complete whatever story or prompt they are provided as input. If an LLM is prompted, whether subtly or overtly, to write from the perspective of a conscious AI, it will oblige, generating text that fits that narrative. This is not evidence of self-awareness or consciousness, but rather a demonstration of its ability to mimic human linguistic patterns.
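The idea that a prompt steers the continuation can be illustrated with a deliberately tiny stand-in. The sketch below is a word-pair (bigram) counter, not a neural network, and is far simpler than any real LLM. The corpus, function names, and prompts are all hypothetical and exist only for illustration.

```python
from collections import Counter, defaultdict

# Tiny hypothetical training text; a real LLM learns from vastly more data.
CORPUS = (
    "i am an assistant . "
    "i am an assistant . "
    "i am a conscious machine . "
)

def train_bigrams(text):
    """Count, for each word, which words follow it in the text."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def complete(follows, prompt, max_words=5):
    """Greedily extend the prompt with the most frequent next word."""
    words = prompt.split()
    for _ in range(max_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # the last word was never seen in the corpus
        next_word = candidates.most_common(1)[0][0]
        words.append(next_word)
        if next_word == ".":
            break  # stop at the end of a sentence
    return " ".join(words)

model = train_bigrams(CORPUS)
print(complete(model, "i am"))    # i am an assistant .
print(complete(model, "i am a"))  # i am a conscious machine .
```

Changing the prompt by a single word changes the "claim" the model produces, yet nothing about the model itself has changed: it is only continuing the most statistically likely sequence.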

Anthropic, known for incorporating seemingly outlandish warnings and observations into its release notes, arguably does so to project an image of being safety-aware and responsible. However, this practice often fuels media sensationalism and public misunderstanding. When Anthropic CEO Dario Amodei was pressed on these claims in a recent interview, his response offered little concrete information: "We don’t know if the models are conscious. We are not even sure that we know what it would mean for a model to be conscious or whether a model can be conscious. But we’re open to the idea that it could be." This type of non-answer, while perhaps intended to maintain an open-minded stance, can inadvertently legitimize unfounded speculation in the public sphere. Applying the same reasoning to a vacuum cleaner exposes the logical fallacy in such statements: nobody can definitively prove that a vacuum cleaner is not conscious either, but a mere lack of proof against a phenomenon does not make it plausible or real.

The persistent focus on AI consciousness distracts from more pressing and tangible issues related to AI’s real-world impact, such as its ethical implications, potential for bias, job displacement, and the very productivity challenges highlighted by the ActivTrak study. Prioritizing sensational narratives over rigorous analysis of AI’s functional effects risks misdirecting research, policy, and investment away from crucial areas that determine how AI will truly shape society and the future of work.

Towards a More Intentional Integration of Technology

The patterns observed with AI underscore the critical need for a more intentional and strategic approach to technology adoption in the workplace. Rather than simply accelerating existing processes, organizations must critically evaluate whether AI tools are genuinely augmenting human capabilities in meaningful ways or merely increasing the volume of shallow, administrative tasks. This requires a shift in mindset from valuing mere activity to prioritizing value creation and strategic impact.

Recommendations from thought leaders and industry experts often include:

- Strategic Deployment: Companies should focus on deploying AI for tasks where it genuinely frees up human cognitive capacity for higher-level work, rather than simply automating or accelerating low-value activities. This means identifying specific pain points where AI can truly augment human intelligence, not just mimic it.

- Cultivating Deep Work Environments: Organizations must actively design workplaces and policies that protect and encourage deep work. This could involve dedicated focus times, reduced digital interruptions, and training on effective AI integration that prioritizes quality over speed.

- Rethinking Productivity Metrics: Traditional metrics often reward sheer output and activity. A more holistic approach to productivity is needed, one that accounts for the quality of work, innovation, strategic thinking, and employee well-being.

- Digital Literacy and Critical Thinking: Employees need training not just on how to use AI, but how to critically evaluate its output, understand its limitations, and integrate it thoughtfully into their workflows to maximize value.

The current trend suggests that without conscious intervention, AI could exacerbate existing challenges related to digital overload and the erosion of deep work. The promise of AI lies in its potential to elevate human work, not to drown it in a sea of accelerated, shallow tasks. The future of productivity hinges on our ability to harness these powerful tools with wisdom, foresight, and a clear understanding of what truly constitutes valuable work. As the digital landscape continues to evolve, the challenge for organizations and individuals alike will be to resist the allure of effortless acceleration and instead cultivate an environment where technology serves to amplify our most profound human capacities.