A study published in Neural Computation by researchers from the Okinawa Institute of Science and Technology (OIST) reports that artificial intelligence systems can learn and adapt better when they adopt a human-like practice: internal dialogue. The approach, which combines self-directed "inner speech" with a working memory system, improved how AI models organize ideas, weigh choices, and process information, echoing the cognitive benefits of introspection in humans. The findings suggest that AI training methods should give more weight to self-interaction dynamics when the goal is robust, versatile intelligent systems.

The Human Blueprint for Cognition: Inner Speech and Its Role

For centuries, philosophers and psychologists have pondered the nature of human inner speech, often described as the silent conversation we hold with ourselves. This internal monologue is far more than a quirk; it’s a fundamental cognitive tool. It enables individuals to rehearse actions, plan complex sequences, evaluate past experiences, and manage emotions. From organizing thoughts before a presentation to silently deliberating a difficult decision, inner speech is integral to problem-solving, self-regulation, and learning. It allows for a meta-cognitive process, where individuals can reflect on their own thinking, identify gaps in understanding, and construct more coherent mental models.

The OIST team, led by Dr. Jeffrey Queißer, Staff Scientist in OIST’s Cognitive Neurorobotics Research Unit, drew direct inspiration from this human attribute. "This study highlights the importance of self-interactions in how we learn," explains Dr. Queißer. "By structuring training data in a way that teaches our system to talk to itself, we show that learning is shaped not only by the architecture of our AI systems, but by the interaction dynamics embedded within our training procedures." In other words, how an AI system internally processes and repeats information to itself during learning can matter as much as its underlying neural network structure.

Addressing AI’s Grand Challenge: Generalization and Data Efficiency

One of the most persistent challenges in artificial intelligence is achieving true generalization. Modern deep learning models, including large language models, often require vast amounts of training data to learn specific tasks. While they excel within the confines of their training data, their performance can degrade sharply when they face unfamiliar situations or novel contexts, a phenomenon often termed "brittleness" or a lack of "content-agnostic information processing." Humans, by contrast, switch tasks rapidly and solve unfamiliar problems with ease, drawing on general principles rather than rote memorization.

"Rapid task switching and solving unfamiliar problems is something we humans do easily every day. But for AI, it’s much more challenging," notes Dr. Queißer. This gap is a bottleneck for deploying AI in dynamic, real-world environments. The scale of data required by state-of-the-art models also imposes significant computational, energy, and logistical costs. Developing AI that learns efficiently from sparse data, a far more common situation in practical applications, is therefore a major goal for researchers. The OIST research offers a promising way to address both of these issues by instilling a more adaptable, human-like learning mechanism.

The Methodology: Blending Inner Speech with Working Memory

The OIST researchers approached this problem with a distinct interdisciplinary mindset, bridging insights from developmental neuroscience and psychology with cutting-edge machine learning and robotics. Their methodology centered on two key components: self-directed internal speech and a specialized working memory system.

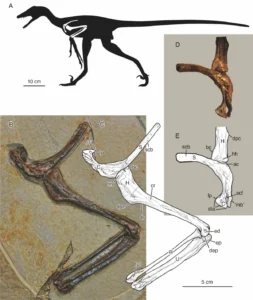

Working memory, a concept central to cognitive psychology, refers to the brain’s ability to hold and actively manipulate information for a brief period. It’s what allows us to follow a sequence of instructions, perform mental arithmetic, or remember the beginning of a sentence while processing its end. In AI, working memory systems temporarily store relevant data during a task so that it can be accessed without re-processing information from scratch. The OIST team began by examining various working memory architectures within AI models, testing their performance across tasks of varying complexity. They found that models equipped with multiple "slots," or temporary containers for information, performed better on challenging problems that demanded the simultaneous manipulation of several data points, such as reversing sequences or recreating intricate patterns.
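To make the multi-slot idea concrete, here is a minimal Python sketch of a slot-based memory applied to sequence reversal. It is an illustration only, not the architecture used in the study: the class `SlotMemory`, the function `reverse_with_memory`, and the wrap-around write rule are all assumptions introduced for this example.

```python
from typing import List, Optional


class SlotMemory:
    """Toy working memory with a fixed number of slots (hypothetical, not the study's model)."""

    def __init__(self, num_slots: int) -> None:
        if num_slots < 1:
            raise ValueError("num_slots must be at least 1")
        self.num_slots = num_slots
        self.slots: List[Optional[int]] = [None] * num_slots

    def write(self, position: int, item: int) -> None:
        # Assumption: positions wrap around, so a small memory overwrites older items.
        self.slots[position % self.num_slots] = item

    def read(self, position: int) -> Optional[int]:
        return self.slots[position % self.num_slots]


def reverse_with_memory(sequence: List[int], num_slots: int) -> List[Optional[int]]:
    """Store every item in a slot, then read the slots back in reverse order."""
    memory = SlotMemory(num_slots)
    for position, item in enumerate(sequence):
        memory.write(position, item)
    return [memory.read(position) for position in reversed(range(len(sequence)))]


enough_slots = reverse_with_memory([3, 1, 4, 5], num_slots=4)
too_few_slots = reverse_with_memory([3, 1, 4, 5], num_slots=1)
print(enough_slots)   # [5, 4, 1, 3]
print(too_few_slots)  # [5, 5, 5, 5]
```

With four slots every item survives until it is read back, so the reversal is exact. With one slot only the last item written is still available, which is a toy version of why tasks that juggle several data points at once benefit from more slots.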

The more novel step, however, was the introduction of self-directed internal speech. Described by the researchers as a quiet "mumbling" within the AI system, this mechanism involved setting training targets that encouraged the AI to talk to itself a specified number of times during a task. This internal dialogue allowed the AI to reflect on its current state, rehearse potential actions, and consolidate information within its working memory before committing to an output.
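One possible way to picture such a target is as a training example that reserves a fixed number of inner steps between the task input and the final answer. The Python sketch below shows that reading only. The `<mumble>` token, the function `build_training_example`, and the output mask are hypothetical names chosen for illustration, and the study's actual data format may differ.

```python
from typing import List, Tuple

MUMBLE = "<mumble>"  # hypothetical token marking one inner-speech step


def build_training_example(
    task_input: List[str],
    task_output: List[str],
    mumble_steps: int,
) -> Tuple[List[str], List[bool]]:
    """Return the full step sequence and a mask of steps that count as external output."""
    if mumble_steps < 0:
        raise ValueError("mumble_steps must be non-negative")
    inner_steps = [MUMBLE] * mumble_steps
    sequence = task_input + inner_steps + task_output
    # Input and inner-speech steps are internal; only the final answer is emitted.
    is_output = [False] * (len(task_input) + mumble_steps) + [True] * len(task_output)
    return sequence, is_output


sequence, is_output = build_training_example(
    ["A", "B", "C"], ["C", "B", "A"], mumble_steps=2
)
print(sequence)  # ['A', 'B', 'C', '<mumble>', '<mumble>', 'C', 'B', 'A']
```

Here `mumble_steps` plays the role of the "specified number of times": raising it gives the model more internal steps to work with its memory before it has to commit to an answer.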

Empirical Evidence: Clear Gains in Performance and Flexibility

When the self-directed internal speech mechanism was integrated with the multi-slot working memory system, the AI models showed significant performance improvements across a range of tasks. The most pronounced gains appeared in multitasking and in tasks involving multiple sequential steps. For instance, an AI trained with this method could switch between distinct tasks more efficiently, apply learned principles to novel variations of problems, and process complex instructions without extensive re-training.

Compared to systems that relied on memory alone, without internal dialogue, the combined approach showed clear advantages in flexibility and overall performance. The "mumbling" gave the AI a mechanism for additional internal processing, allowing it to organize intermediate results, re-evaluate its current state, and make more informed decisions, much as a human deliberates over a problem internally.

Crucially, the combined system remained effective even with sparse datasets, in contrast to the data-hungry nature of many contemporary AI models. "Our combined system is particularly exciting because it can work with sparse data instead of the extensive data sets usually required to train such models for generalization. It provides a complementary, lightweight alternative," states Dr. Queißer. This matters for deploying AI where large, well-curated datasets are unavailable or impractical to acquire, such as specialized scientific research, niche industrial applications, or rapidly evolving real-world scenarios.

Broader Implications and the Future of AI

The findings from OIST have profound implications for the future trajectory of AI research and development. This work represents a significant stride towards creating AI that is not only intelligent but also truly adaptive and resilient.

- Rethinking AI Architecture: The study challenges the prevailing focus solely on network architecture and pushes for a greater consideration of internal processing dynamics. Future AI designs may incorporate dedicated modules for internal dialogue and meta-cognition, making self-interaction a core component of learning.

- Enhanced Generalization: By enabling AI to build more robust internal representations and generalize from limited examples, this research paves the way for AI systems that can operate effectively in dynamic, unpredictable environments. This is critical for advancements in fields like autonomous robotics, medical diagnostics (where data can be sparse and sensitive), and personalized learning systems.

- Efficiency and Sustainability: The ability to learn effectively from sparse data has direct implications for reducing the computational and energy footprint of AI training. This could make advanced AI more accessible and sustainable, democratizing its development and deployment.

- Human-Robot Collaboration: AI that can better understand and adapt to human-like cognitive processes might lead to more intuitive and effective human-robot interaction. Robots that can "think aloud" internally could potentially explain their reasoning or adapt to human instructions more fluidly.

- Deepening Understanding of Human Cognition: Beyond its direct impact on AI, this interdisciplinary research also serves as a computational model for studying human learning. By reproducing aspects of human inner speech in AI, researchers can test hypotheses about the mechanisms behind human learning and behavior. This cross-pollination of ideas between AI and cognitive neuroscience is a hallmark of OIST’s research philosophy.

Moving Beyond the Lab: AI in the Real World

Looking ahead, the OIST team is focused on transitioning their research from controlled laboratory environments to more realistic, complex conditions. "In the real world, we’re making decisions and solving problems in complex, noisy, dynamic environments. To better mirror human developmental learning, we need to account for these external factors," explains Dr. Queißer. This next phase will involve testing these "self-talking" AI systems in environments with sensory noise, incomplete information, and unpredictable changes, pushing the boundaries of their adaptability.

The ultimate vision for this research extends to practical applications that can profoundly impact daily life. Imagine household robots that can learn new tasks with minimal instruction, agricultural robots that adapt to varying field conditions, or intelligent assistants that can genuinely understand and anticipate user needs. By exploring phenomena like inner speech and unraveling its underlying mechanisms, the OIST team is not only advancing the frontier of artificial intelligence but also contributing to a deeper understanding of human cognition itself. "We can also apply this knowledge, for example in developing household or agricultural robots which can function in our complex, dynamic worlds," Dr. Queißer concludes, underscoring the tangible benefits that such foundational research can bring to society.

The work may influence future benchmarks for AI performance and inspire new approaches to the long-standing challenges of generalization, data efficiency, and cognitive flexibility in machine learning. The silent internal monologue, long regarded as a distinctly human trait, is emerging as a useful tool for building machines that learn and adapt more flexibly.