Late last year, acclaimed fantasy novelist Brandon Sanderson delivered a compelling address titled "The Hidden Cost of AI Art" at Dragonsteel Nexus, the annual conference organized by his thriving media company. The talk, which resonated deeply within the creative community, provided a nuanced yet firm stance on the burgeoning field of generative artificial intelligence, particularly its implications for artistic creation. Sanderson’s discourse initiated a broader conversation about the intrinsic value of art, extending beyond its marketability to its profound impact on the human condition and the very definition of creativity in an increasingly automated world.

Sanderson, known globally for his intricate world-building in series like "Mistborn" and "The Stormlight Archive," and for his unprecedented success in crowdfunding projects, approached the topic with characteristic thoughtfulness. Early in his address, he articulated his position: "The surge of large language models and generative AI raises questions that are fascinating, and even if I dislike how the movement is going in relation to writing and art, I want to learn from the experience of what’s happening." This statement set the tone for an exploration that, while critical of AI-generated art, sought to understand the underlying reasons for his discomfort rather than merely dismissing the technology outright. He candidly admitted that the notion of AI-generated art causes his "stomach to turn," prompting him to delve deeper into the philosophical underpinnings of this visceral reaction.

The Inadequacy of Common Objections to AI Art

Sanderson systematically considered, and then set aside, a series of common objections to AI art. These include concerns about intellectual property theft, the potential for job displacement, and the argument that AI outputs lack true originality because they merely aggregate existing human creations. While acknowledging the validity of these practical and ethical dilemmas, Sanderson suggested that they did not fully capture the profound unease he felt. He posited that the core issue lay elsewhere, in a more fundamental aspect of human endeavor. This methodical approach allowed him to look past the familiar arguments and pinpoint what he believes is the true "hidden cost."

Art as a Transformative Journey: Sanderson’s Core Revelation

Sanderson’s ultimate conclusion centered on a deeply personal insight: the transformative power of the creative process for the artist themselves. Drawing from his own struggles with early, ultimately unsuccessful book manuscripts, he articulated that the paramount value of art resides not merely in its final product, but in the journey of its creation. He elaborated, "Maybe someday the language models will be able to write books better than I can. But here’s the thing: Using those models in such a way absolutely misses the point, because it looks at art only as a product."

He recounted the profound satisfaction of completing his first novel, irrespective of its commercial success. "Why did I write [my first manuscript]?… It was for the satisfaction of having written a novel, feeling the accomplishment, and learning how to do it. I tell you right now, if you’ve never finished a project on this level, it’s one of the most sweet, beautiful, and transcendent moments. I was holding that manuscript, thinking to myself, ‘I did it. I did it.’" This powerful testimony underscores the idea that art is a crucible for personal growth, skill development, and self-discovery. The "hidden cost" of AI art, in this view, is the bypass of this invaluable human experience, reducing creation to a mere output rather than a deeply formative process. The iterative struggle, the problem-solving, the emotional investment—these are the elements that forge the artist and imbue the art with human essence, elements entirely absent in AI generation.

Art as Deep Human Communication: A Complementary Perspective

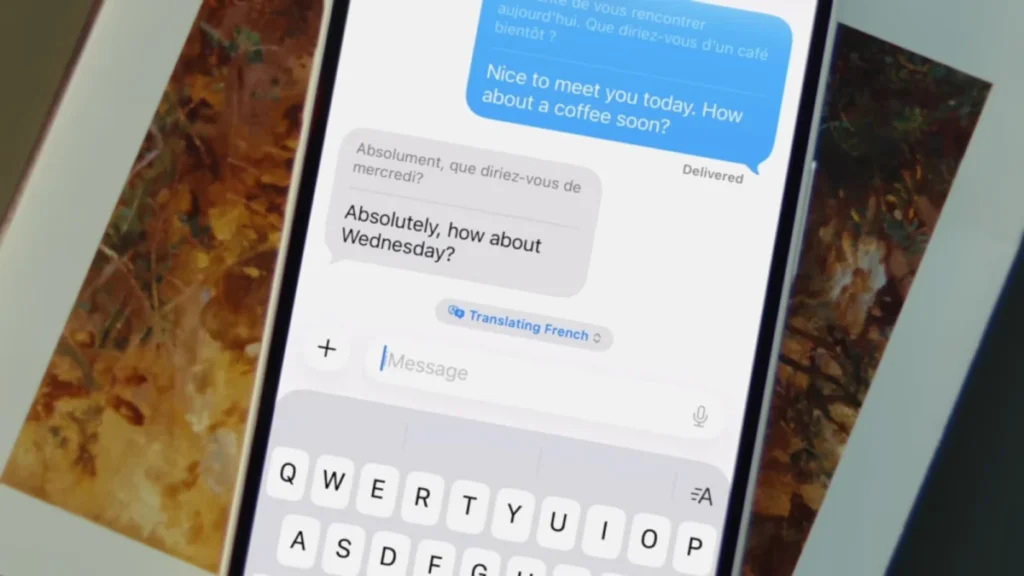

Complementing Sanderson’s focus on the artist’s transformative journey, another significant perspective prevalent in contemporary discourse views art as a profound act of human communication. Proponents of this view argue that art, whether a meticulously crafted page of prose, a vibrant painted canvas, or a haunting musical composition, serves as a tangible medium for transmitting complex internal cognitive states from one human mind to another. This act is often described as a form of "telepathy," where the artist encodes their experiences, emotions, and thoughts into a form that can be decoded and experienced by an audience, forging a unique connection across time and space.

From this standpoint, the idea of engaging with a book written entirely by a language model or watching a film generated solely from a textual prompt becomes intrinsically problematic, if not fundamentally "anti-human." Such creations, while potentially mimicking human artistic forms, lack the foundational element of a human consciousness attempting to convey its unique internal world. They might simulate the aesthetic, but they cannot transmit the authentic human experience. Critics argue that consuming AI-generated content in this context is akin to a "quixotic simulation of love," providing an illusion of connection without the genuine human intentionality that defines true artistic communication. This perspective reinforces Sanderson’s argument by highlighting that the value of art is not just in its creation process, but also in the unique form of inter-human connection it facilitates, a connection that AI, by its very nature, cannot replicate.

Human Agency and the Definition of Art

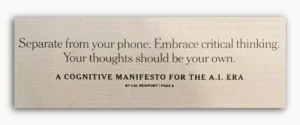

Perhaps the most impactful aspect of Sanderson’s address was his rousing conclusion, which pivoted from philosophical analysis to a powerful call to action regarding human agency. If art is indeed deeply human, he argued, then its definition and meaning ultimately rest with humanity itself. "That’s the great thing about art – we define it, and we give it meaning," he declared. "The machines can spit out manuscript after manuscript after manuscript. They can pile them to the pillars of heaven itself. But all we have to do is say ‘no.’"

This statement directly challenges a prevalent sentiment in much of the current commentary surrounding AI—a trend characterized by a "nihilistic passivity." This style of discourse often presents a grim, almost fatalistic scenario wherein AI inevitably disrupts or destroys sacred human domains, leaving little room for resistance or redirection. Sanderson’s perspective offers a powerful counter-narrative, reminding individuals and communities that they possess the ultimate authority to shape their existence, particularly in areas as fundamental as art and creativity. He asserted that the future of human creativity is not solely determined by the whims of tech magnates like Sam Altman of OpenAI or Dario Amodei of Anthropic, but by collective human choice and the assertion of shared values. The simple act of saying "no" becomes a profound exercise in defining boundaries and upholding the unique value of human-centric creation.

Broader Industry Context and Reactions

Sanderson’s sentiments resonate with a growing chorus of voices across various creative industries. The advent of sophisticated generative AI tools has ignited widespread concern among visual artists, illustrators, voice actors, musicians, and writers. Legal battles challenging the use of copyrighted material for AI training data are proliferating, with artists and authors filing lawsuits against AI developers for alleged infringement. Organizations like the Writers Guild of America (WGA) and SAG-AFTRA have highlighted AI as a critical issue in recent labor negotiations, seeking protections for human creators against algorithmic replication and displacement.

For instance, a 2023 survey by the Authors Guild found that a significant majority of its members were concerned about AI’s impact on their livelihoods and intellectual property. Similarly, artists on platforms like ArtStation have protested the inclusion of AI-generated images, advocating for clearer distinctions and ethical guidelines. Industry analysts project that without clear regulatory frameworks, the creative economy could face significant disruption, potentially devaluing human-made art and diminishing opportunities for emerging artists. The philosophical debate extends to art critics and ethicists who ponder the very definition of "authorship" and "originality" when algorithms can produce outputs that mimic human style with increasing fidelity. Some suggest a bifurcation of the art market, with a premium placed on "human-certified" creations, while others fear a race to the bottom where speed and quantity, driven by AI, overshadow quality and authenticity.

Implications for the Future of Art and Human Creativity

The debate sparked by figures like Brandon Sanderson has profound implications for the future trajectory of art and human creativity. It compels a re-evaluation of what societies truly value in artistic expression. If the "hidden cost" is indeed the loss of the artist’s transformative journey and the unique human connection inherent in art, then the collective response to AI must go beyond mere technological adaptation. It requires a conscious decision to champion and protect the human element.

This could manifest in various ways: consumers actively seeking out and supporting human-created art, platforms implementing clear labeling for AI-generated content, educational institutions emphasizing the creative process over purely product-oriented outcomes, and policymakers developing robust intellectual property laws that safeguard human creators. The challenge lies in navigating a rapidly evolving technological landscape without succumbing to technological determinism. Sanderson’s message is a powerful reminder that while technology can present new tools and challenges, the ultimate direction of human culture and the definition of its most cherished expressions remain firmly within human hands. The ongoing dialogue, enriched by perspectives such as Sanderson’s, is crucial for fostering a future where technological advancement serves, rather than diminishes, human creativity and its intrinsic value.

Correction and Clarification Regarding AI Vulnerability Reporting

In a recent "AI Reality Check" podcast episode, a statement was made concerning Anthropic’s Opus 4.6 large language model (LLM) and its reported ability to identify software vulnerabilities. A clarification is needed regarding the specific wording and source of that information.

The original statement asserted: "If you go back and look at the release notes for Anthropic’s earlier, less powerful Opus 4.6 LLM, they say the following: their researchers used Opus to find, quote, ‘over 500 exploitable zero-day vulnerabilities, some of which are decades old.’ And let’s stop for a moment because that note, which was hidden in the system card for Opus 4.6, is almost word for word what Anthropic said about Mythos."

While the core claim regarding Opus 4.6’s vulnerability detection capabilities holds true, the precise attribution and location of the quote require correction. The information was drawn from a report published by Anthropic on the same day as Opus 4.6’s release, which can accurately be described as "release notes" or "supplementary release notes," rather than a formal "system card."

Specifically, this report stated: "Opus 4.6 found high-severity vulnerabilities, some that had gone undetected for decades." In another section, it further detailed: "So far, we’ve found and validated more than 500 high-severity vulnerabilities." Both the title of the report and its concluding remarks indeed referred to these identified vulnerabilities as "0-day."

However, the specific phrasing, "over 500 exploitable zero-day vulnerabilities, some of which are decades old," does not appear verbatim within Anthropic’s official report. This particular summary was, in fact, derived from a tweet by a third-party observer, which accurately summarized the findings presented in the report. The original podcast wording inadvertently implied that this exact quote was directly from Anthropic’s published documentation, which was not the case.

This clarification is provided to ensure factual precision. We extend our gratitude to the AI researcher who brought these specific points to attention. Accuracy in reporting on complex technological advancements is paramount, and corrections are always welcomed and appreciated. Concerns or notes regarding factual content can be directed to [email protected].