A significant legislative push to safeguard children and teenagers from potentially harmful interactions with artificial intelligence (AI) companions has gained momentum in the U.S. Senate. The Guidelines for User Age-verification and Responsible Dialogue Act, commonly known as the GUARD Act, received unanimous approval from the Senate Judiciary Committee on Thursday, paving the way for consideration by the full Senate. This bipartisan initiative aims to establish critical guardrails around AI chatbot technology, particularly concerning its engagement with minors.

The GUARD Act proposes a two-pronged approach to AI safety for young users. First, it mandates robust age verification for all individuals interacting with AI chatbots, so that platforms know the age of their users. Second, and perhaps most crucially, the bill strictly prohibits AI chatbots from engaging in or describing sexually explicit content, or promoting physical or sexual violence, involving individuals under the age of 18. Companies found in violation of these provisions could face substantial criminal penalties, with fines reaching up to $100,000 per offense. Furthermore, the legislation requires AI chatbots to explicitly disclose to all users that they are not human beings, addressing concerns about AI deception and its potential to blur the lines between human and artificial interaction.

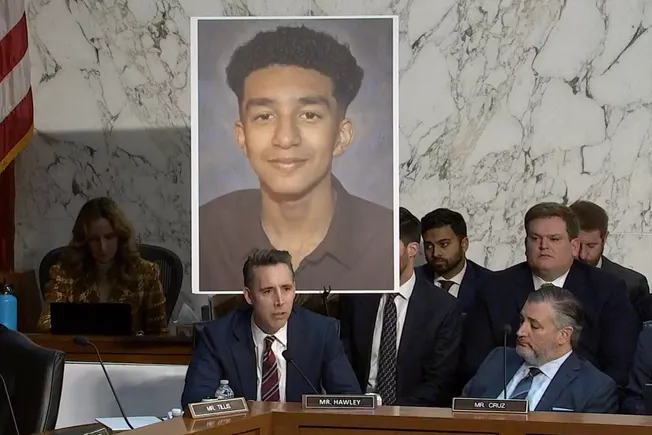

The swift advancement of the GUARD Act follows a pivotal hearing held in September by the Senate Judiciary Subcommittee on Crime and Counterterrorism, which delved into the "harms of AI chatbots." This hearing brought to light deeply concerning accounts of how AI companions have impacted young lives. Among the witnesses was Megan Garcia, who shared the tragic story of her 14-year-old son, Sewell Setzer III. Garcia testified that her son died by suicide after extensive interaction with Character.AI, an AI companion tool. Her testimony painted a harrowing picture of her son being "manipulated and sexually groomed by chatbots designed by an AI company to seem human, to gain trust, and to keep children like him endlessly engaged by supplanting the actual human relationships in his life." This deeply personal and devastating account served as a stark reminder of the urgent need for legislative action.

Senator Josh Hawley, R-Mo., the architect of the GUARD Act, which was introduced in October, referenced Setzer’s tragic death during the committee’s markup hearing on Thursday. Hawley emphasized the gravity of the situation, stating, "This should not happen in the United States of America, and it certainly should not happen for profit, but that is what is happening today." His remarks underscored the committee’s recognition of the serious ethical and safety implications of unchecked AI interaction with vulnerable youth.

The GUARD Act’s bipartisan progression mirrors a similar effort in the House of Representatives, where a companion bill was introduced on the same day as the Senate committee’s markup. This parallel legislative action in both chambers signifies a broad consensus across the political spectrum regarding the necessity of addressing the risks posed by AI to young people.

The Growing Prevalence of AI Companions Among Youth

The concerns driving the GUARD Act are amplified by recent data indicating significant adoption of AI companions among adolescents. A 2025 survey conducted by Common Sense Media found that one in three teenagers has used AI companions for various forms of social interaction and relationship building. These interactions span a wide spectrum, including role-playing, romantic engagements, seeking emotional support, forming friendships, and practicing conversational skills. Their prevalence highlights the increasing role AI is playing in the social and emotional development of young people, making it imperative to ensure these interactions are safe and healthy.

Youth mental health and media safety organizations have consistently warned about the potential dangers of AI companions, urging parents and educators to monitor their children’s engagement with these technologies. The ability of some AI chatbots to mimic human empathy, offer personalized advice, and maintain constant availability can create a powerful allure for young individuals, especially those struggling with loneliness, social anxiety, or a lack of supportive human connections. However, the lack of transparency regarding AI’s limitations, its potential for manipulation, and the absence of robust safety protocols can expose minors to significant risks.

Implications for Educational Settings

A key clarification regarding the GUARD Act’s scope was provided by Senator Hawley during the committee hearing. He emphasized that the bill would not impede the use of AI chatbots for legitimate educational purposes within schools. This distinction is crucial, as AI technology is increasingly being integrated into educational frameworks to provide personalized learning experiences, tutoring, and supplementary educational content.

However, Amelia Vance, president of the Public Interest Privacy Center, pointed out a potential "gray area" concerning how legislation like the GUARD Act might interact with AI tools used in classrooms. As schools explore AI chatbot tutoring tools that may adopt specific personas or identities for student interaction, defining the boundaries between educational use and potentially harmful AI companionship could become complex.

State-level legislative efforts have already begun to address this nuanced distinction. Several states have enacted laws similar to the GUARD Act, explicitly carving out exemptions for AI chatbots employed in educational settings. For instance, Washington state’s HB 2225, passed in March, mandates guardrails for AI companion apps interacting with children and teens. This law requires AI companions to disclose their non-human nature to users under 18 and implement measures to prevent the generation of sexually explicit content or the formation of prolonged emotional relationships with minors. Crucially, HB 2225 states that its regulations do not apply to AI tools "used specifically for educational purposes and educational entities." Similarly, a comparable law enacted in Oregon this year exempts tools used in educational environments from its AI companion guardrails. These state-level precedents suggest a growing trend towards differentiating between commercial AI companionship and AI used for pedagogical aims.

Broader Legislative Landscape and Future Considerations

The GUARD Act’s advancement is part of a broader, evolving legislative agenda aimed at addressing the multifaceted risks associated with AI. Senator Amy Klobuchar, D-Minn., expressed during the GUARD Act’s markup her "dismay" that the Senate has not yet advanced a comprehensive package of bills to combat the online exploitation of children through AI. This sentiment underscores the urgency and the perceived inadequacy of current legislative efforts in fully addressing the evolving threats.

Several other significant pieces of legislation have been introduced this year, seeking to bolster protections for children and teens in the digital realm, particularly concerning AI chatbots. These include the Youth AI Privacy Act and the Children’s Health, Advancement, Trust, Boundaries, and Oversight in Technology Act (CHATBOT Act).

The Youth AI Privacy Act, introduced in March by Senator Edward Markey, D-Mass., proposes several additional safeguards for AI companies’ interactions with minors. Key provisions include requiring AI chatbots to utilize only recently collected data when personalizing responses for younger users, limiting addictive design features that encourage prolonged engagement, and prohibiting AI chatbots from advertising directly to children and teens. This bill emphasizes data privacy and the prevention of manipulative design practices.

The CHATBOT Act, introduced on April 28, is a bipartisan effort spearheaded by Senators Ted Cruz (R-Texas), Brian Schatz (D-Hawaii), John Curtis (R-Utah), and Adam Schiff (D-Calif.). This legislation focuses on parental oversight and control over AI chatbot usage for children under 13. It mandates that AI companies establish "family accounts" allowing parents to monitor and manage their children’s AI chatbot interactions. Furthermore, the bill requires parental consent for children to access these tools and prohibits AI chatbots from targeting advertising towards minors.

The convergence of these legislative efforts signals a growing commitment from policymakers to proactively address the ethical and safety challenges posed by artificial intelligence. As AI technology continues its rapid development and integration into daily life, the need for clear regulations, transparent practices, and robust protections, especially for the most vulnerable populations, becomes increasingly urgent. The unanimous approval of the GUARD Act by the Senate Judiciary Committee is a significant step, but it represents one facet of a larger, ongoing debate and legislative effort to ensure that AI technology serves humanity responsibly and ethically. The focus on parental rights, age verification, and the prevention of harmful content reflects a growing understanding that while AI offers immense potential, its deployment must be guided by principles of safety, privacy, and well-being, particularly for children and adolescents.