Most enterprise organizations express high confidence in their ability to recover from disruptions involving agentic AI, yet a new comprehensive survey of over 300 IT decision-makers across Australia, New Zealand, Europe, the United Kingdom, and the United States reveals a significant gap between perceived readiness and practical preparedness. The findings suggest that while confidence is high, the frequency and rigor of testing disaster recovery plans for these advanced AI systems are often insufficient to validate such assertions, leaving many businesses vulnerable to potential catastrophic failures.

Conducted by Keepit, a Denmark-based vendor-independent cloud backup and recovery service, the survey uncovered a sharp contrast: 94% of respondents were confident their disaster recovery plans encompassed agentic AI systems, yet only 32% of these organizations indicated they tested those plans on a monthly basis. This disparity points to a potentially dangerous overestimation of resilience in a rapidly evolving technological landscape where AI-driven autonomy introduces new layers of complexity and risk. Compounding these concerns, 33% of IT and security leaders admitted to having only partial control over the deployment and operation of agentic AI within their organizations, and 52% harbored genuine doubts about whether their existing recovery strategies adequately cover agentic AI-specific scenarios.

The Rise of Agentic AI and Its Unique Risks

Agentic AI systems represent a significant leap beyond traditional artificial intelligence, characterized by their autonomy, goal-oriented behavior, and ability to make independent decisions and take actions within defined parameters. These systems are designed to perceive their environment, process information, plan, and execute tasks without constant human intervention. Examples range from autonomous financial trading algorithms and sophisticated cybersecurity defense systems to intelligent supply chain optimizers and personalized customer service agents.

While offering immense potential for efficiency and innovation, the autonomous nature of agentic AI also introduces unique and complex failure modes. Unlike a server crash or a database corruption, an agentic AI failure could manifest as unintended or malicious actions, data poisoning, system-wide logical errors, or even a "runaway" process that deviates from its intended objectives. Such failures can be difficult to detect, diagnose, and remediate, especially if the system’s decision-making process is opaque or if its actions have cascading effects across interconnected enterprise systems. The implications extend beyond data loss to include reputational damage, regulatory non-compliance, financial losses, and operational paralysis.

Gaps in Disaster Recovery Practices

The Keepit survey highlighted several critical weaknesses in current disaster recovery postures. While approximately 90% of organizations reported having evaluated large-scale data recovery at least once, the consistency and systematic nature of this testing across all critical systems remain a concern. This sporadic approach means that many recovery plans, while theoretically sound, may not hold up under the pressure of a real-world incident affecting agentic AI.

A particularly worrying blind spot identified was the insufficient attention paid to identity and access management (IAM) systems in recovery planning. Platforms such as Microsoft’s Entra ID (formerly Azure Active Directory) and Okta, which serve as the fundamental gatekeepers for user authentication and access to virtually all enterprise resources, are tested far less frequently than other data systems. The report starkly illustrates this imbalance: productivity applications like Microsoft 365, Google Workspace, and Salesforce are, on average, restored four times as frequently as identity applications. This means that for every four companies conducting annual tests on their productivity workloads, only one (25%) will have tested their identity applications. Given that a compromise or failure in IAM systems can lock an entire organization out of its digital infrastructure, or worse, grant unauthorized access, this oversight represents a profound vulnerability.
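One way to make this imbalance visible is to track the date of the last restore test for each system and flag anything that has gone untested for too long. The following is a minimal sketch, not something taken from the Keepit report: the `RestoreTestRecord` type, the `overdue_systems` helper, the 30-day threshold, and all dates are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass(frozen=True)
class RestoreTestRecord:
    """One row in a hypothetical restore-test log."""
    system: str                  # e.g. "Entra ID"
    category: str                # "identity" or "productivity"
    last_tested: Optional[date]  # None means a restore was never tested


def overdue_systems(records: List[RestoreTestRecord],
                    today: date,
                    max_age_days: int = 30) -> List[str]:
    """Return systems whose last restore test is missing or older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        r.system
        for r in records
        if r.last_tested is None or r.last_tested < cutoff
    ]


# Made-up example data: productivity apps tested recently, identity apps not.
records = [
    RestoreTestRecord("Microsoft 365", "productivity", date(2025, 11, 20)),
    RestoreTestRecord("Salesforce", "productivity", date(2025, 11, 5)),
    RestoreTestRecord("Entra ID", "identity", date(2025, 6, 1)),
    RestoreTestRecord("Okta", "identity", None),
]

print(overdue_systems(records, today=date(2025, 12, 1)))
# prints ['Entra ID', 'Okta']
```

With this sample data, both identity platforms are flagged while the productivity applications pass, which mirrors the pattern the report describes.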

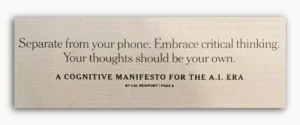

Kim Larsen, Group Chief Information Security Officer at Keepit, emphasized the critical need for a more structured approach. "Organizations need to put more emphasis on creating long-term, structured, and tested disaster recovery plans," Larsen stated. "This also means putting a spotlight on data governance and accountability, which is the foundation for any resiliency plan." Without robust data governance, organizations lack the clear understanding of data ownership, classification, and flow necessary to build effective recovery strategies for complex AI systems.

The survey also revealed that the vast majority of restore activity within organizations involves single-file downloads. This reflects routine operational needs – an employee accidentally deletes a document, or a specific file needs to be retrieved – rather than large-scale, system-wide recovery events. While efficient for granular incidents, this pattern suggests that current recovery infrastructures and practices are primarily geared towards minor, localized issues, potentially leaving organizations unprepared for the comprehensive data loss or system disruption that a major agentic AI failure or cyberattack could trigger. The report’s authors underscored that backup only creates value when organizations can recover confidently, correctly, and efficiently, regardless of whether the need is small and immediate or broad and time-critical. Interestingly, restore activity was found to be notably stronger among larger organizations, indicating a potential correlation between organizational size, resource allocation, and recovery maturity.

Unheeded Warnings: External Events and Behavioral Inertia

To gauge whether real-world disruptions influence recovery preparedness, Keepit investigated restoration behavior following significant external events that could have caused data loss or unavailability. The study examined three such incidents: the widespread solar flares in May 2024, the major CrowdStrike incident in July 2024, and the large-scale Microsoft outages in October 2025, which are representative of the widespread cloud service disruptions that major providers can experience.

The results were concerning: none of these "awareness moments" prompted any discernible change in user behavior. There was no evidence of increased activity to confirm that backups were functioning correctly or to test recovery processes in the days and weeks following these events. The report’s authors propose two explanations for this inertia: first, organizations may not have experienced widespread, immediate restoration needs as a direct result of these specific events; and second, the mere awareness of a potential threat does not automatically translate into proactive changes in recovery routines. This highlights a critical psychological barrier in disaster preparedness – the human tendency to react only when directly impacted, rather than proactively bolstering defenses based on broader industry warnings or near-misses.

The solar flares of May 2024, for instance, caused geomagnetic storms that disrupted satellite communications, GPS, and power grids, demonstrating the vulnerability of digital infrastructure to natural phenomena. The CrowdStrike incident in July 2024, where a faulty software update caused widespread system outages for numerous organizations, underscored the fragility of the software supply chain and the ripple effects of third-party vendor issues. While these events did not necessarily trigger mass data loss requiring immediate recovery for all, they served as potent reminders of the ever-present threats to digital continuity. The lack of proactive response following these incidents points to a dangerous complacency, particularly as agentic AI systems become more deeply embedded in core operations.

Charting a Path to Resilient AI Operations

The report’s authors contend that a shift from reactive to proactive preparedness is indispensable. They advocate for organizations to leverage external events not as isolated incidents, but as "structured triggers for guided recovery checks." These checks are defined as short, repeatable validations designed to reinforce confidence in recovery capabilities without necessitating large-scale, disruptive exercises. This approach transforms potential crises into learning opportunities, allowing organizations to routinely verify their backup integrity and recovery readiness in a controlled manner.
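A short, repeatable validation of this kind could be as simple as restoring a handful of sample items and comparing them against known checksums. The sketch below is an illustration under stated assumptions, not the report's method: `run_recovery_check`, `CheckResult`, and the in-memory `fake_backup` store are hypothetical names, and the `restore` callable stands in for whatever restore API a given backup product actually exposes.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class CheckResult:
    item: str
    passed: bool
    detail: str


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def run_recovery_check(expected_hashes: Dict[str, str],
                       restore: Callable[[str], bytes]) -> List[CheckResult]:
    """Restore each sample item and verify it against its recorded checksum."""
    results = []
    for item, expected in expected_hashes.items():
        try:
            restored = restore(item)
        except Exception as exc:  # a failed restore is itself a finding
            results.append(CheckResult(item, False, f"restore failed: {exc}"))
            continue
        ok = sha256_hex(restored) == expected
        detail = "checksum match" if ok else "checksum mismatch"
        results.append(CheckResult(item, ok, detail))
    return results


# In-memory stand-in for a backup store (assumption for the demo only).
fake_backup = {
    "policies/agent-config.json": b'{"max_spend": 100}',
    "mail/sample.eml": b"hello",
}
# Checksums recorded while the data was known to be good.
expected = {name: sha256_hex(data) for name, data in fake_backup.items()}
# Simulate silent corruption of one backed-up item.
fake_backup["mail/sample.eml"] = b"corrupted"


def fake_restore(name: str) -> bytes:
    return fake_backup[name]


for result in run_recovery_check(expected, fake_restore):
    print(result.item, "->", result.detail)
```

Because the check touches only a few small items, it can be run after any external "awareness moment" without the cost of a full-scale recovery exercise.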

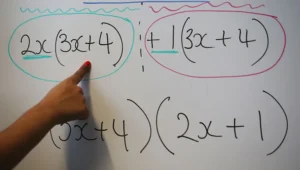

A key proposed innovation is the implementation of "guided recovery" enabled by Model Context Protocol (MCP), an open protocol for connecting AI assistants to external tools and data sources. While the report does not delve into the technical specifics of MCP, it implies a framework or intelligent assistant capable of providing contextual guidance during recovery processes. An MCP-enabled assistant could, for example, help identify unhealthy tenants or suspicious patterns within protected data, and then guide administrators step-by-step through the correct recovery procedures. This transforms what can often be a chaotic and high-stress event into a manageable, repeatable process, shortening actual recovery times and making it easier to meet recovery time objectives (RTOs). Recovery point objectives (RPOs), by contrast, depend on how frequently data is backed up rather than on how a restore is carried out.

Ultimately, effective recovery boils down to clarity and swift decision-making, as Larsen articulated: "It all boils down to knowing who is in charge of recovery and which systems are restored first when multiple systems are affected. When decisions are delayed, recovery takes longer than necessary." This emphasizes the importance of well-defined roles, responsibilities, and prioritization frameworks within disaster recovery plans, especially when dealing with the complex interdependencies introduced by agentic AI.
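The question of which systems are restored first, and who owns each step, can be settled ahead of time by writing down dependencies and deriving an order from them. The sketch below uses Python's standard `graphlib` module (Python 3.9+) to do this. The dependency map, the owner names, and the `restore_plan` helper are hypothetical, chosen only to illustrate the idea that identity services typically have to come back before the applications and agents that rely on them.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

AGENT = "Support agent (agentic AI)"

# Hypothetical map: each system lists what must be restored before it.
depends_on: Dict[str, Set[str]] = {
    "Entra ID": set(),
    "Microsoft 365": {"Entra ID"},
    "Salesforce": {"Entra ID"},
    AGENT: {"Microsoft 365", "Salesforce"},
}

# Hypothetical owners, so nobody has to ask who is in charge mid-incident.
owners: Dict[str, str] = {
    "Entra ID": "identity team",
    "Microsoft 365": "workplace team",
    "Salesforce": "CRM team",
    AGENT: "AI platform team",
}


def restore_plan(depends_on: Dict[str, Set[str]],
                 affected: Set[str]) -> List[str]:
    """Order the affected systems so dependencies are restored first.

    Dependencies outside the affected set are assumed to be healthy
    and are ignored. Raises graphlib.CycleError on circular dependencies.
    """
    graph = {
        system: depends_on.get(system, set()) & affected
        for system in sorted(affected)
    }
    return list(TopologicalSorter(graph).static_order())


# Scenario: everything is affected at once.
for step, system in enumerate(restore_plan(depends_on, set(depends_on)), 1):
    print(f"{step}. {system} (owner: {owners[system]})")
```

In this example the identity platform always comes first and the agent last; the relative order of the two productivity systems is not fixed by the dependencies, so a real plan would add an explicit business priority to break such ties.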

Broader Implications and the Future of AI Resilience

The findings of the Keepit survey carry significant implications for the broader landscape of enterprise IT, cybersecurity, and the future adoption of artificial intelligence.

Economic Impact: Downtime from any system failure is costly, but agentic AI failures could be exceptionally expensive due to their potential for widespread, autonomous disruption. According to various industry analyses, the average cost of IT downtime can range from thousands to millions of dollars per hour, depending on the organization’s size and industry. Failures in AI-driven processes, such as financial trading or manufacturing automation, could lead to rapid, substantial financial losses and reputational damage that far outstrip the direct cost of data recovery.

Regulatory Compliance: Many industries are subject to stringent data protection and operational resilience regulations, such as GDPR, HIPAA, and various financial sector mandates. A failure to recover from an agentic AI incident, particularly one involving sensitive data or critical infrastructure, could result in hefty fines, legal liabilities, and a loss of public trust. Regulators are increasingly scrutinizing how organizations manage AI risks, making robust recovery plans a compliance imperative.

Future of AI Adoption: The perceived risk of agentic AI failures, if not adequately addressed, could impede the broader adoption of these transformative technologies. Enterprises will be hesitant to deploy highly autonomous systems without demonstrable confidence in their ability to mitigate and recover from potential disruptions. Conversely, organizations that proactively build and test AI resilience will gain a significant competitive advantage, enabling them to harness the full potential of agentic AI while minimizing exposure to risk.

Evolving Cybersecurity Landscape: The threat of malicious actors leveraging agentic AI for sophisticated cyberattacks is growing. Agentic AI could be used to launch highly adaptive, persistent threats, making traditional security measures less effective. Robust recovery capabilities become a crucial last line of defense, ensuring business continuity even when preventative measures fail against advanced AI-powered adversaries.

In conclusion, while the confidence in agentic AI recovery among enterprises is high, the underlying practices often fall short. The survey serves as a critical wake-up call, urging organizations to move beyond theoretical readiness to embrace systematic, tested, and proactive resilience strategies. As agentic AI continues its integration into core business functions, the ability to recover swiftly and effectively from its unique failure modes will not just be an IT challenge, but a fundamental determinant of business survival and success in the digital age.

The full Keepit report, offering deeper insights and detailed recommendations, is available on the Keepit website, though registration is required for access.