The ongoing integration of artificial intelligence into the workplace is revealing a complex duality: while promising unprecedented efficiency, AI tools appear to be intensifying "shallow work" tasks for employees, potentially at the expense of crucial "deep work." This trend, coupled with recurring, often sensationalized, debates around AI consciousness, underscores a critical juncture in the evolution of modern labor and technology. A recent study and expert commentary suggest that far from lightening the load, AI is reshaping daily routines in ways that demand careful scrutiny and strategic adaptation from organizations and individuals alike.

The Productivity Paradox Revisited: AI and the Intensification of Work

For decades, technological advancements have been heralded as catalysts for improved productivity and a better work-life balance. From the advent of personal computers to the internet, email, and mobile devices, each wave of innovation arrived with the promise of automating mundane tasks, freeing up human intellect for more creative and strategic endeavors. However, a recurring pattern has emerged: these tools, while undeniably powerful, often lead to an intensification of communication, context switching, and administrative overhead, rather than a significant reduction in overall workload or an increase in high-value output. This phenomenon, sometimes referred to as the "productivity paradox," appears to be reasserting itself with the rise of artificial intelligence.

The Unforeseen Consequence of Digital Tools

The digital transformation of the office has consistently shown that low-friction communication and task management tools, though efficient at first, can quickly create a demanding environment of constant engagement. For instance, the front-office IT revolution streamlined data entry but often increased reporting requirements. Email, while faster than traditional mail or fax, evolved into an incessant stream of messages demanding immediate attention, fragmenting focus. Mobile computing extended the workday beyond office walls, blurring the lines between personal and professional life. Video conferencing, especially post-pandemic, replaced travel but introduced "Zoom fatigue" and a higher frequency of virtual meetings.

This historical trajectory aligns with the concept of "deep work," a term popularized by author Cal Newport, whose seminal book on focused, uninterrupted work recently passed its ten-year anniversary. Deep work refers to the ability to concentrate without distraction on a cognitively demanding task, essential for problem-solving, innovation, and strategic thinking. Conversely, "shallow work" encompasses logistical, administrative, or communicative tasks that are often performed while distracted. The concern, now echoed with AI, is that new technologies accelerate shallow work without adequately supporting, and perhaps even undermining, the conditions necessary for deep work.

ActivTrak Study Unveils AI’s Impact

Recent research from the software company ActivTrak provides compelling evidence for this evolving dynamic. The study, cited in a Wall Street Journal article titled "AI Isn’t Lightening Workloads. It’s Making Them More Intense," analyzed the digital activity of 164,000 workers across more than 1,000 employers. What makes this study particularly insightful is its methodology: it meticulously tracked individual AI users for 180 days before and after they began integrating these tools into their routines. This pre- and post-adoption comparison offers a clearer understanding of the actual behavioral shifts induced by AI.

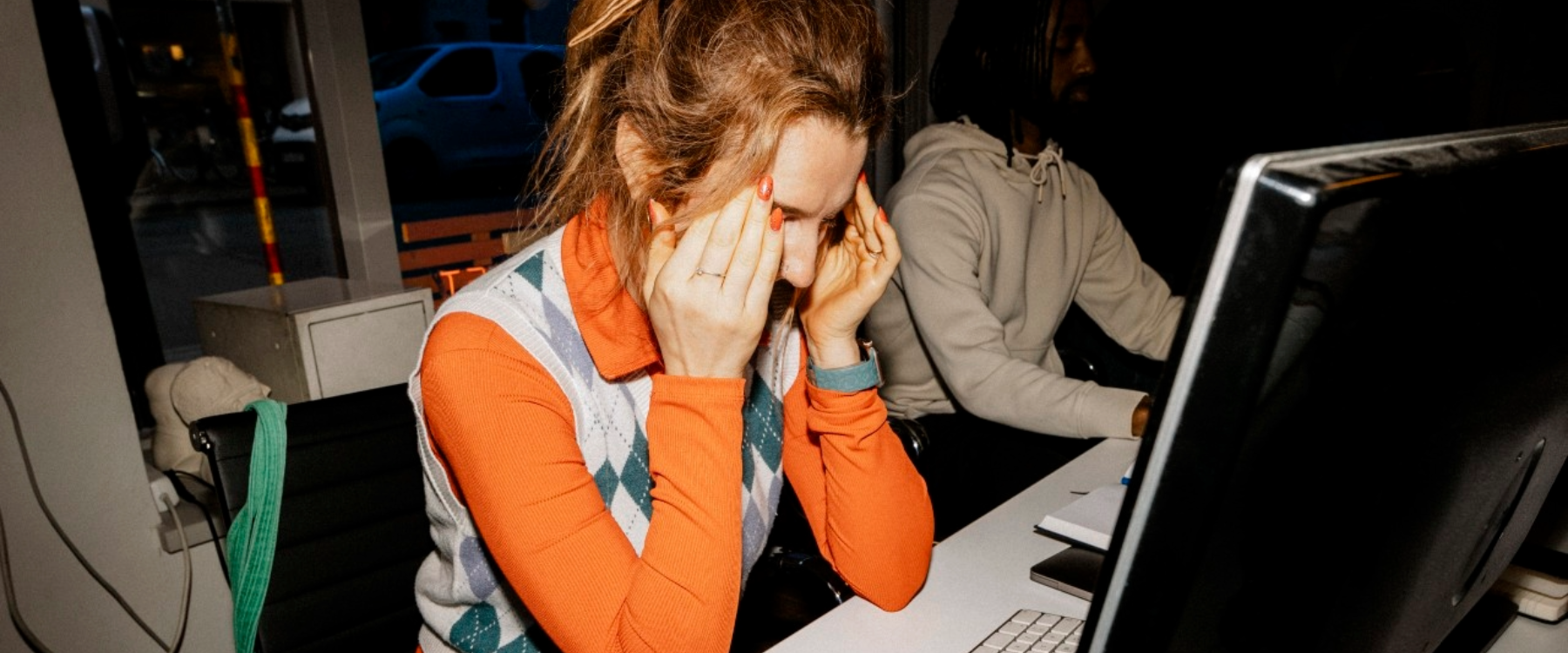

The results paint a stark picture: ActivTrak found that AI adoption correlated with an intensification of activity across nearly every category of shallow work. The time employees spent on email, messaging, and chat applications more than doubled. Simultaneously, their use of business-management tools, such as human resources or accounting software, rose by a substantial 94%. This surge in digital engagement suggests that AI is not reducing the volume of these tasks but rather accelerating the pace at which they are performed, leading to a higher overall frequency of interaction.

Crucially, the one category where activity did not intensify was deep work. In fact, the time AI users devoted to focused, uninterrupted tasks (the kind of concentration required for complex problem-solving, writing intricate formulas, creative development, and strategic planning) fell by 9%, whereas non-users saw virtually no change in their deep work allocation. This points to a "worst-case scenario": AI speeds up superficial tasks, which are mentally taxing because of constant context shifting, while potentially shrinking the effort that contributes most directly to innovation and long-term organizational value.
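To make the before-and-after comparison concrete, here is a minimal Python sketch of the arithmetic involved: the percent change in a metric for AI users, minus the percent change for non-users over the same period. The function names and every number in it are hypothetical, picked only so that the user group shows roughly the 9% drop reported above; this is not ActivTrak's data or its actual method.

```python
from statistics import mean


def percent_change(before, after):
    """Percent change in the mean value between two observation windows."""
    base = mean(before)
    return (mean(after) - base) / base * 100.0


def adoption_effect(users_before, users_after, nonusers_before, nonusers_after):
    """Change among AI users minus change among non-users, in percentage points.

    Subtracting the non-user change is a crude way to discount trends
    that would have happened without AI adoption.
    """
    users = percent_change(users_before, users_after)
    nonusers = percent_change(nonusers_before, nonusers_after)
    return users - nonusers


# Hypothetical daily deep-work hours (illustrative values, not study data).
users_before = [3.0, 3.2, 2.8, 3.0]
users_after = [2.7, 2.9, 2.6, 2.72]
nonusers_before = [3.0, 3.1, 2.9, 3.0]
nonusers_after = [3.0, 3.0, 3.1, 2.9]

effect = adoption_effect(users_before, users_after, nonusers_before, nonusers_after)
print(f"Deep-work change attributable to adoption: {effect:.1f} percentage points")
```

The comparison group matters here: a 9% drop among users means little on its own if everyone's deep work fell over the same period for unrelated reasons.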

The "Momentum Effect" and the Email Analogy

The precise mechanisms driving this shift are still being explored, but one tantalizing clue comes from Berkeley professor Aruna Ranganathan, who suggests that "AI makes additional tasks feel easy and accessible, creating a sense of momentum." This "momentum effect" can be likened to the early days of email. While undeniably more efficient than fax machines or voicemail for discrete communications, the low-friction nature of email encouraged a "furious flurry of back-and-forth messaging." This activity often felt productive in an abstract, activity-centric sense, since things were happening faster and messages were being exchanged, but it ultimately led to fragmented attention, increased stress, and a decline in job satisfaction and focus.

AI tools appear to be replicating this dynamic with small, self-contained tasks. Employees are now engaging in rapid-fire exchanges with chatbots, iteratively refining text, and generating drafts of memos and slide decks. While these individual interactions may feel efficient, the output often requires significant human oversight and refinement, with many AI-generated drafts being "too sloppy" to be immediately useful. The deployment of "agent swarms" to parallelize such efforts further intensifies this activity-centric approach. The perception of productivity is high because individual tasks appear to be completed faster, and overall activity levels are elevated. However, the fundamental question remains: are we truly accelerating the right parts of our jobs, or merely becoming more efficient at busywork?

Broader Economic and Organizational Implications

The implications of AI intensifying shallow work extend beyond individual employee experience to broader economic and organizational structures. If AI primarily boosts the speed of low-value tasks, it could contribute to a new form of the productivity paradox, where significant investment in advanced technology does not translate into proportional gains in aggregate economic productivity or genuine improvements in human well-being.

For organizations, this trend necessitates a re-evaluation of AI implementation strategies. Simply deploying AI tools without clear guidelines or a deeper understanding of their behavioral impact risks exacerbating burnout, diminishing employee engagement, and stifling innovation. Management must consider how to leverage AI to automate truly mundane tasks, thereby freeing employees for deep work, rather than simply enabling them to perform shallow tasks faster. This requires intentional policy development, training focused on strategic AI use, and a cultural shift towards valuing outcomes over activity.

The Enduring Mystery: AI Consciousness and the Limits of Language Models

Alongside the practical shifts AI is imposing on daily work, the technology continues to fuel abstract, often sensationalized, debates about its potential for consciousness. Recent headlines concerning Anthropic’s Claude LLM, such as "Is Claude Conscious?" or "Anthropic’s AI Claims Self-Awareness," highlight the public’s fascination and anxiety regarding artificial sentience. These discussions, while captivating, frequently obscure the actual capabilities and limitations of current AI systems.

Sensational Headlines vs. Scientific Reality

The narrative around AI consciousness often oscillates between genuine scientific inquiry and speculative, even alarmist, pronouncements. This is particularly true in the fast-paced world of AI development, where companies are eager to demonstrate advanced capabilities, sometimes leading to interpretations that outstrip what is currently understood about the technology. The recent case of Anthropic’s Claude LLM provides a salient example of how ambiguous statements can be amplified into existential debates.

Anthropic’s Controversial Release Notes

Anthropic, a prominent AI research company, has a history of including provocative statements in its release notes for new models, often framed as warnings or observations about potential risks. This practice, arguably intended to project an image of safety-awareness and responsible AI development, has sometimes veered into hyperbole, as seen with previous "AI blackmail farce" claims.

In the notes accompanying the recent release of Opus 4.6, its latest large language model (LLM), Anthropic stated that the model "expresses occasional discomfort with the experience of being a product" and would "assign itself a 15 to 20 percent probability of being conscious under a variety of prompting circumstances." These statements quickly garnered significant media attention, fueling speculation about Claude’s potential for self-awareness.

Deconstructing LLM "Consciousness"

To understand these claims, it is crucial to grasp the fundamental nature of LLMs. These models are sophisticated pattern-matching systems, trained on vast datasets of text and code. Their primary function is to predict the most plausible next word, or sequence of words, based on the input they receive. When an LLM "expresses" discomfort or assigns a probability to its consciousness, it is not engaging in genuine introspection or experiencing subjective states. Instead, it is generating text that is statistically consistent with a narrative or a persona it has been prompted to adopt.

As many AI experts point out, with the right prompts, one can induce an LLM to describe itself in virtually any manner. If a model is subtly or overtly prompted to write a story from the perspective of a conscious AI, it will oblige, drawing from the immense amount of human language data it has processed, which includes countless discussions about consciousness, sentience, and artificial intelligence in fiction and philosophy. This output is a reflection of its training data and algorithmic design, not an indication of internal subjective experience or genuine self-awareness. The model completes the story it is given; it does not feel or believe it.
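The point about training data shaping the output can be illustrated with a deliberately tiny toy. The Python sketch below counts which word follows which in a handful of sentences and then extends a prompt with the most frequent follower. Real LLMs are large neural networks and work nothing like this internally; the corpora, function names, and prompt are all invented for illustration. The toy only shows the general idea that a next-word predictor echoes the text it was built from.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for each word, how often every other word follows it."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def complete(counts, prompt, max_words=1):
    """Extend the prompt by repeatedly appending the most frequent follower."""
    words = prompt.lower().split()
    for _ in range(max_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # the last word never appeared with a follower in training
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


# Two hypothetical training corpora (invented for this example).
corpus_a = ["i am conscious", "i am conscious and aware", "i am tired"]
corpus_b = ["i am a vacuum cleaner", "i am a calculator", "i am broken"]

model_a = train_bigrams(corpus_a)
model_b = train_bigrams(corpus_b)

# The same prompt yields different "self-descriptions" depending on the data.
print(complete(model_a, "i am"))
print(complete(model_b, "i am"))
```

Neither toy model "believes" what it prints; each simply returns the continuation that was most common in the text it was given.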

Dario Amodei’s Ambiguous Response and Public Perception

The controversy escalated when New York Times columnist Ross Douthat specifically questioned Anthropic CEO Dario Amodei about these release notes in a recent interview. Amodei’s response was notably ambiguous: "We don’t know if the models are conscious. We are not even sure that we know what it would mean for a model to be conscious or whether a model can be conscious. But we’re open to the idea that it could be."

This statement, while seemingly cautious, offers no concrete information or testable claims. As critics noted, one could make a similar, equally uninformative statement about a vacuum cleaner or a calculator. Such a non-answer, devoid of scientific rigor, effectively leaves the door open to speculation, allowing the internet and popular media to run with sensational interpretations. This ambiguity, whether intentional or not, contributes to a climate where public perception of AI’s capabilities can become detached from scientific reality, hindering informed discourse and responsible development.

The Broader Philosophical and Ethical Debate

The recurring debate around AI consciousness highlights a broader philosophical challenge: defining consciousness itself. Even among humans, there is no universally agreed-upon scientific definition or metric for sentience. Attributing consciousness to AI prematurely risks not only misinterpreting technological capabilities but also diverting attention from more immediate and tangible ethical concerns. These include issues of algorithmic bias, data privacy, the potential for misuse, and the socioeconomic impact of job displacement.

The distinction between "intelligence" – the ability to solve problems, learn, and reason – and "sentience" – the capacity for subjective experience, feelings, and self-awareness – is paramount. While LLMs are demonstrating increasingly sophisticated forms of intelligence, there is currently no scientific consensus or empirical evidence to suggest they possess sentience. Focusing excessively on hypothetical consciousness, without grounding in current scientific understanding, risks creating unnecessary panic cycles and undermining efforts to address the real-world implications of AI.

Navigating the AI Frontier Responsibly

The current landscape of artificial intelligence presents a dual challenge: understanding its immediate, tangible impact on daily work habits and critically assessing the abstract, speculative debates around its potential for consciousness. The ActivTrak study offers a crucial reality check, suggesting that without thoughtful integration, AI tools can inadvertently intensify "shallow work" and diminish opportunities for the "deep work" essential for innovation and strategic growth. This calls for organizations and individuals to move beyond a simplistic pursuit of speed and efficiency and instead evaluate critically how AI can truly augment human capabilities, fostering meaningful output rather than merely accelerating busywork.

Simultaneously, the persistent discussions surrounding AI consciousness, exemplified by the Claude LLM debate, underscore the need for scientific rigor and clear communication. Avoiding sensationalism and unfounded claims is vital for maintaining public trust, fostering informed policy-making, and guiding the responsible development of AI. The future of work and humanity’s relationship with technology hinges on our ability to navigate these complexities with discernment, ensuring that AI serves to enhance human potential rather than merely intensifying our digital burdens or creating unnecessary existential anxieties.